Daily Edition

The expanded edition keeps the full analyst notes, paper breakdowns, geopolitical framing, and the complete feed selected for this run.

Topic of the day.

A dedicated daily topic chosen from the strongest signals in the run, with a TL;DR, why-now framing, and a fuller analyst read.

Industrial Code Models: InCoder-32B and Hardware-Aware Programming

TL;DR: InCoder-32B is a 32B-parameter code foundation model trained on industrial data with extended context and execution verification, achieving strong performance on general code tasks and establishing open-source baselines for hardware-aware programming.

Why now: As AI coding assistants move into industrial settings like chip design and embedded systems, models must reason about hardware semantics and strict resource constraints, which general-purpose LLMs struggle with.

InCoder-32B combines general code pre-training with curated industrial annealing and progressive context extension to 128K tokens. Execution-grounded verification post-training improves reliability for hardware-aware tasks. Evaluation on 14 general and 9 industrial benchmarks shows competitive general performance and strong industrial baselines. The model’s open-source release enables community-driven advancement in domains such as GPU kernel optimization and compiler design.
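A minimal sketch of what execution-grounded verification can look like, assuming its simplest form: execute each candidate program against a handful of test cases and keep only the candidates that pass. The `solve` entry point, the cache-line rounding task, and the candidate strings are hypothetical illustrations; the summary does not describe InCoder-32B's actual verification pipeline, and a real system would run untrusted code in a sandbox rather than with `exec`.

```python
from typing import Callable

def passes_tests(source: str, tests: list[tuple[tuple, object]], entry: str = "solve") -> bool:
    """Execute a candidate program and check it against (args, expected) pairs.

    NOTE: exec() runs untrusted code in-process; a real pipeline would sandbox it.
    """
    namespace: dict = {}
    try:
        exec(source, namespace)
        fn: Callable = namespace[entry]
        return all(fn(*args) == expected for args, expected in tests)
    except Exception:
        # Syntax errors, a missing entry point, and runtime failures all count as rejection.
        return False

def verify_candidates(candidates: list[str], tests) -> list[str]:
    """Keep only candidates whose execution matches every expected output."""
    return [src for src in candidates if passes_tests(src, tests)]

# Hypothetical task: round a byte count up to a 64-byte cache line.
tests = [((0,), 0), ((1,), 64), ((64,), 64), ((65,), 128)]
candidates = [
    "def solve(n):\n    return (n + 63) // 64 * 64",  # correct
    "def solve(n):\n    return n // 64 * 64",         # rounds down: fails
    "def solve(n) return n",                          # syntax error: fails
]
kept = verify_candidates(candidates, tests)
print(len(kept))  # prints 1
```

Candidates that fail to compile, crash, or return a wrong value are all rejected the same way, which is what makes execution a stricter filter than judging code by how it reads.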

- AI News: OpenAI’s Frontier puts AI agents in a fight SaaS can’t afford to lose, which matters because it signals momentum in agent, agents,...

- AI News: BMW puts humanoid robots to work in Germany–and Europe’s factories are watching, which matters because it affects the...

- The Decoder: Hua Hong becomes the second Chinese chipmaker to crack 7nm manufacturing as Beijing pushes for AI independence.

- OpenAI’s Frontier puts AI agents in a fight SaaS can’t afford to lose (AI News | 03/16/2026)

- BMW puts humanoid robots to work in Germany–and Europe’s factories are watching (AI News | 03/13/2026)

- Hua Hong becomes the second Chinese chipmaker to crack 7nm manufacturing as Beijing pushes for AI independence (The Decoder | 03/16/2026)

Policy, chips, capital, and power.

Industrial strategy, compute supply, export controls, and big-company positioning shaping the AI balance of power.

Holotron-12B - High Throughput Computer Use Agent

A blog post by H Company on Hugging Face.

Holotron-12B - High Throughput Computer Use Agent matters because it affects the policy, supply-chain, or security constraints around AI development, especially around compute and agents.

- Primary signals: compute, agent.

- Source context: Hugging Face Blog published or updated this item on 03/17/2026.

State of Open Source on Hugging Face: Spring 2026

A blog post by Hugging Face.

State of Open Source on Hugging Face: Spring 2026 matters because it affects the policy, supply-chain, or security constraints around AI development, particularly for open-source releases.

- Primary signals: state.

- Source context: Hugging Face Blog published or updated this item on 03/17/2026.

The Pentagon is planning for AI companies to train on classified data, defense official says

Coverage from MIT Technology Review.

The Pentagon is planning for AI companies to train on classified data, defense official says matters because it affects the policy, supply-chain, or security constraints around AI development, especially in defense.

- Primary signals: defense.

- Source context: MIT Tech Review AI published or updated this item on 03/17/2026.

A defense official reveals how AI chatbots could be used for targeting decisions

Coverage from MIT Technology Review.

A defense official reveals how AI chatbots could be used for targeting decisions matters because it affects the policy, supply-chain, or security constraints around AI development, especially in defense and chatbot use.

- Primary signals: defense, chatbot.

- Source context: MIT Tech Review AI published or updated this item on 03/12/2026.

BMW puts humanoid robots to work in Germany–and Europe’s factories are watching

Europe’s factory floors have a new kind of colleague. BMW Group has deployed humanoid robots in manufacturing in Germany for the first time, launching a pilot project at its Leipzig plant with AEON–a wheeled humanoid built by Hexagon Robotics. It is the first automotive...

BMW puts humanoid robots to work in Germany–and Europe’s factories are watching matters because it affects the policy, supply-chain, or security constraints around AI development, especially in Europe and robotics.

- Primary signals: europe, robotics.

- Source context: AI News published or updated this item on 03/13/2026.

Product, model, and platform movement.

Software, model, deployment, and competitive stories with the strongest operator and market signal in this edition.

OpenAI’s Frontier puts AI agents in a fight SaaS can’t afford to lose

When OpenAI launched Frontier in February, the announcement was described as a platform for enterprise AI agents. What it actually signalled was a challenge to the revenue architecture underpinning the software industry. Frontier is designed to act as a semantic layer in an...

OpenAI’s Frontier puts AI agents in a fight SaaS can’t afford to lose matters because it signals momentum in enterprise AI agents and may shift how teams prioritize models, tooling, or deployment choices.

- Primary signals: agent, agents, frontier.

- Source context: AI News published or updated this item on 03/16/2026.

Trustpilot partners with AI companies as traditional search declines

Trustpilot is reported to be pursuing partnerships with large eCommerce companies as AI-driven shopping gains traction. In an interview with Bloomberg News [paywall], chief executive Adrian Blair said that AI agents acting on behalf of consumers require lots of information...

Trustpilot partners with AI companies as traditional search declines matters because it signals momentum in AI agents and may shift how teams prioritize models, tooling, or deployment choices.

- Primary signals: agent, agents.

- Source context: AI News published or updated this item on 03/17/2026.

NVIDIA AI Releases Nemotron-Terminal: A Systematic Data Engineering Pipeline for Scaling LLM Terminal Agents

Coverage from MarkTechPost.

NVIDIA AI Releases Nemotron-Terminal: A Systematic Data Engineering Pipeline for Scaling LLM Terminal Agents matters because it signals momentum in LLM agents and may shift how teams prioritize models, tooling, or deployment choices.

- Primary signals: agent, agents, llm.

- Source context: MarkTechPost published or updated this item on 03/10/2026.

NTT DATA and NVIDIA bring enterprise AI factories to production scale

NTT DATA has announced an initiative to deliver NVIDIA-powered platforms designed to give organisations a repeatable, production-ready model for scaling AI. The offering integrates NVIDIA’s GPU-accelerated computing and high-performance networking with NVIDIA AI Enterprise...

NTT DATA and NVIDIA bring enterprise AI factories to production scale matters because it signals momentum in enterprise AI deployment and may shift how teams prioritize models, tooling, or deployment choices.

- Primary signals: agent, model.

- Source context: AI News published or updated this item on 03/16/2026.

FOD#144: New Scaling Law? What “Agentic Scaling” Is – Inside NVIDIA’s Biggest Idea at GTC 2026

Coverage from Turing Post.

FOD#144: New Scaling Law? What “Agentic Scaling” Is – Inside NVIDIA’s Biggest Idea at GTC 2026 matters because it signals momentum in AI agents and may shift how teams prioritize models, tooling, or deployment choices.

- Primary signals: agent.

- Source context: Turing Post published or updated this item on 03/17/2026.

Differentiated source coverage.

Stories drawn from research blogs, first-party lab posts, practitioner newsletters, and selected technical outlets so the edition does not mirror the same headline across every source.

Identifying Interactions at Scale for LLMs

Understanding the behavior of complex machine learning systems, particularly Large Language Models (LLMs), is a critical challenge in modern artificial intelligence. Interpretability research aims to make the decision-making process more transparent to model builders and...

Identifying Interactions at Scale for LLMs matters because it signals momentum in LLM interpretability and may shift how teams prioritize models, tooling, or deployment choices.

- Primary signals: llm, model.

- Source context: BAIR Blog published or updated this item on 03/13/2026.

Nemotron 3 Nano 4B: A Compact Hybrid Model for Efficient Local AI

A Blog post by NVIDIA on Hugging Face

Nemotron 3 Nano 4B: A Compact Hybrid Model for Efficient Local AI matters because it signals momentum in compact models and may shift how teams prioritize models, tooling, or deployment choices.

- Primary signals: model.

- Source context: Hugging Face Blog published or updated this item on 03/17/2026.

Why Codex Security Doesn’t Include a SAST Report

A research post from OpenAI.

Why Codex Security Doesn’t Include a SAST Report matters because it affects the policy, supply-chain, or security constraints around AI development, especially in security.

- Primary signals: security.

- Source context: OpenAI Research published or updated this item on 03/16/2026.

Measuring AI agent autonomy in practice

A research post from Anthropic.

Measuring AI agent autonomy in practice matters because it signals momentum in AI agents and may shift how teams prioritize models, tooling, or deployment choices.

- Primary signals: agent.

- Source context: Anthropic Research published or updated this item on 02/18/2026.

Google AI Releases a CLI Tool (gws) for Workspace APIs: Providing a Unified Interface for Humans and AI Agents

Coverage from MarkTechPost.

Google AI Releases a CLI Tool (gws) for Workspace APIs: Providing a Unified Interface for Humans and AI Agents matters because it signals momentum in AI agents and may shift how teams prioritize models, tooling, or deployment choices.

- Primary signals: agent, agents.

- Source context: MarkTechPost published or updated this item on 03/05/2026.

US Treasury publishes AI risk Guidebook for financial institutions

The US Treasury has published several documents designed for the US financial services sector that suggest a structured approach to managing AI risks in operations and policy (see the subheading ‘Resources and Downloads’ towards the bottom of the linked page). The CRI Financial Services...

US Treasury publishes AI risk Guidebook for financial institutions matters because it affects the policy, supply-chain, or security constraints around AI development, especially in financial-sector policy.

- Primary signals: policy.

- Source context: AI News published or updated this item on 03/16/2026.

QuantumBlack: A Global Force in Agentic AI Transformation

Coverage from AI Magazine.

QuantumBlack: A Global Force in Agentic AI Transformation matters because it signals momentum in agentic AI and may shift how teams prioritize models, tooling, or deployment choices.

- Primary signals: agent.

- Source context: AI Magazine published or updated this item on 03/16/2026.

Where OpenAI’s technology could show up in Iran

Coverage from MIT Technology Review.

Where OpenAI’s technology could show up in Iran matters because it signals momentum in the broader AI ecosystem and may shift how teams prioritize models, tooling, or deployment choices.

- Primary signals: AI platforms and product execution.

- Source context: MIT Tech Review AI published or updated this item on 03/16/2026.

Method, limitations, and results.

Paper summaries, methodology notes, limitations, and deep-dive bullets for the research items selected into the digest.

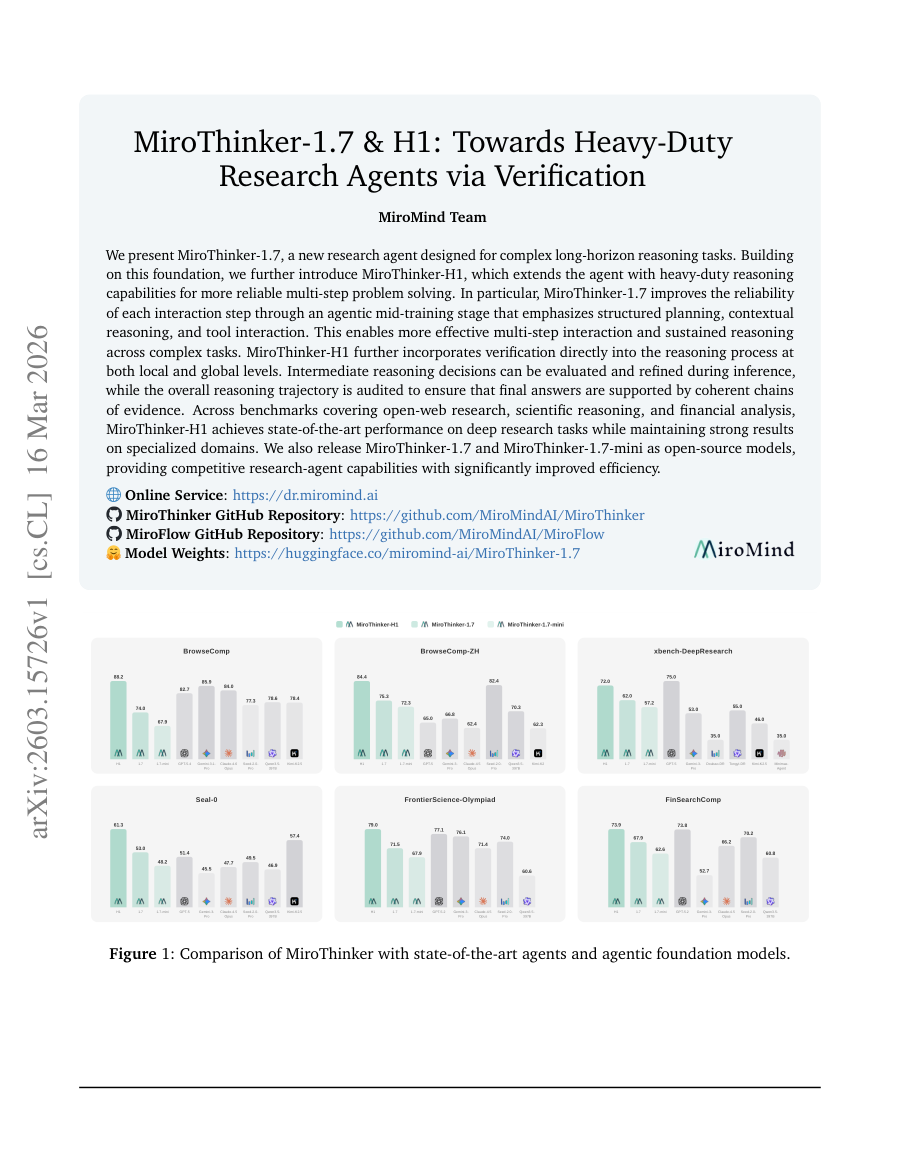

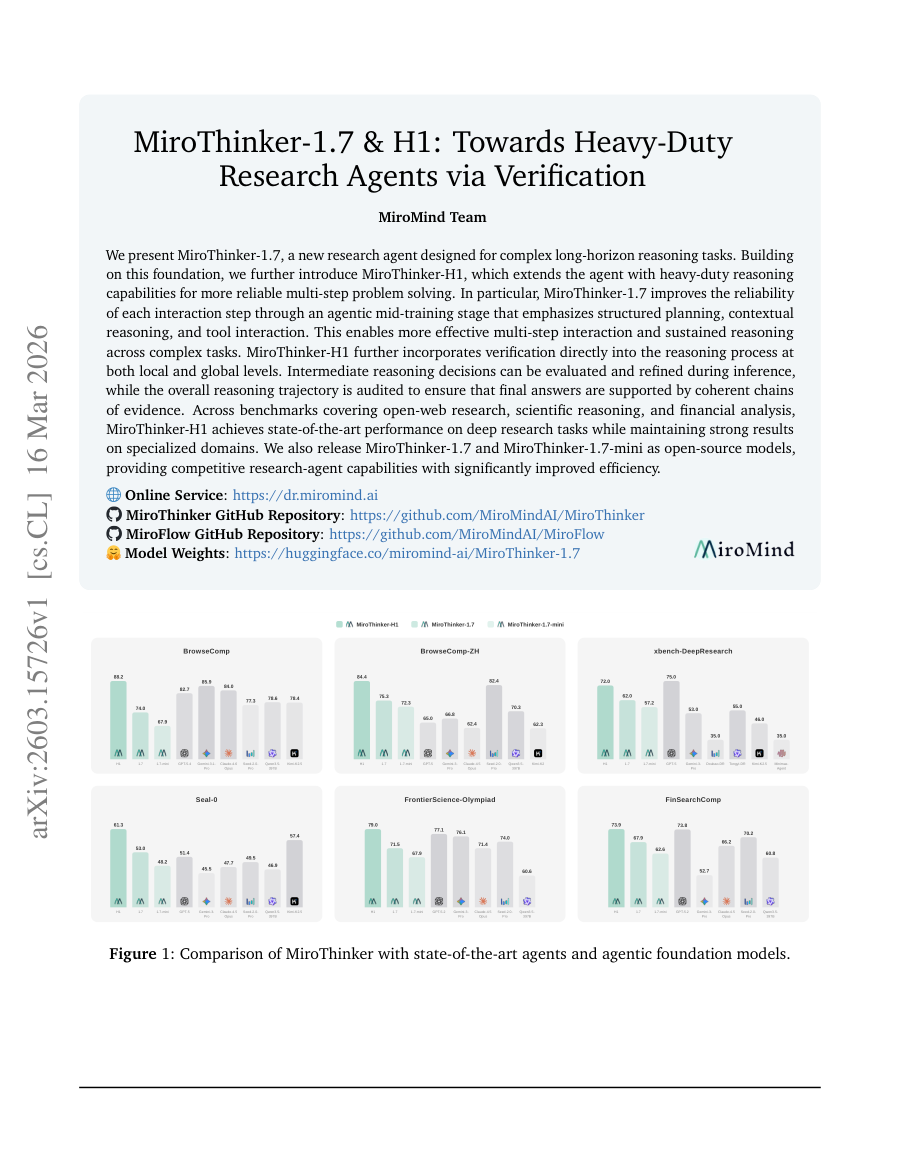

MiroThinker-1.7 & H1: Towards Heavy-Duty Research Agents via Verification

TL;DR: MiroThinker-1.7 and MiroThinker-H1 are research agents that enhance complex reasoning through structured planning, contextual reasoning, and tool interaction, with MiroThinker-H1 incorporating verification at local and global levels for more reliable multi-step problem...

We present MiroThinker-1.7, a new research agent designed for complex long-horizon reasoning tasks.

In particular, MiroThinker-1.7 improves the reliability of each interaction step through an agentic mid-training stage that emphasizes structured planning, contextual reasoning, and tool interaction.

The reported improvement still needs a closer check on benchmark scope, ablations, and whether the method keeps working outside the authors' evaluation setup.
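The two-level verification attributed to MiroThinker-H1 can be illustrated with a toy Python loop, under the assumption that a local verifier checks each intermediate step (triggering a retry on failure) and a global verifier checks the finished trajectory before its answer is accepted. `propose_step`, `local_ok`, and `global_ok` are hypothetical stand-ins on a running-sum task, not the authors' components.

```python
from dataclasses import dataclass, field
from typing import Optional

@dataclass
class Trajectory:
    steps: list[int] = field(default_factory=list)  # values accepted so far

def propose_step(prev: int, x: int, attempt: int) -> int:
    """Hypothetical step generator: its first attempt on odd inputs is off by one."""
    return prev + x + (1 if attempt == 0 and x % 2 else 0)

def local_ok(prev: int, x: int, value: int) -> bool:
    """Hypothetical local verifier: checks one step in isolation."""
    return value == prev + x

def global_ok(numbers: list[int], traj: Trajectory) -> bool:
    """Hypothetical global verifier: checks the trajectory's final answer end to end."""
    return bool(traj.steps) and traj.steps[-1] == sum(numbers)

def run_agent(numbers: list[int], max_retries: int = 3) -> tuple[Optional[int], int]:
    """Return (accepted answer or None, number of steps rejected by the local verifier)."""
    traj, prev, rejections = Trajectory(), 0, 0
    for x in numbers:
        for attempt in range(max_retries):
            value = propose_step(prev, x, attempt)
            if local_ok(prev, x, value):
                break
            rejections += 1
        else:
            return None, rejections  # no attempt passed local verification
        traj.steps.append(value)
        prev = value
    # Accept the answer only if the whole trajectory also passes global verification.
    answer = traj.steps[-1] if global_ok(numbers, traj) else None
    return answer, rejections

print(run_agent([2, 3, 4, 5]))  # prints (14, 2)
```

The local check catches step-level slips early and cheaply; the global check is the last line of defence against errors that look fine step by step but compound into a wrong final answer.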

- Problem framing: MiroThinker-1.7 and MiroThinker-H1 are research agents that enhance complex reasoning through structured planning, contextual reasoning, and tool interaction, with MiroThinker-H1 incorporating verification at local and global levels for...

- Method signal: We present MiroThinker-1.7, a new research agent designed for complex long-horizon reasoning tasks.

- Evidence to watch: In particular, MiroThinker-1.7 improves the reliability of each interaction step through an agentic mid-training stage that emphasizes structured planning, contextual reasoning, and tool interaction.

- Read-through priority: the PDF is available on Hugging Face Papers / arXiv, so this is a good candidate for checking tables, ablations, and scaling tradeoffs beyond the abstract.

- Community traction: Hugging Face Papers shows 53 votes for this paper.

InCoder-32B: Code Foundation Model for Industrial Scenarios

TL;DR: InCoder-32B is a 32-billion-parameter code model trained on industrial datasets with extended context length and execution verification to improve performance in hardware-aware programming tasks.

Recent code large language models have achieved remarkable progress on general...

To address these challenges, we introduce InCoder-32B (Industrial-Coder-32B), the first 32B-parameter code foundation model unifying code intelligence across chip design, GPU kernel optimization, embedded systems, compiler optimization, and 3D modeling.

The reported improvement still needs a closer check on benchmark scope, ablations, and whether the method keeps working outside the authors' evaluation setup.

- Problem framing: InCoder-32B is a 32-billion-parameter code model trained on industrial datasets with extended context length and execution verification to improve performance in hardware-aware programming tasks.

- Method signal: To address these challenges, we introduce InCoder-32B (Industrial-Coder-32B), the first 32B-parameter code foundation model unifying code intelligence across chip design, GPU kernel optimization, embedded systems, compiler optimization, and...

- Evidence to watch: the evaluation spans 14 general and 9 industrial benchmarks, with competitive general performance and strong industrial baselines reported.

- Read-through priority: the PDF is available on Hugging Face Papers / arXiv, so this is a good candidate for checking tables, ablations, and scaling tradeoffs beyond the abstract.

- Community traction: Hugging Face Papers shows 74 votes for this paper.

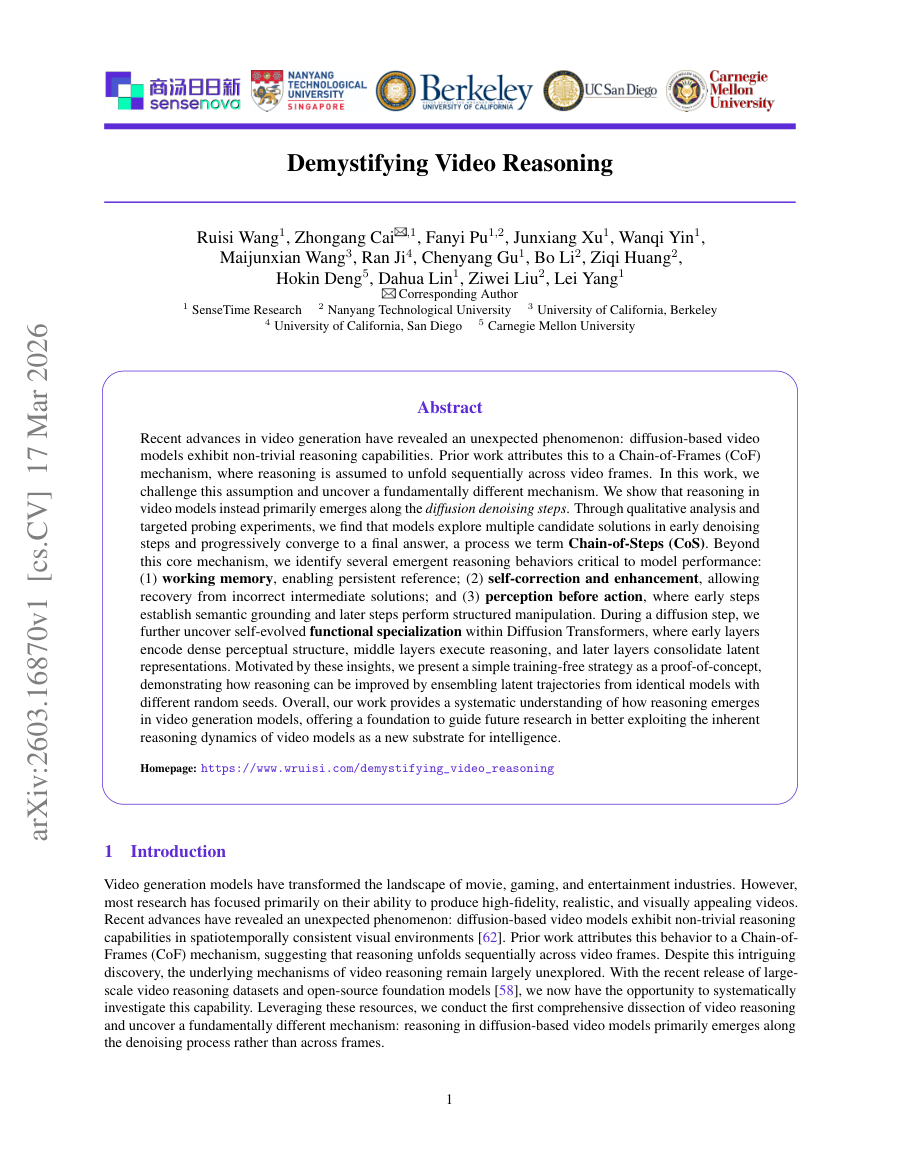

Demystifying Video Reasoning

TL;DR: Diffusion-based video models demonstrate reasoning capabilities through denoising steps rather than frame sequences, exhibiting behaviors like working memory, self-correction, and perception-before-action within specialized transformer layers.

Recent advances in video...

In this work, we challenge this assumption and uncover a fundamentally different mechanism.

Motivated by these insights, we present a simple training-free strategy as a proof-of-concept, demonstrating how reasoning can be improved by ensembling latent trajectories from identical models with different random seeds.

The summary does not include concrete numbers, so the practical size of the gain and the tradeoff against latency or data cost are still unclear.
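The training-free proof-of-concept, ensembling latent trajectories from identical models run with different random seeds, can be sketched with a toy denoiser. This assumes the simplest reading of the idea: run the same update from several seeded noise initialisations and average the latents after every denoising step. `denoise_step` and `TARGET` are hypothetical 1-D stand-ins for a video diffusion model, and the per-step averaging rule is an assumption, not the paper's exact procedure.

```python
import random

# Hypothetical "clean" latent the toy denoiser is pulled toward.
TARGET = [1.0, -0.5, 0.25]

def denoise_step(latent, rng, t, steps):
    """Toy denoiser: move 30% of the way toward TARGET, plus noise that shrinks to zero."""
    noise_scale = 0.5 * (1 - (t + 1) / steps)
    return [z + 0.3 * (g - z) + rng.gauss(0.0, noise_scale) for z, g in zip(latent, TARGET)]

def sample(seed, steps=10):
    """One denoising trajectory from a single random seed."""
    rng = random.Random(seed)
    latent = [rng.gauss(0.0, 1.0) for _ in TARGET]
    for t in range(steps):
        latent = denoise_step(latent, rng, t, steps)
    return latent

def ensemble_sample(seeds, steps=10):
    """Run one trajectory per seed and average the latents after every denoising step."""
    rngs = [random.Random(s) for s in seeds]
    latents = [[rng.gauss(0.0, 1.0) for _ in TARGET] for rng in rngs]
    for t in range(steps):
        latents = [denoise_step(z, rng, t, steps) for z, rng in zip(latents, rngs)]
        mean = [sum(col) / len(col) for col in zip(*latents)]
        # Every trajectory continues from the shared mean, with its own seed's noise.
        latents = [list(mean) for _ in rngs]
    return latents[0]

def sq_error(latent):
    """Squared distance from the toy target."""
    return sum((z - g) ** 2 for z, g in zip(latent, TARGET))

seeds = [0, 1, 2, 3]
print([round(sq_error(sample(s)), 3) for s in seeds], round(sq_error(ensemble_sample(seeds)), 3))
```

With a single seed, `ensemble_sample` reduces to `sample`; with several seeds, the per-step averaging damps the independent noise from each trajectory. Whether that translates into better reasoning in a real video model is what the paper's experiments would need to show.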

- Problem framing: the authors challenge the assumption that video models reason across frame sequences, arguing instead that reasoning unfolds along the denoising steps.

- Method signal: Motivated by these insights, we present a simple training-free strategy as a proof-of-concept, demonstrating how reasoning can be improved by ensembling latent trajectories from identical models with different random seeds.

- Evidence to watch: Diffusion-based video models demonstrate reasoning capabilities through denoising steps rather than frame sequences, exhibiting behaviors like working memory, self-correction, and perception-before-action within specialized transformer...

- Read-through priority: the PDF is available on Hugging Face Papers / arXiv, so this is a good candidate for checking tables, ablations, and scaling tradeoffs beyond the abstract.

- Community traction: Hugging Face Papers shows 47 votes for this paper.

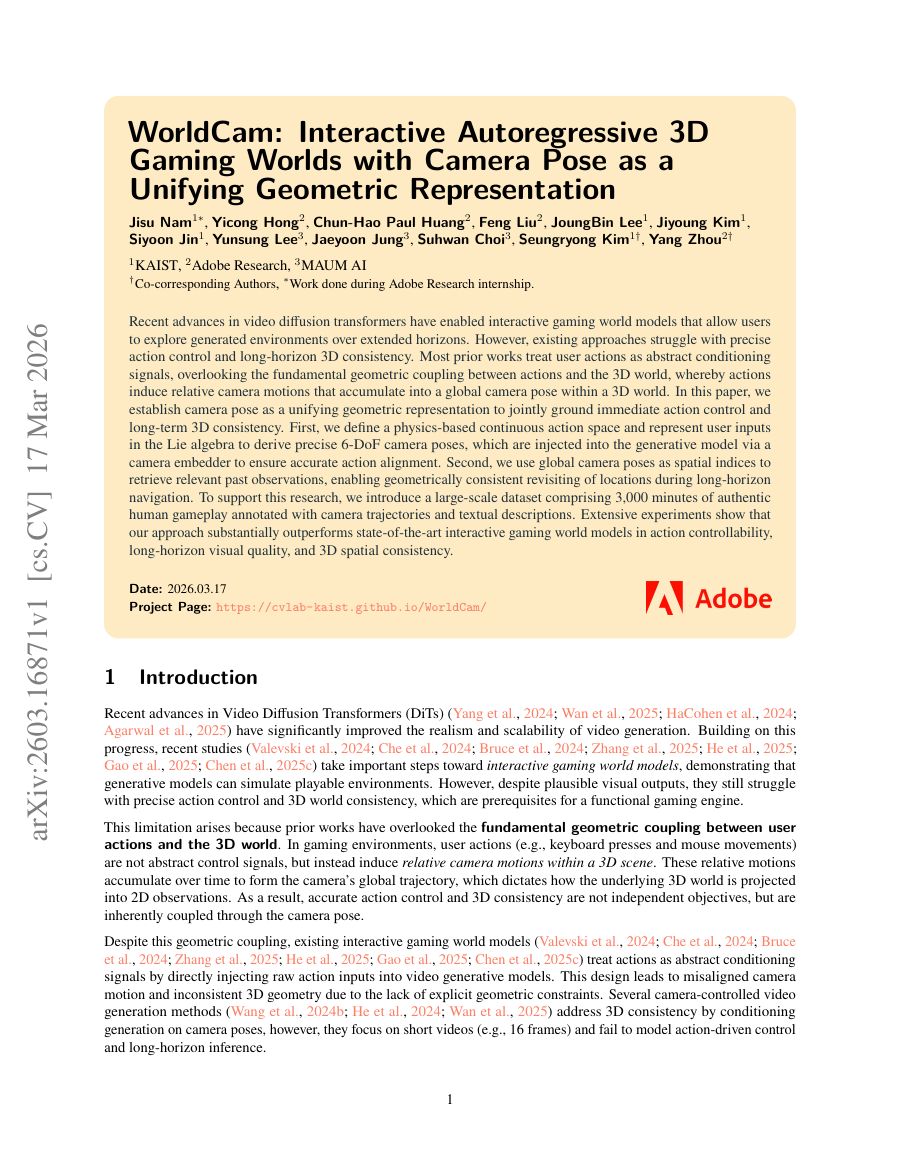

WorldCam: Interactive Autoregressive 3D Gaming Worlds with Camera Pose as a Unifying Geometric Representation

TL;DR: Video diffusion transformers enhanced with camera pose representation enable precise action control and long-term 3D consistency in interactive gaming environments through physics-based action spaces and geometric grounding.

Recent advances in video diffusion transformers...

In this paper, we establish camera pose as a unifying geometric representation to jointly ground immediate action control and long-term 3D consistency.

Extensive experiments show that our approach substantially outperforms state-of-the-art interactive gaming world models in action controllability, long-horizon visual quality, and 3D spatial consistency.

The reported improvement still needs a closer check on benchmark scope, ablations, and whether the method keeps working outside the authors' evaluation setup.
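One way to read "camera pose as a unifying geometric representation" is that every user action (keyboard movement, mouse look) is converted into a camera pose update, so the same pose can condition the next frame and later reveal when the camera returns to a place it has already seen. The minimal Python sketch below assumes a simple first-person action space; the action tuple layout, `MOVE_SPEED`, and the yaw/pitch convention are hypothetical, not WorldCam's.

```python
import math
from dataclasses import dataclass

@dataclass(frozen=True)
class Pose:
    x: float
    y: float
    z: float
    yaw: float    # radians, rotation about the vertical (y) axis; yaw = 0 faces -z
    pitch: float  # radians, clamped so the camera never flips over

MOVE_SPEED = 1.0                 # hypothetical units per step
PITCH_LIMIT = math.radians(89)

def apply_action(pose: Pose, forward: float, strafe: float, d_yaw: float, d_pitch: float) -> Pose:
    """Map a hypothetical (keyboard, mouse) action to the next camera pose."""
    yaw = pose.yaw + d_yaw
    pitch = max(-PITCH_LIMIT, min(PITCH_LIMIT, pose.pitch + d_pitch))
    # Move in the ground plane relative to the new heading.
    dx = MOVE_SPEED * (strafe * math.cos(yaw) - forward * math.sin(yaw))
    dz = MOVE_SPEED * (-strafe * math.sin(yaw) - forward * math.cos(yaw))
    return Pose(pose.x + dx, pose.y, pose.z + dz, yaw, pitch)

def rollout(start: Pose, actions: list[tuple[float, float, float, float]]) -> list[Pose]:
    """Apply actions in order and return every pose, including the start."""
    poses = [start]
    for action in actions:
        poses.append(apply_action(poses[-1], *action))
    return poses

def revisits(poses: list[Pose], tol: float = 1e-6) -> bool:
    """True if the final camera position matches an earlier one."""
    last = poses[-1]
    return any(math.dist((p.x, p.y, p.z), (last.x, last.y, last.z)) < tol for p in poses[:-1])

# Walk forward 3 steps, turn around in place, walk back 3 steps.
actions = [(1, 0, 0, 0)] * 3 + [(0, 0, math.pi, 0)] + [(1, 0, 0, 0)] * 3
poses = rollout(Pose(0.0, 0.0, 0.0, 0.0, 0.0), actions)
print(revisits(poses))  # prints True
```

Because the walk back ends at a previously visited position, a world model conditioned on pose could retrieve what it generated there, which is the kind of long-horizon 3D consistency the abstract emphasizes.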

- Problem framing: Video diffusion transformers enhanced with camera pose representation enable precise action control and long-term 3D consistency in interactive gaming environments through physics-based action spaces and geometric grounding.

- Method signal: In this paper, we establish camera pose as a unifying geometric representation to jointly ground immediate action control and long-term 3D consistency.

- Evidence to watch: Extensive experiments show that our approach substantially outperforms state-of-the-art interactive gaming world models in action controllability, long-horizon visual quality, and 3D spatial consistency.

- Read-through priority: the PDF is available on Hugging Face Papers / arXiv, so this is a good candidate for checking tables, ablations, and scaling tradeoffs beyond the abstract.

- Community traction: Hugging Face Papers shows 36 votes for this paper.

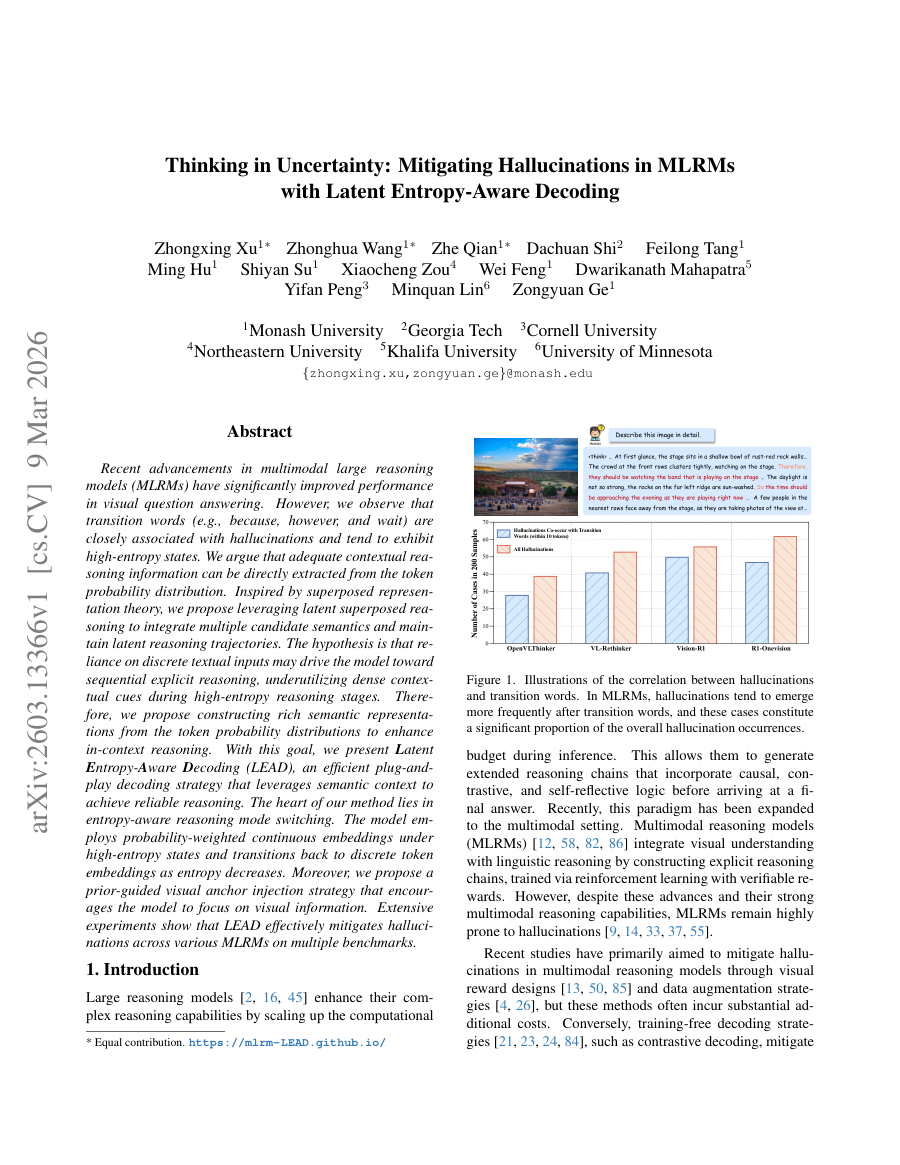

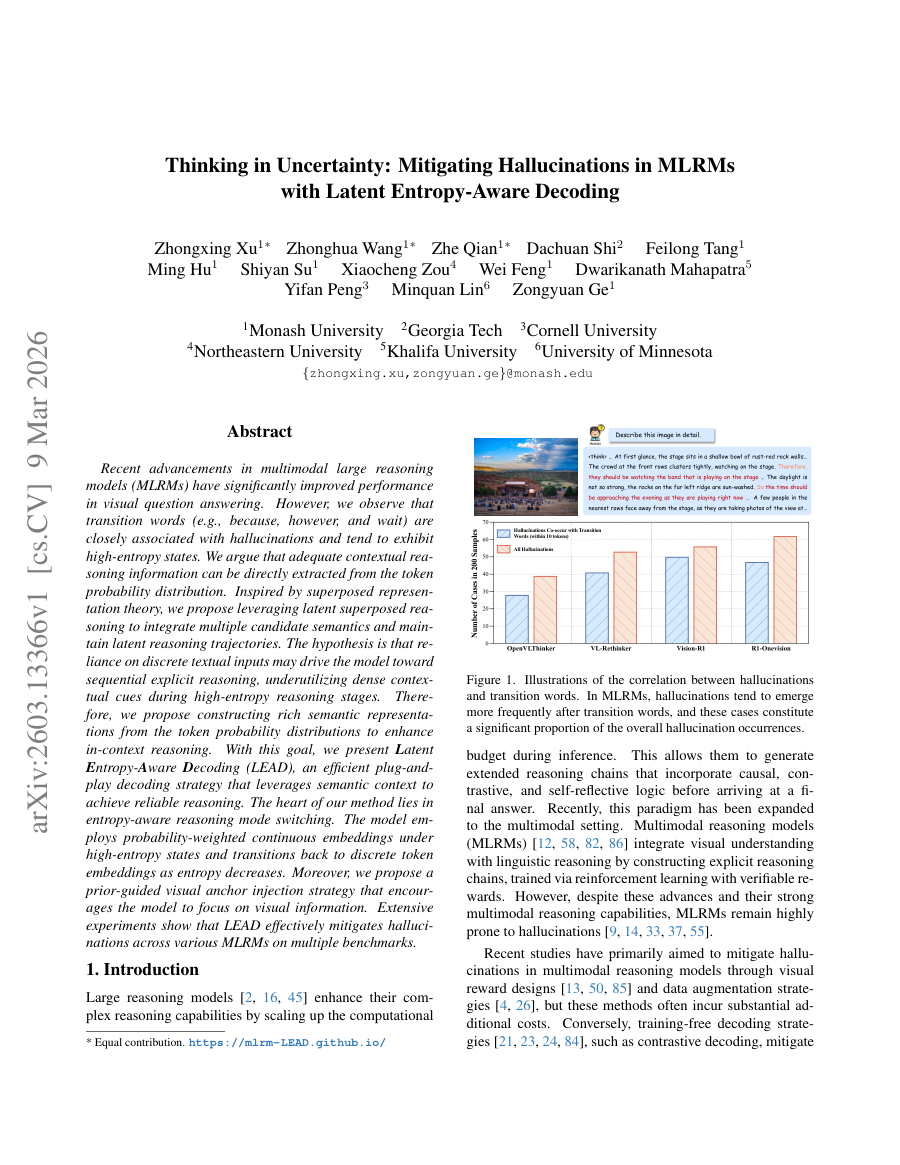

Thinking in Uncertainty: Mitigating Hallucinations in MLRMs with Latent Entropy-Aware Decoding

TL;DR: Recent advancements in multimodal large reasoning models (MLRMs) have significantly improved performance in visual question answering.

Recent advancements in multimodal large reasoning models (MLRMs) have significantly improved performance in visual question answering. However, we observe that transition words (e.g., because, however, and wait) are closely associated with hallucinations and tend to exhibit...

With this goal, we present Latent Entropy-Aware Decoding (LEAD), an efficient plug-and-play decoding strategy that leverages semantic context to achieve reliable reasoning.

Inspired by superposed representation theory, we propose leveraging latent superposed reasoning to integrate multiple candidate semantics and maintain latent reasoning trajectories.

The summary does not include concrete numbers, so the practical size of the gain and the tradeoff against latency or data cost are still unclear.
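Going by the method's name and the abstract excerpt, one plausible reading of LEAD is: monitor the entropy of the next-token distribution, and at high-entropy steps carry forward a probability-weighted mixture of the top candidate embeddings (a "superposed" representation) instead of committing to a single token. The Python sketch below illustrates only that reading; the entropy threshold, `top_k`, and the toy embeddings are hypothetical, and it is not LEAD's actual formulation.

```python
import math

def softmax(logits: list[float]) -> list[float]:
    """Numerically stable softmax."""
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]

def entropy(probs: list[float]) -> float:
    """Shannon entropy of a distribution, in nats."""
    return -sum(p * math.log(p) for p in probs if p > 0)

def next_input(logits: list[float], embeddings: list[list[float]],
               threshold: float = 0.5, top_k: int = 2) -> tuple[list[float], str]:
    """Choose the embedding fed to the next decoding step.

    Low entropy: commit to the argmax token's embedding ("discrete").
    High entropy: feed a probability-weighted mix of the top-k embeddings ("latent").
    The threshold and top_k values are hypothetical.
    """
    probs = softmax(logits)
    ranked = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)
    if entropy(probs) < threshold:
        return embeddings[ranked[0]], "discrete"
    top = ranked[:top_k]
    mass = sum(probs[i] for i in top)  # renormalise over the kept candidates
    dim = len(embeddings[0])
    mixed = [sum(probs[i] * embeddings[i][d] for i in top) / mass for d in range(dim)]
    return mixed, "latent"

# Hypothetical 3-token vocabulary with 2-d embeddings.
EMB = [[1.0, 0.0], [0.0, 1.0], [-1.0, -1.0]]
print(next_input([5.0, 0.0, 0.0], EMB))   # confident: commits to token 0
print(next_input([1.0, 1.0, -5.0], EMB))  # torn between tokens 0 and 1: mixes them
```

The design choice being illustrated is that committing to one token at an uncertain step discards the alternatives, while a mixed embedding keeps them in play for one more step; the cost is that the model must accept inputs that are not exact token embeddings.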

- Problem framing: transition words (e.g., because, however, and wait) are closely associated with hallucinations in MLRMs.

- Method signal: Inspired by superposed representation theory, we propose leveraging latent superposed reasoning to integrate multiple candidate semantics and maintain latent reasoning trajectories.

- Evidence to watch: whether LEAD's plug-and-play decoding measurably reduces hallucinations in visual question answering; the summary reports no concrete numbers.

- Read-through priority: the PDF is available on Hugging Face Papers / arXiv, so this is a good candidate for checking tables, ablations, and scaling tradeoffs beyond the abstract.

- Community traction: Hugging Face Papers shows 34 votes for this paper.

Everything selected into the run.

The complete analyzed stream for the issue, useful when you want to scan the entire run instead of only the curated front page.

OpenAI’s Frontier puts AI agents in a fight SaaS can’t afford to lose

When OpenAI launched Frontier in February, the announcement was described as a platform for enterprise AI agents. What it actually signalled was a challenge to the revenue architecture underpinning the software industry. Frontier is designed to act as a semantic layer in an...

OpenAI’s Frontier puts AI agents in a fight SaaS can’t afford to lose matters because it signals momentum in enterprise AI agents and may shift how teams prioritize models, tooling, or deployment choices.

- Primary signals: agent, agents, frontier.

- Source context: AI News published or updated this item on 03/16/2026.

Trustpilot partners with AI companies as traditional search declines

Trustpilot is reported to be pursuing partnerships with large eCommerce companies as AI-driven shopping gains traction. In an interview with Bloomberg News [paywall], chief executive Adrian Blair said that AI agents acting on behalf of consumers require lots of information...

Trustpilot partners with AI companies as traditional search declines matters because it signals momentum in AI agents and may shift how teams prioritize models, tooling, or deployment choices.

- Primary signals: agent, agents.

- Source context: AI News published or updated this item on 03/17/2026.

NVIDIA AI Releases Nemotron-Terminal: A Systematic Data Engineering Pipeline for Scaling LLM Terminal Agents

Coverage from MarkTechPost.

NVIDIA AI Releases Nemotron-Terminal: A Systematic Data Engineering Pipeline for Scaling LLM Terminal Agents matters because it signals momentum in LLM agents and may shift how teams prioritize models, tooling, or deployment choices.

- Primary signals: agent, agents, llm.

- Source context: MarkTechPost published or updated this item on 03/10/2026.

NTT DATA and NVIDIA bring enterprise AI factories to production scale

NTT DATA has announced an initiative to deliver NVIDIA-powered platforms designed to give organisations a repeatable, production-ready model for scaling AI. The offering integrates NVIDIA’s GPU-accelerated computing and high-performance networking with NVIDIA AI Enterprise...

NTT DATA and NVIDIA bring enterprise AI factories to production scale matters because it signals momentum in enterprise AI deployment and may shift how teams prioritize models, tooling, or deployment choices.

- Primary signals: agent, model.

- Source context: AI News published or updated this item on 03/16/2026.

FOD#144: New Scaling Law? What “Agentic Scaling” Is – Inside NVIDIA’s Biggest Idea at GTC 2026

Coverage from Turing Post.

FOD#144: New Scaling Law? What “Agentic Scaling” Is – Inside NVIDIA’s Biggest Idea at GTC 2026 matters because it signals momentum in AI agents and may shift how teams prioritize models, tooling, or deployment choices.

- Primary signals: agent.

- Source context: Turing Post published or updated this item on 03/17/2026.

Introducing GPT-5.4 mini and nano

A research post from OpenAI.

Introducing GPT-5.4 mini and nano matters because it signals momentum in GPT models and may shift how teams prioritize models, tooling, or deployment choices.

- Primary signals: gpt.

- Source context: OpenAI Research published or updated this item on 03/17/2026.

Nemotron 3 Nano 4B: A Compact Hybrid Model for Efficient Local AI

A Blog post by NVIDIA on Hugging Face

Nemotron 3 Nano 4B: A Compact Hybrid Model for Efficient Local AI matters because it signals momentum in compact models and may shift how teams prioritize models, tooling, or deployment choices.

- Primary signals: model.

- Source context: Hugging Face Blog published or updated this item on 03/17/2026.

7 Emerging Memory Architectures for AI Agents

Coverage from Turing Post.

7 Emerging Memory Architectures for AI Agents matters because it signals momentum in AI agents and may shift how teams prioritize models, tooling, or deployment choices.

- Primary signals: agent, agents.

- Source context: Turing Post published or updated this item on 03/15/2026.

Google AI Releases a CLI Tool (gws) for Workspace APIs: Providing a Unified Interface for Humans and AI Agents

Coverage from MarkTechPost.

Google AI Releases a CLI Tool (gws) for Workspace APIs: Providing a Unified Interface for Humans and AI Agents matters because it signals momentum in AI agents and may shift how teams prioritize models, tooling, or deployment choices.

- Primary signals: agent, agents.

- Source context: MarkTechPost published or updated this item on 03/05/2026.

The ‘Bayesian’ Upgrade: Why Google AI’s New Teaching Method is the Key to LLM Reasoning

The ‘Bayesian’ Upgrade: Why Google AI’s New Teaching Method is the Key to LLM Reasoning matters because it signals momentum in LLM reasoning and may shift how teams prioritize models, tooling, or deployment choices.

- Primary signals: llm, reasoning.

- Source context: MarkTechPost published or updated this item on 03/09/2026.

Google AI Introduces Gemini Embedding 2: A Multimodal Embedding Model that Lets You Bring Text, Images, Video, Audio, and Docs into the Embedding Space

Google AI Introduces Gemini Embedding 2: A Multimodal Embedding Model that Lets You Bring Text, Images, Video, Audio, and Docs into the Embedding Space matters because it signals momentum in multimodal models and may shift how teams prioritize models, tooling, or deployment choices.

- Primary signals: model, multimodal.

- Source context: MarkTechPost published or updated this item on 03/11/2026.

How multi-agent AI economics influence business automation

Managing the economics of multi-agent AI now dictates the financial viability of modern business automation workflows. Organisations progressing past standard chat interfaces into multi-agent applications face two primary constraints. The first issue is the thinking tax;...

How multi-agent AI economics influence business automation matters because it signals momentum in multi-agent systems and may shift how teams prioritize models, tooling, or deployment choices.

- Primary signals: agent, agents.

- Source context: AI News published or updated this item on 03/12/2026.

Identifying Interactions at Scale for LLMs

Understanding the behavior of complex machine learning systems, particularly Large Language Models (LLMs), is a critical challenge in modern artificial intelligence. Interpretability research aims to make the decision-making process more transparent to model builders and...

Identifying Interactions at Scale for LLMs matters because it signals momentum in LLM interpretability and may shift how teams prioritize models, tooling, or deployment choices.

- Primary signals: llm, model.

- Source context: BAIR Blog published or updated this item on 03/13/2026.

QuantumBlack: A Global Force in Agentic AI Transformation

QuantumBlack: A Global Force in Agentic AI Transformation matters because it signals momentum in agentic AI and may shift how teams prioritize models, tooling, or deployment choices.

- Primary signals: agent.

- Source context: AI Magazine published or updated this item on 03/16/2026.

Equipping workers with insights about compensation

Equipping workers with insights about compensation matters because it signals momentum in the broader AI ecosystem and may shift how teams prioritize models, tooling, or deployment choices.

- Primary signals: AI platforms and product execution.

- Source context: OpenAI Research published or updated this item on 03/17/2026.

Goldman Sachs sees AI investment shift to data centres

Artificial intelligence investment is entering a more selective phase as companies and investors look beyond early excitement and focus on the data centre infrastructure required to run AI systems. Recent analysis from Goldman Sachs suggests the market is moving toward what...

Goldman Sachs sees AI investment shift to data centres matters because it signals momentum in the broader AI ecosystem and may shift how teams prioritize models, tooling, or deployment choices.

- Primary signals: AI platforms and product execution.

- Source context: AI News published or updated this item on 03/17/2026.

Measuring AI agent autonomy in practice

Measuring AI agent autonomy in practice matters because it signals momentum in AI agents and may shift how teams prioritize models, tooling, or deployment choices.

- Primary signals: agent.

- Source context: Anthropic Research published or updated this item on 02/18/2026.

How exhaustive is the persona selection model?

“How exhaustive is the persona selection model?” matters because it signals momentum in model research and may shift how teams prioritize models, tooling, or deployment choices.

- Primary signals: model.

- Source context: Anthropic Research published or updated this item on 02/23/2026.

An update on our model deprecation commitments for Claude Opus 3

An update on our model deprecation commitments for Claude Opus 3 matters because it signals how model providers are approaching deprecation and may shift how teams prioritize models, tooling, or deployment choices.

- Primary signals: model.

- Source context: Anthropic Research published or updated this item on 02/25/2026.

Top 10: LLM Fine Tuning Tools

Top 10: LLM Fine Tuning Tools matters because it signals momentum in LLM fine-tuning and may shift how teams prioritize models, tooling, or deployment choices.

- Primary signals: llm.

- Source context: AI Magazine published or updated this item on 02/25/2026.

Inside Reflection AI: The $20B Open-Model Startup That Has Yet to Ship

Inside Reflection AI: The $20B Open-Model Startup That Has Yet to Ship matters because it signals momentum in open models and may shift how teams prioritize models, tooling, or deployment choices.

- Primary signals: model.

- Source context: Turing Post published or updated this item on 03/08/2026.

Ulysses Sequence Parallelism: Training with Million-Token Contexts

Ulysses Sequence Parallelism: Training with Million-Token Contexts matters because it signals momentum in long-context training and may shift how teams prioritize models, tooling, or deployment choices.

- Primary signals: training.

- Source context: Hugging Face Blog published or updated this item on 03/09/2026.

New ways to learn math and science in ChatGPT

New ways to learn math and science in ChatGPT matters because it signals momentum in ChatGPT’s education features and may shift how teams prioritize models, tooling, or deployment choices.

- Primary signals: gpt.

- Source context: OpenAI Research published or updated this item on 03/10/2026.

An AI agent hacked McKinsey's internal AI platform in two hours using a decades-old technique

An AI agent hacked McKinsey's internal AI platform in two hours using a decades-old technique matters because it signals momentum in AI agents and may shift how teams prioritize models, tooling, or deployment choices.

- Primary signals: agent.

- Source context: The Decoder published or updated this item on 03/11/2026.

Deloitte: Why Business Agility is Central to AI Adoption

Deloitte: Why Business Agility is Central to AI Adoption matters because it signals momentum in the broader AI ecosystem and may shift how teams prioritize models, tooling, or deployment choices.

- Primary signals: AI platforms and product execution.

- Source context: AI Magazine published or updated this item on 03/16/2026.

Where OpenAI’s technology could show up in Iran

Where OpenAI’s technology could show up in Iran matters because it signals momentum in the broader AI ecosystem and may shift how teams prioritize models, tooling, or deployment choices.

- Primary signals: AI platforms and product execution.

- Source context: MIT Tech Review AI published or updated this item on 03/16/2026.

AI 101: OpenClaw Explained + lightweight alternatives

AI 101: OpenClaw Explained + lightweight alternatives matters because it signals momentum in the broader AI ecosystem and may shift how teams prioritize models, tooling, or deployment choices.

- Primary signals: AI platforms and product execution.

- Source context: Turing Post published or updated this item on 02/19/2026.

Anthropic Education Report: The AI Fluency Index

Anthropic Education Report: The AI Fluency Index matters because it signals momentum in the broader AI ecosystem and may shift how teams prioritize models, tooling, or deployment choices.

- Primary signals: AI platforms and product execution.

- Source context: Anthropic Research published or updated this item on 02/23/2026.

AI Drug Discovery: How Roche Accelerates Health Innovation

AI Drug Discovery: How Roche Accelerates Health Innovation matters because it signals momentum in the broader AI ecosystem and may shift how teams prioritize models, tooling, or deployment choices.

- Primary signals: AI platforms and product execution.

- Source context: AI Magazine published or updated this item on 02/26/2026.

Freeport-McMoRan Uses AI to Transform Mining Operations

Freeport-McMoRan Uses AI to Transform Mining Operations matters because it signals momentum in the broader AI ecosystem and may shift how teams prioritize models, tooling, or deployment choices.

- Primary signals: AI platforms and product execution.

- Source context: AI Magazine published or updated this item on 02/26/2026.

AI is rewiring how the world’s best Go players think

AI is rewiring how the world’s best Go players think matters because it signals momentum in the broader AI ecosystem and may shift how teams prioritize models, tooling, or deployment choices.

- Primary signals: AI platforms and product execution.

- Source context: MIT Tech Review AI published or updated this item on 02/27/2026.

Labor market impacts of AI: A new measure and early evidence

Labor market impacts of AI: A new measure and early evidence matters because it signals momentum in the broader AI ecosystem and may shift how teams prioritize models, tooling, or deployment choices.

- Primary signals: AI platforms and product execution.

- Source context: Anthropic Research published or updated this item on 03/05/2026.

Granite 4.0 1B Speech: Compact, Multilingual, and Built for the Edge

A Blog post by IBM Granite on Hugging Face

Granite 4.0 1B Speech: Compact, Multilingual, and Built for the Edge matters because it signals momentum in the broader AI ecosystem and may shift how teams prioritize models, tooling, or deployment choices.

- Primary signals: AI platforms and product execution.

- Source context: Hugging Face Blog published or updated this item on 03/09/2026.

LeRobot v0.5.0: Scaling Every Dimension

LeRobot v0.5.0: Scaling Every Dimension matters because it signals momentum in the broader AI ecosystem and may shift how teams prioritize models, tooling, or deployment choices.

- Primary signals: AI platforms and product execution.

- Source context: Hugging Face Blog published or updated this item on 03/09/2026.

OpenAI to acquire Promptfoo

OpenAI to acquire Promptfoo matters because it signals momentum in the broader AI ecosystem and may shift how teams prioritize models, tooling, or deployment choices.

- Primary signals: AI platforms and product execution.

- Source context: OpenAI Research published or updated this item on 03/09/2026.

How Pokémon Go is giving delivery robots an inch-perfect view of the world

How Pokémon Go is giving delivery robots an inch-perfect view of the world matters because it signals momentum in the broader AI ecosystem and may shift how teams prioritize models, tooling, or deployment choices.

- Primary signals: AI platforms and product execution.

- Source context: MIT Tech Review AI published or updated this item on 03/10/2026.

Introducing Storage Buckets on the Hugging Face Hub

Introducing Storage Buckets on the Hugging Face Hub matters because it signals momentum in the broader AI ecosystem and may shift how teams prioritize models, tooling, or deployment choices.

- Primary signals: AI platforms and product execution.

- Source context: Hugging Face Blog published or updated this item on 03/10/2026.

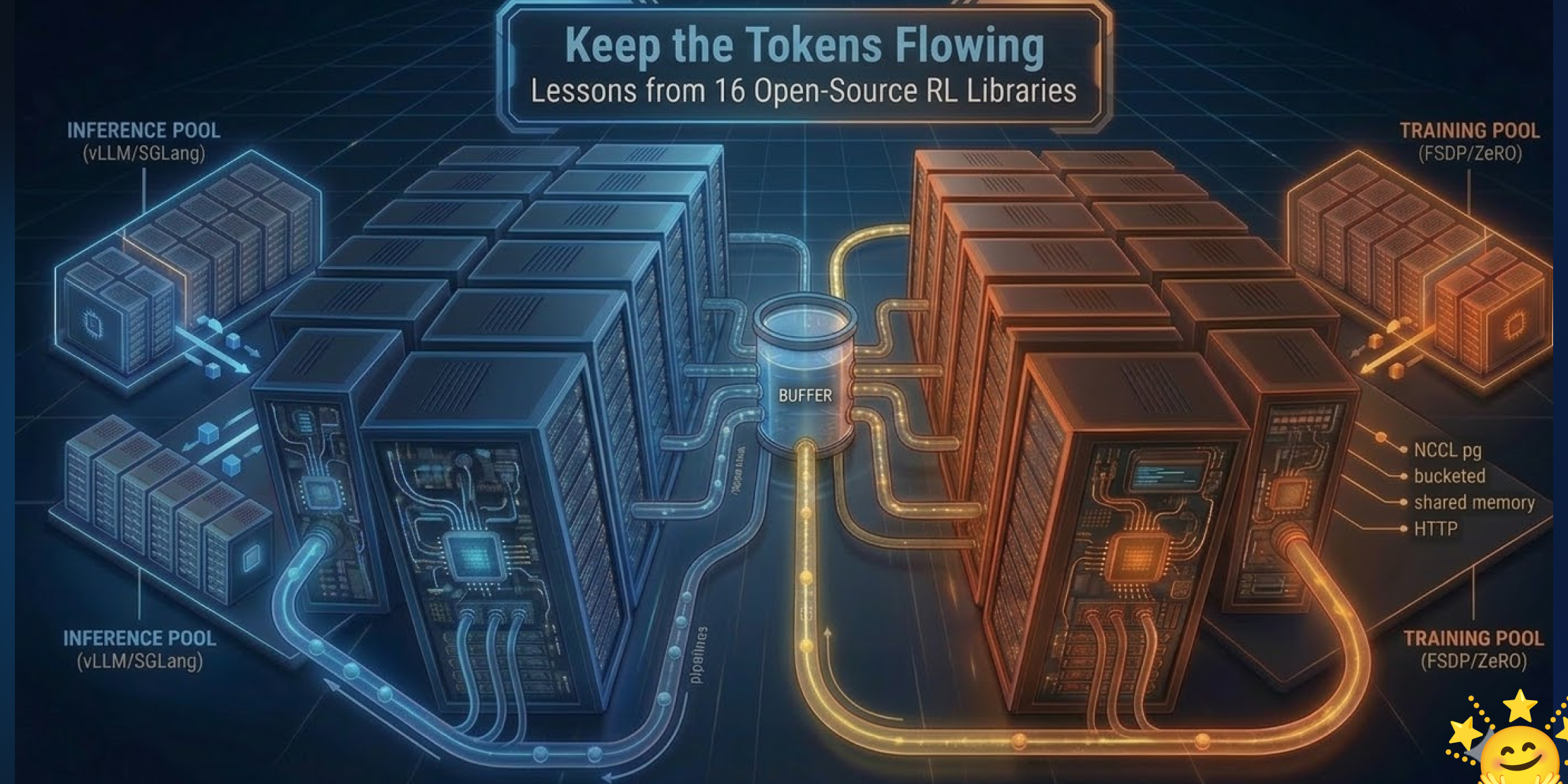

Keep the Tokens Flowing: Lessons from 16 Open-Source RL Libraries

Keep the Tokens Flowing: Lessons from 16 Open-Source RL Libraries matters because it signals momentum in the broader AI ecosystem and may shift how teams prioritize models, tooling, or deployment choices.

- Primary signals: AI platforms and product execution.

- Source context: Hugging Face Blog published or updated this item on 03/10/2026.

Startup claims first full brain emulation of a fruit fly in a simulated body

Startup claims first full brain emulation of a fruit fly in a simulated body matters because it signals momentum in the broader AI ecosystem and may shift how teams prioritize models, tooling, or deployment choices.

- Primary signals: AI platforms and product execution.

- Source context: The Decoder published or updated this item on 03/10/2026.

E.SUN Bank and IBM build AI governance framework for banking

E.SUN Bank is working with IBM to build clearer AI governance rules for how artificial intelligence can be used inside a bank. The effort reflects a wider shift in finance. Many firms already use AI for fraud checks and credit scoring, and some also use it to handle customer...

E.SUN Bank and IBM build AI governance framework for banking matters because it signals momentum in the broader AI ecosystem and may shift how teams prioritize models, tooling, or deployment choices.

- Primary signals: AI platforms and product execution.

- Source context: AI News published or updated this item on 03/13/2026.

Holotron-12B - High Throughput Computer Use Agent

A Blog post by H company on Hugging Face

Holotron-12B - High Throughput Computer Use Agent matters because it affects the policy, supply-chain, or security constraints around AI development, especially around compute and AI agents.

- Primary signals: compute, agent.

- Source context: Hugging Face Blog published or updated this item on 03/17/2026.

State of Open Source on Hugging Face: Spring 2026

A Blog post by Hugging Face on Hugging Face

State of Open Source on Hugging Face: Spring 2026 matters because it affects the policy, supply-chain, or security constraints around AI development, especially the health of the open-source ecosystem.

- Primary signals: state.

- Source context: Hugging Face Blog published or updated this item on 03/17/2026.

The Pentagon is planning for AI companies to train on classified data, defense official says

The Pentagon is planning for AI companies to train on classified data, defense official says matters because it affects the policy, supply-chain, or security constraints around AI development, especially in defense.

- Primary signals: defense.

- Source context: MIT Tech Review AI published or updated this item on 03/17/2026.

A defense official reveals how AI chatbots could be used for targeting decisions

A defense official reveals how AI chatbots could be used for targeting decisions matters because it affects the policy, supply-chain, or security constraints around AI development, especially in defense and military chatbot use.

- Primary signals: defense, chatbot.

- Source context: MIT Tech Review AI published or updated this item on 03/12/2026.

BMW puts humanoid robots to work in Germany–and Europe’s factories are watching

Europe’s factory floors have a new kind of colleague. BMW Group has deployed humanoid robots in manufacturing in Germany for the first time, launching a pilot project at its Leipzig plant with AEON–a wheeled humanoid built by Hexagon Robotics. It is the first automotive...

BMW puts humanoid robots to work in Germany–and Europe’s factories are watching matters because it affects the policy, supply-chain, or security constraints around AI development, especially in Europe and in robotics.

- Primary signals: europe, robotics.

- Source context: AI News published or updated this item on 03/13/2026.

China pushes OpenClaw "one-person companies" with millions in AI agent subsidies

China pushes OpenClaw "one-person companies" with millions in AI agent subsidies matters because it affects the policy, supply-chain, or security constraints around AI development, especially in China and around AI agents.

- Primary signals: china, agent.

- Source context: The Decoder published or updated this item on 03/14/2026.

Hua Hong becomes the second Chinese chipmaker to crack 7nm manufacturing as Beijing pushes for AI independence

Hua Hong becomes the second Chinese chipmaker to crack 7nm manufacturing as Beijing pushes for AI independence matters because it affects the policy, supply-chain, or security constraints around AI development, especially around chip manufacturing.

- Primary signals: chip.

- Source context: The Decoder published or updated this item on 03/16/2026.

US Treasury publishes AI risk Guidebook for financial institutions

The US Treasury has published several documents designed for the US financial services sector that suggest a structured approach to managing AI risks in operations and policy (see subheading ‘Resources and Downloads’ towards the bottom of the link). The CRI Financial Services...

US Treasury publishes AI risk Guidebook for financial institutions matters because it affects the policy, supply-chain, or security constraints around AI development, especially in financial-sector AI policy.

- Primary signals: policy.

- Source context: AI News published or updated this item on 03/16/2026.

Why Codex Security Doesn’t Include a SAST Report

Why Codex Security Doesn’t Include a SAST Report matters because it affects the policy, supply-chain, or security constraints around AI development, especially in software security.

- Primary signals: security.

- Source context: OpenAI Research published or updated this item on 03/16/2026.

MiroThinker-1.7 & H1: Towards Heavy-Duty Research Agents via Verification

TL;DR: MiroThinker-1.7 and MiroThinker-H1 are research agents that enhance complex reasoning through structured planning, contextual reasoning, and tool interaction, with MiroThinker-H1 incorporating verification at local and global levels for more reliable multi-step problem...

We present MiroThinker-1.7, a new research agent designed for complex long-horizon reasoning tasks.

In particular, MiroThinker-1.7 improves the reliability of each interaction step through an agentic mid-training stage that emphasizes structured planning, contextual reasoning, and tool interaction.

The reported improvement still needs a closer check on benchmark scope, ablations, and whether the method keeps working outside the authors' evaluation setup.

- Problem framing: MiroThinker-1.7 and MiroThinker-H1 are research agents that enhance complex reasoning through structured planning, contextual reasoning, and tool interaction, with MiroThinker-H1 incorporating verification at local and global levels for...

- Method signal: We present MiroThinker-1.7, a new research agent designed for complex long-horizon reasoning tasks.

- Evidence to watch: In particular, MiroThinker-1.7 improves the reliability of each interaction step through an agentic mid-training stage that emphasizes structured planning, contextual reasoning, and tool interaction.

- Read-through priority: the PDF is available, so this is a good candidate for checking tables, ablations, and scaling tradeoffs beyond the abstract from Hugging Face Papers / arXiv.

- Community traction: Hugging Face Papers shows 53 votes for this paper.

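The summary says MiroThinker-H1 adds verification "at local and global levels" but does not say how. Below is a minimal sketch of one plausible reading. It assumes a local verifier that accepts or rejects each proposed step and a global verifier that checks the whole trajectory before the agent stops. `propose`, `local_check`, and `global_check` are hypothetical callables, not MiroThinker's API.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Optional


@dataclass
class Trajectory:
    """Accepted steps of a hypothetical agent run."""
    steps: List[str] = field(default_factory=list)


def run_with_verification(
    propose: Callable[[Trajectory, int], str],
    local_check: Callable[[Trajectory, str], bool],
    global_check: Callable[[Trajectory], bool],
    max_steps: int = 5,
    max_retries: int = 3,
) -> Optional[Trajectory]:
    """Accept a step only if it passes local verification; stop once the
    whole trajectory passes global verification (assumed semantics)."""
    traj = Trajectory()
    for _ in range(max_steps):
        for attempt in range(max_retries):
            candidate = propose(traj, attempt)
            if local_check(traj, candidate):
                traj.steps.append(candidate)
                break
        else:
            return None  # every retry failed local verification
        if global_check(traj):
            return traj  # trajectory-level check passed
    return None  # step budget exhausted without passing the global check


# Toy task: accept only even numbers, finish once they sum to at least 6.
def propose(traj: Trajectory, attempt: int) -> str:
    return str(attempt + 1)  # first try "1", then "2", ...


result = run_with_verification(
    propose,
    local_check=lambda traj, cand: int(cand) % 2 == 0,
    global_check=lambda traj: sum(int(s) for s in traj.steps) >= 6,
)
print(result.steps if result else None)  # ['2', '2', '2']
```

Separating the two checks lets a cheap per-step verifier prune bad steps early, while the global check decides when the answer is complete. The excerpt does not say whether H1 is structured this way.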

InCoder-32B: Code Foundation Model for Industrial Scenarios

TL;DR: InCoder-32B is a 32-billion-parameter code model trained on industrial datasets with extended context length and execution verification to improve performance in hardware-aware programming tasks.

Recent code large language models have achieved remarkable progress on general...

To address these challenges, we introduce InCoder-32B (Industrial-Coder-32B), the first 32B-parameter code foundation model unifying code intelligence across chip design, GPU kernel optimization, embedded systems, compiler optimization, and 3D modeling.

The reported improvement still needs a closer check on benchmark scope, ablations, and whether the method keeps working outside the authors' evaluation setup.

- Problem framing: InCoder-32B is a 32-billion-parameter code model trained on industrial datasets with extended context length and execution verification to improve performance in hardware-aware programming tasks.

- Method signal: To address these challenges, we introduce InCoder-32B (Industrial-Coder-32B), the first 32B-parameter code foundation model unifying code intelligence across chip design, GPU kernel optimization, embedded systems, compiler optimization, and...

- Evidence to watch: evaluation on 14 general and 9 industrial benchmarks, where the model is reported to be competitive on general code tasks and to set open-source baselines for industrial ones.

- Read-through priority: the PDF is available, so this is a good candidate for checking tables, ablations, and scaling tradeoffs beyond the abstract from Hugging Face Papers / arXiv.

- Community traction: Hugging Face Papers shows 74 votes for this paper.

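The summary credits InCoder-32B's post-training with execution-grounded verification but gives no pipeline details. A common form of that idea keeps only generated programs that actually run and pass tests. The minimal sketch below assumes Python candidates and a hypothetical embedded-style task (`align_up`, rounding up to a power-of-two alignment). It is not the authors' pipeline, which would target hardware languages and sandbox execution.

```python
from typing import Any, Dict, List, Tuple

# Hypothetical task: round n up to a power-of-two alignment a.
TESTS: List[Tuple[Tuple[int, int], int]] = [
    ((5, 4), 8),
    ((8, 4), 8),
    ((0, 16), 0),
    ((17, 16), 32),
]

CANDIDATES: List[str] = [
    "def align_up(n, a):\n    return (n + a - 1) & ~(a - 1)\n",  # correct
    "def align_up(n, a):\n    return n & ~(a - 1)\n",  # rounds down: wrong
    "def align_up(n, a) return n\n",  # syntax error
]


def passes_tests(source: str, func_name: str, tests) -> bool:
    """Execute a candidate and check every (args, expected) pair.
    NOTE: plain exec() with no sandbox; real pipelines isolate execution."""
    namespace: Dict[str, Any] = {}
    try:
        exec(source, namespace)
        func = namespace[func_name]
        return all(func(*args) == expected for args, expected in tests)
    except Exception:
        return False  # syntax errors, missing function, runtime errors


def filter_verified(candidates: List[str], func_name: str, tests) -> List[str]:
    """Keep only candidates whose execution matches every test."""
    return [src for src in candidates if passes_tests(src, func_name, tests)]


verified = filter_verified(CANDIDATES, "align_up", TESTS)
print(len(verified))  # 1
```

Filtering on execution rather than on textual similarity to a reference rejects the plausible-looking but wrong second candidate, which is the reliability gain verification is meant to buy.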

Demystifying Video Reasoning

TL;DR: Diffusion-based video models demonstrate reasoning capabilities through denoising steps rather than frame sequences, exhibiting behaviors like working memory, self-correction, and perception-before-action within specialized transformer layers.

Recent advances in video...

In this work, we challenge this assumption and uncover a fundamentally different mechanism.

Motivated by these insights, we present a simple training-free strategy as a proof-of-concept, demonstrating how reasoning can be improved by ensembling latent trajectories from identical models with different random seeds.

The summary does not include concrete numbers, so the practical size of the gain and the tradeoff against latency or data cost are still unclear.

- Problem framing: In this work, we challenge this assumption and uncover a fundamentally different mechanism.

- Method signal: Motivated by these insights, we present a simple training-free strategy as a proof-of-concept, demonstrating how reasoning can be improved by ensembling latent trajectories from identical models with different random seeds.

- Evidence to watch: Diffusion-based video models demonstrate reasoning capabilities through denoising steps rather than frame sequences, exhibiting behaviors like working memory, self-correction, and perception-before-action within specialized transformer...

- Read-through priority: the PDF is available, so this is a good candidate for checking tables, ablations, and scaling tradeoffs beyond the abstract from Hugging Face Papers / arXiv.

- Community traction: Hugging Face Papers shows 47 votes for this paper.

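The summary describes a training-free proof of concept that improves reasoning by "ensembling latent trajectories from identical models with different random seeds." Below is a minimal sketch under two stated assumptions. First, ensembling here means averaging the latents across seeds after every denoising step. Second, a toy denoiser (`toy_denoise_step`, hypothetical) stands in for the video model.

```python
import random
from typing import List

Latent = List[float]


def toy_denoise_step(z: Latent, target: Latent, t: int, rng: random.Random) -> Latent:
    """Stand-in for one denoising step: move halfway toward a fixed target
    and add seed-dependent noise whose scale shrinks as t decreases."""
    scale = 0.1 * t
    return [zi + 0.5 * (ti - zi) + rng.gauss(0.0, scale) for zi, ti in zip(z, target)]


def ensembled_sample(target: Latent, seeds: List[int], steps: int = 10) -> Latent:
    """Run one trajectory per seed with the same (toy) model and replace every
    trajectory with the cross-seed mean after each step."""
    rngs = [random.Random(s) for s in seeds]
    latents = [[rng.gauss(0.0, 1.0) for _ in target] for rng in rngs]
    for t in range(steps, 0, -1):
        latents = [toy_denoise_step(z, target, t, rng) for z, rng in zip(latents, rngs)]
        mean = [sum(vals) / len(vals) for vals in zip(*latents)]
        latents = [list(mean) for _ in latents]
    return latents[0]


sample = ensembled_sample([1.0, -2.0, 0.5], seeds=list(range(8)))
print([round(v, 2) for v in sample])
```

In the toy, averaging suppresses seed-specific noise. The summary does not say whether the authors average at every step, at selected steps, or only at the end; the paper's ablations would settle that.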

WorldCam: Interactive Autoregressive 3D Gaming Worlds with Camera Pose as a Unifying Geometric Representation

TL;DR: Video diffusion transformers enhanced with camera pose representation enable precise action control and long-term 3D consistency in interactive gaming environments through physics-based action spaces and geometric grounding.

Recent advances in video diffusion transformers...

In this paper, we establish camera pose as a unifying geometric representation to jointly ground immediate action control and long-term 3D consistency.

Extensive experiments show that our approach substantially outperforms state-of-the-art interactive gaming world models in action controllability, long-horizon visual quality, and 3D spatial consistency.

The reported improvement still needs a closer check on benchmark scope, ablations, and whether the method keeps working outside the authors' evaluation setup.

- Problem framing: Video diffusion transformers enhanced with camera pose representation enable precise action control and long-term 3D consistency in interactive gaming environments through physics-based action spaces and geometric grounding.

- Method signal: In this paper, we establish camera pose as a unifying geometric representation to jointly ground immediate action control and long-term 3D consistency.

- Evidence to watch: Extensive experiments show that our approach substantially outperforms state-of-the-art interactive gaming world models in action controllability, long-horizon visual quality, and 3D spatial consistency.

- Read-through priority: the PDF is available, so this is a good candidate for checking tables, ablations, and scaling tradeoffs beyond the abstract from Hugging Face Papers / arXiv.

- Community traction: Hugging Face Papers shows 36 votes for this paper.

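The abstract says WorldCam uses camera pose to ground both immediate action control and long-term 3D consistency. The minimal sketch below covers the first half: each action becomes a pose update, and the resulting pose sequence is what a pose-conditioned generator would consume. It assumes a hypothetical keyboard action space (`ACTIONS`) and a simplified ground-plane pose (x, z, yaw), not a full 6-DoF pose or the paper's physics-based actions.

```python
import math
from dataclasses import dataclass
from typing import Dict, List, Tuple


@dataclass(frozen=True)
class Pose:
    x: float
    z: float
    yaw: float  # radians; 0 faces +z, positive yaw turns right (toward +x)


# Hypothetical action space: (strafe, forward, yaw_delta) in the camera frame.
ACTIONS: Dict[str, Tuple[float, float, float]] = {
    "W": (0.0, 1.0, 0.0),
    "S": (0.0, -1.0, 0.0),
    "A": (-1.0, 0.0, 0.0),
    "D": (1.0, 0.0, 0.0),
    "turn_left": (0.0, 0.0, -math.pi / 2),
    "turn_right": (0.0, 0.0, math.pi / 2),
}


def apply_action(p: Pose, action: str, step: float = 1.0) -> Pose:
    """Rotate first, then translate along the camera's new forward/right axes."""
    strafe, fwd, dyaw = ACTIONS[action]
    yaw = p.yaw + dyaw
    forward = (math.sin(yaw), math.cos(yaw))
    right = (math.cos(yaw), -math.sin(yaw))
    dx = step * (fwd * forward[0] + strafe * right[0])
    dz = step * (fwd * forward[1] + strafe * right[1])
    return Pose(p.x + dx, p.z + dz, yaw)


def rollout(actions: List[str], start: Pose = Pose(0.0, 0.0, 0.0)) -> List[Pose]:
    """Camera trajectory for an action sequence (one pose per frame)."""
    poses = [start]
    for a in actions:
        poses.append(apply_action(poses[-1], a))
    return poses


# Walk forward, turn around, walk back: the last pose revisits the start.
path = rollout(["W", "turn_right", "turn_right", "W"])
end = path[-1]
print(round(end.x, 6), round(end.z, 6))  # 0.0 0.0
```

Revisited locations map to matching poses, so a generator conditioned on pose can in principle reuse earlier content for the same viewpoint. That is the intuition behind pose-based long-horizon consistency; the summary does not describe the paper's actual conditioning or memory mechanism.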

Thinking in Uncertainty: Mitigating Hallucinations in MLRMs with Latent Entropy-Aware Decoding

TL;DR: Recent advancements in multimodal large reasoning models (MLRMs) have significantly improved performance in visual question answering.

Recent advancements in multimodal large reasoning models (MLRMs) have significantly improved performance in visual question answering. However, we observe that transition words (e.g., because, however, and wait) are closely associated with hallucinations and tend to exhibit...

With this goal, we present Latent Entropy-Aware Decoding (LEAD), an efficient plug-and-play decoding strategy that leverages semantic context to achieve reliable reasoning.

Inspired by superposed representation theory, we propose leveraging latent superposed reasoning to integrate multiple candidate semantics and maintain latent reasoning trajectories.
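The excerpt does not spell out LEAD's decoding rule. The sketch below only illustrates the general idea of entropy-aware decoding under stated assumptions: it measures next-token entropy and, when that entropy crosses a threshold, feeds a probability-weighted mixture of the top-k token embeddings instead of committing to a single token. This soft-embedding mixture is a stand-in for "latent superposed reasoning", not the authors' method; `next_input`, `threshold`, and `top_k` are hypothetical names.

```python
import math


def softmax(logits):
    """Numerically stable softmax over a list of floats."""
    m = max(logits)
    exps = [math.exp(v - m) for v in logits]
    total = sum(exps)
    return [e / total for e in exps]


def entropy(probs):
    """Shannon entropy of a distribution, in nats."""
    return -sum(p * math.log(p) for p in probs if p > 0)


def next_input(logits, embeddings, threshold=1.0, top_k=3):
    """Choose the next-step input embedding, gated on next-token entropy.

    Low entropy: commit to the argmax token, as ordinary greedy decoding would.
    High entropy: return a probability-weighted mix of the top-k token embeddings,
    a 'superposed' latent that keeps several candidate continuations in play.
    """
    probs = softmax(logits)
    if entropy(probs) < threshold:
        best = max(range(len(probs)), key=probs.__getitem__)
        return list(embeddings[best]), "token"
    top = sorted(range(len(probs)), key=probs.__getitem__, reverse=True)[:top_k]
    mass = sum(probs[i] for i in top)  # renormalise over the kept candidates
    dim = len(embeddings[0])
    mixed = [sum(probs[i] / mass * embeddings[i][d] for i in top) for d in range(dim)]
    return mixed, "superposed"
```

Gating on entropy means only uncertain steps pay the cost of mixing; if the authors' observation about transition words holds, those would be natural places for the gate to fire.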

The summary does not include concrete numbers, so the practical size of the gain and the tradeoff against latency or data cost are still unclear.

- Problem framing: the authors observe that transition words (e.g., because, however, and wait) are closely associated with hallucinations in MLRM reasoning.

- Method signal: inspired by superposed representation theory, the authors propose latent superposed reasoning, which integrates multiple candidate semantics and maintains latent reasoning trajectories; LEAD itself is presented as an efficient plug-and-play decoding strategy that leverages semantic context to achieve reliable reasoning.

- Evidence to watch: the excerpt gives no quantitative results; its only performance statement concerns MLRMs in general (improved visual question answering), not LEAD itself.

- Read-through priority: the PDF is available on Hugging Face Papers / arXiv, so this is a good candidate for checking tables, ablations, and scaling tradeoffs beyond the abstract.

- Community traction: Hugging Face Papers shows 34 votes for this paper.

Issue

- 03/18/2026

- 55 total analyzed
