Daily Edition

The expanded edition keeps the full analyst notes, paper breakdowns, geopolitical framing, and the complete feed selected into this run.

Topic of the day.

A dedicated daily topic chosen from the strongest signals in the run, with TL;DR, why-now framing, and a fuller analyst read.

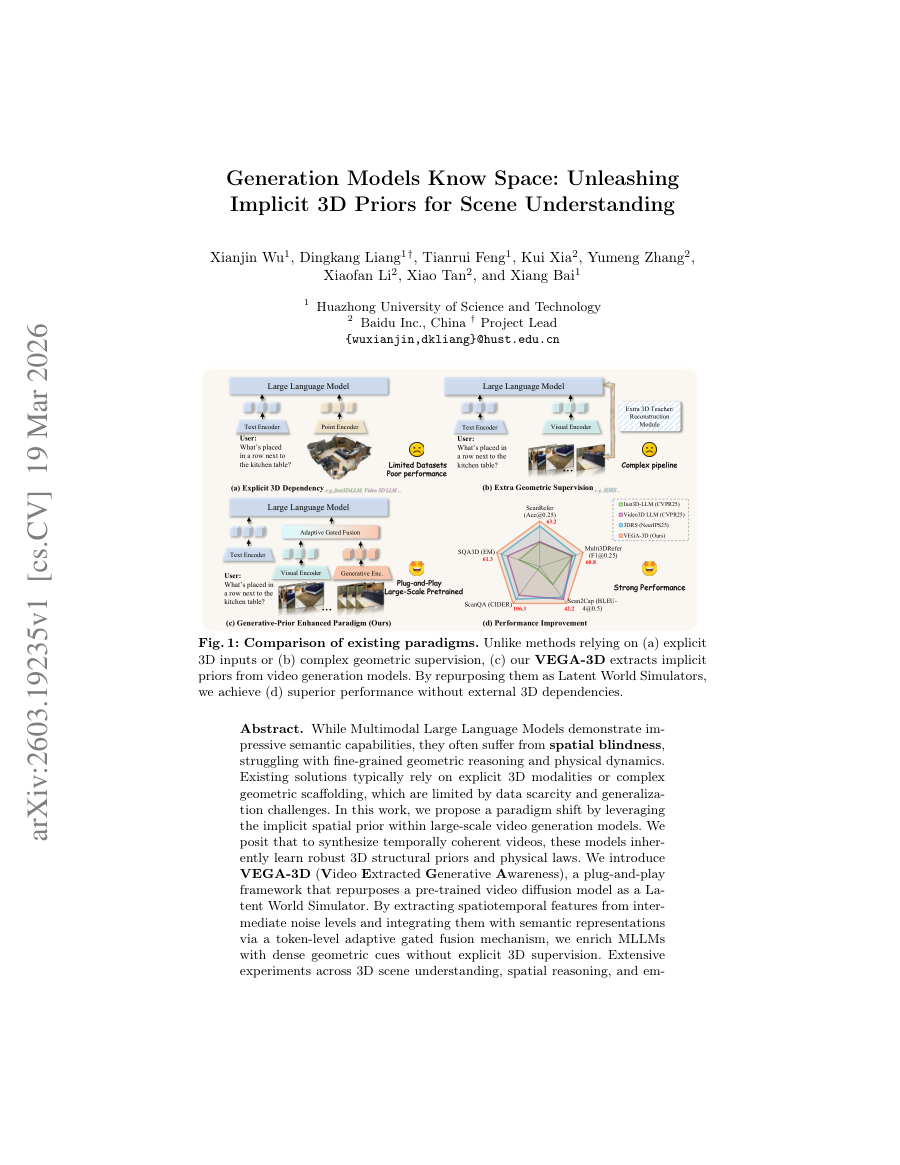

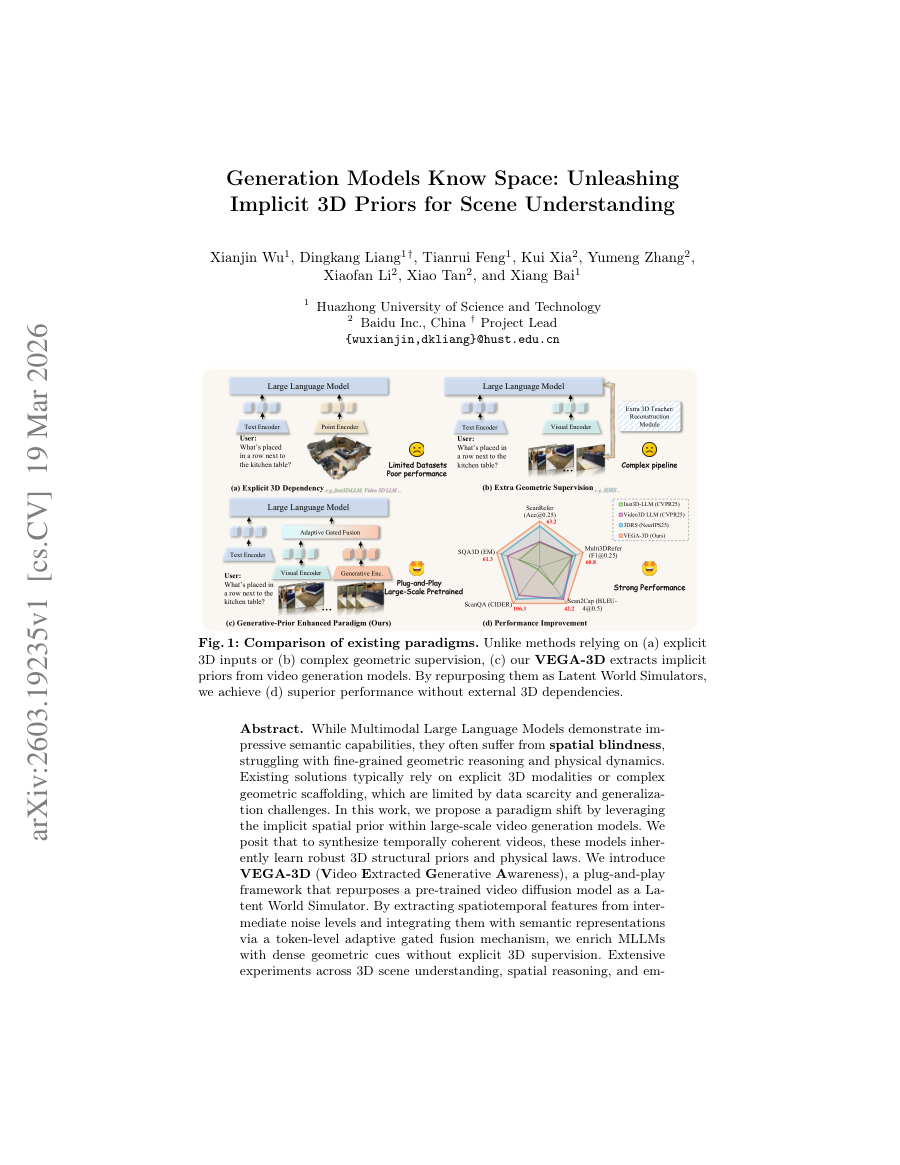

Implicit 3D Priors from Video Diffusion Models for Enhanced Scene Understanding

TL;DR: Video diffusion models implicitly learn 3D structure and physics; repurposing them as latent world simulators equips MLLMs with rich geometric cues without explicit 3D supervision.

Why now: Two recent arXiv papers (Mar 19, 2026) demonstrate that video generative priors can be extracted and fused with language models to close the spatial‑reasoning gap in MLLMs, a timely advance as embodied AI and robotics demand richer world models.

VEGA‑3D shows that spatiotemporal features from intermediate diffusion noise levels, when fused via token‑level gated mechanisms, significantly improve 3D scene understanding and manipulation benchmarks. FASTER complements this by proving that the same generative priors can be harnessed for low‑latency action generation, indicating a unified pathway from perception to action. Together they suggest that scaling video diffusion models offers a data‑efficient route to inject physical world knowledge into foundation models.

- Video diffusion models learn implicit 3D structural priors and physical laws necessary for temporal coherence.

- VEGA‑3D extracts spatiotemporal features at multiple noise levels and integrates them with semantic tokens via adaptive gated fusion.

- FASTER introduces a Horizon‑Aware Schedule that compresses immediate‑action denoising, cutting reaction latency tenfold while preserving long‑horizon trajectory quality.

- Both approaches require no additional 3D‑specific training data, leveraging existing large‑scale video generative checkpoints.

- Generation Models Know Space: Unleashing Implicit 3D Priors for Scene Understanding (Hugging Face Papers / arXiv | 03/19/2026)

- FASTER: Rethinking Real-Time Flow VLAs (Hugging Face Papers / arXiv | 03/19/2026)

Policy, chips, capital, and power.

Industrial strategy, compute supply, export controls, and big-company positioning shaping the AI balance of power.

Where OpenAI’s technology could show up in Iran

Analysts speculate on potential channels through which OpenAI’s models or derived technologies could reach Iranian entities despite existing sanctions, highlighting the challenges of enforcing AI export controls.

The diffusion of advanced generative AI could accelerate disinformation, cyber‑offensive capabilities, or indigenous AI development in sanctioned states, complicating non

- Examines possible routes via third‑party cloud providers, open‑weight model releases, and academic collaborations.

- Highlights the difficulty of monitoring model fine‑tuning and derivative work across jurisdictional boundaries.

- Suggests tightening of compute‑export licensing and heightened scrutiny of API usage patterns as mitigation.

Holotron-12B - High Throughput Computer Use Agent

A Blog post by H company on Hugging Face

Holotron-12B - High Throughput Computer Use Agent matters because it affects the policy, supply-chain, or security constraints around AI development, especially across compute, agent.

- Primary signals: compute, agent.

- Source context: Hugging Face Blog published or updated this item on 03/17/2026.

A defense official reveals how AI chatbots could be used for targeting decisions

A defense official reveals how AI chatbots could be used for targeting decisions MIT Technology Review

A defense official reveals how AI chatbots could be used for targeting decisions matters because it affects the policy, supply-chain, or security constraints around AI development, especially across defense, chatbot.

- Primary signals: defense, chatbot.

- Source context: MIT Tech Review AI published or updated this item on 03/12/2026.

State of Open Source on Hugging Face: Spring 2026

A Blog post by Hugging Face on Hugging Face

State of Open Source on Hugging Face: Spring 2026 matters because it affects the policy, supply-chain, or security constraints around AI development, especially across state.

- Primary signals: state.

- Source context: Hugging Face Blog published or updated this item on 03/17/2026.

Hua Hong becomes the second Chinese chipmaker to crack 7nm manufacturing as Beijing pushes for AI independence

Hua Hong becomes the second Chinese chipmaker to crack 7nm manufacturing as Beijing pushes for AI independence the-decoder.com

Hua Hong becomes the second Chinese chipmaker to crack 7nm manufacturing as Beijing pushes for AI independence matters because it affects the policy, supply-chain, or security constraints around AI development, especially across chip.

- Primary signals: chip.

- Source context: The Decoder published or updated this item on 03/16/2026.

Product, model, and platform movement.

Software, model, deployment, and competitive stories with the strongest operator and market signal in this edition.

Introducing GPT-5.4 mini and nano

OpenAI releases two compact variants of GPT‑5.4—mini and nano—targeting latency‑sensitive and resource‑constrained environments while retaining strong language capabilities.

Provides a tiered model lineup that enables deployment across cloud, edge, and device spectra, addressing the growing demand for efficient AI in real‑world applications.

- Mini variant (~1.3 B parameters) achieves ~90 % of GPT‑5.4 performance on MMLU with 4× lower latency.

- Nano variant (~200 M parameters) targets sub‑10 ms response times for chat and code‑completion use cases.

- Both models inherit the same tokenizer and alignment data, simplifying downstream integration.

Visa prepares payment systems for AI agent-initiated transactions

Visa is piloting systems that allow autonomous AI agents to initiate payment transactions, shifting the traditional human‑in‑the‑loop model toward agent‑driven commerce.

If successful, AI‑initiated payments could unlock new automation scenarios in B2B, subscription, and IoT commerce, while raising fresh considerations for fraud detection and regulatory compliance.

- Utilizes token‑based authentication and scoped API permissions to limit agent transaction capabilities.

- Integrates real‑time risk scoring models that evaluate agent behavior and contextual signals.

- Plans sandbox environments for agent‑to‑agent settlement testing before live rollout.

Multiply raises $9.5m for self-learning ads, reports 300%-500% pipeline increase for B2B companies

Multiply secures $9.5 M to expand its self‑learning advertisement platform, which leverages generative AI to optimize ad creatives and reports a 3‑5× increase in sales pipeline for B2B clients.

Demonstrates commercial traction of generative AI in marketing, highlighting revenue‑generating use cases that can drive further investment in foundation models.

- Uses fine‑tuned text‑to‑image models to generate brand‑safe ad variants at scale.

- Employs reinforcement learning to optimize click‑through and conversion signals in real time.

- Reports average 3.5× lift in qualified leads across pilot B2B campaigns.

NVIDIA wants enterprise AI agents safer to deploy

The NVIDIA Agent Toolkit is Jensen Huang’s answer to the question enterprises keep asking: how do we put AI agents to work without losing control of our data and our liability? Announced at GTC 2026 in San Jose on March 16, the NVIDIA Agent Toolkit is an open-source software...

NVIDIA wants enterprise AI agents safer to deploy matters because it signals momentum in agent, agents and may shift how teams prioritize models, tooling, or deployment choices.

- Primary signals: agent, agents.

- Source context: AI News published or updated this item on 03/19/2026.

**Introducing SPEED-Bench: A Unified and Diverse Benchmark for Speculative Decoding**

A Blog post by NVIDIA on Hugging Face

**Introducing SPEED-Bench: A Unified and Diverse Benchmark for Speculative Decoding** matters because it signals momentum in benchmark and may shift how teams prioritize models, tooling, or deployment choices.

- Primary signals: benchmark.

- Source context: Hugging Face Blog published or updated this item on 03/19/2026.

Differentiated source coverage.

Stories drawn from research blogs, first-party lab posts, practitioner newsletters, and selected technical outlets so the edition does not mirror the same headline across every source.

Identifying Interactions at Scale for LLMs

Understanding the behavior of complex machine learning systems, particularly Large Language Models (LLMs), is a critical challenge in modern artificial intelligence. Interpretability research aims to make the decision-making process more transparent to model builders and...

Identifying Interactions at Scale for LLMs matters because it signals momentum in llm, model and may shift how teams prioritize models, tooling, or deployment choices.

- Primary signals: llm, model.

- Source context: BAIR Blog published or updated this item on 03/13/2026.

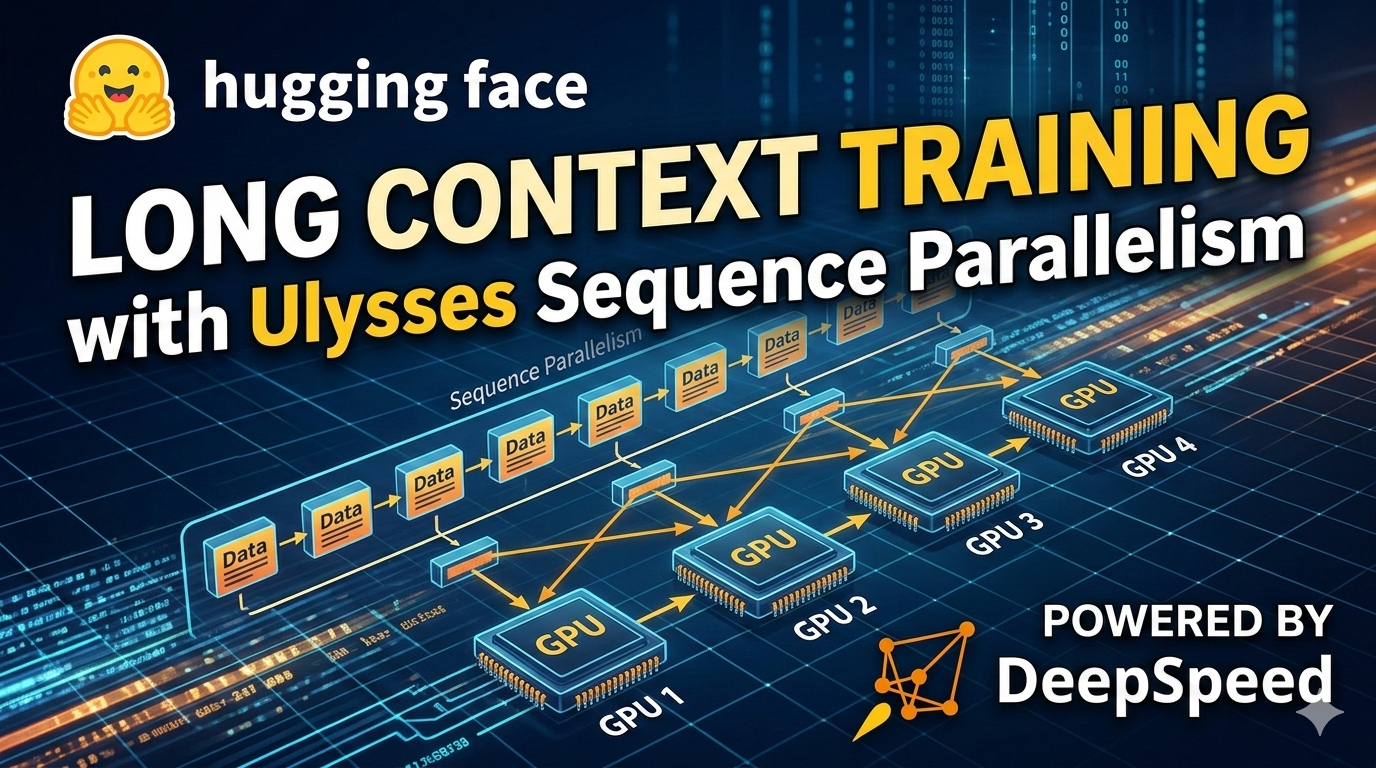

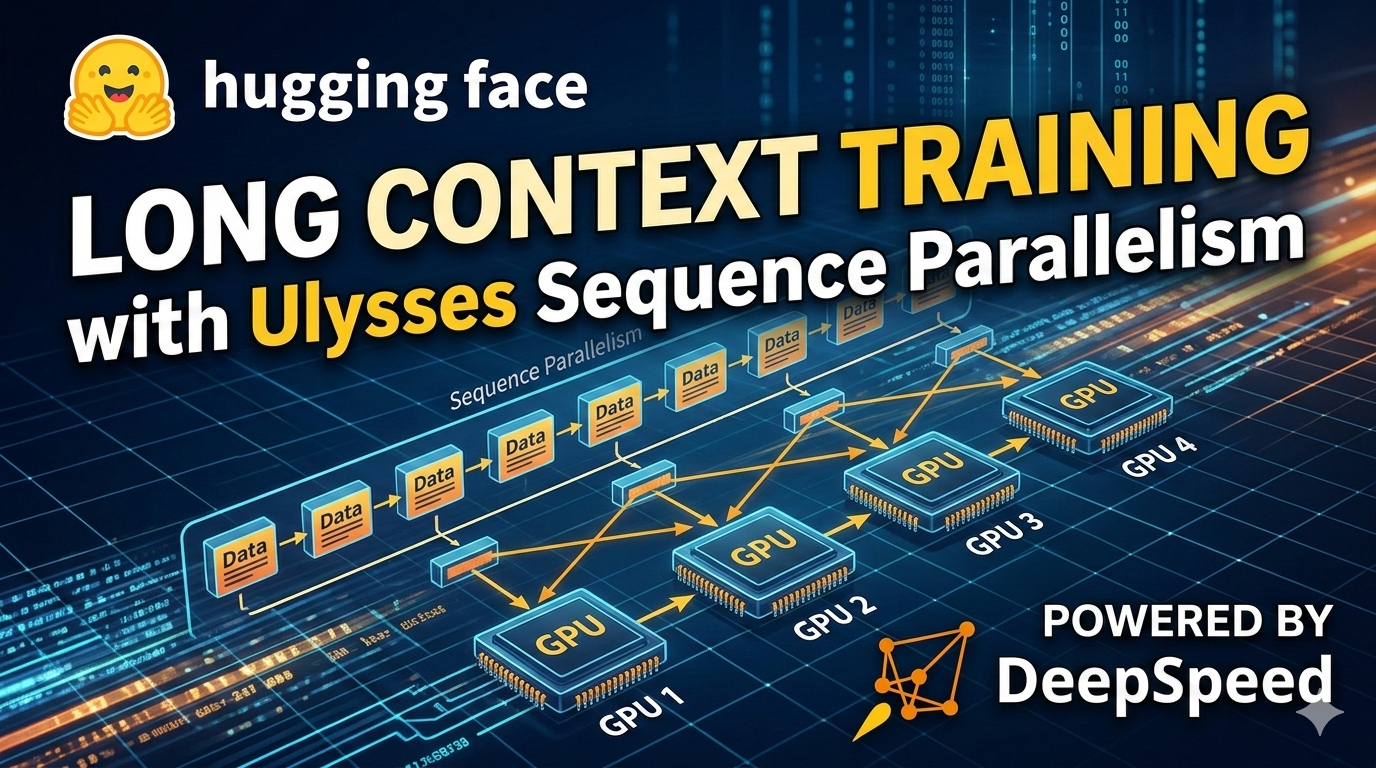

Ulysses Sequence Parallelism: Training with Million-Token Contexts

We’re on a journey to advance and democratize artificial intelligence through open source and open science.

Ulysses Sequence Parallelism: Training with Million-Token Contexts matters because it signals momentum in training and may shift how teams prioritize models, tooling, or deployment choices.

- Primary signals: training.

- Source context: Hugging Face Blog published or updated this item on 03/09/2026.

OpenAI to acquire Astral

OpenAI to acquire Astral OpenAI

OpenAI to acquire Astral matters because it signals momentum in the broader AI ecosystem and may shift how teams prioritize models, tooling, or deployment choices.

- Primary signals: AI platforms and product execution.

- Source context: OpenAI Research published or updated this item on 03/19/2026.

Measuring AI agent autonomy in practice

Measuring AI agent autonomy in practice Anthropic

Measuring AI agent autonomy in practice matters because it signals momentum in agent and may shift how teams prioritize models, tooling, or deployment choices.

- Primary signals: agent.

- Source context: Anthropic Research published or updated this item on 02/18/2026.

The ‘Bayesian’ Upgrade: Why Google AI’s New Teaching Method is the Key to LLM Reasoning

The ‘Bayesian’ Upgrade: Why Google AI’s New Teaching Method is the Key to LLM Reasoning MarkTechPost

The ‘Bayesian’ Upgrade: Why Google AI’s New Teaching Method is the Key to LLM Reasoning matters because it signals momentum in llm, reasoning and may shift how teams prioritize models, tooling, or deployment choices.

- Primary signals: llm, reasoning.

- Source context: MarkTechPost published or updated this item on 03/09/2026.

US Treasury publishes AI risk Guidebook for financial institutions

The U.S. Treasury releases a guidebook outlining a structured approach for banks and fintech firms to manage AI‑related operational, compliance, and systemic risks.

As AI

- Primary signals: financial regulation, risk management, policy.

- Source context: AI News published or updated this item on 03/16/2026.

QuantumBlack: A Global Force in Agentic AI Transformation

QuantumBlack: A Global Force in Agentic AI Transformation AI Magazine

QuantumBlack: A Global Force in Agentic AI Transformation matters because it signals momentum in agent and may shift how teams prioritize models, tooling, or deployment choices.

- Primary signals: agent.

- Source context: AI Magazine published or updated this item on 03/16/2026.

How Pokémon Go is giving delivery robots an inch-perfect view of the world

How Pokémon Go is giving delivery robots an inch-perfect view of the world MIT Technology Review

How Pokémon Go is giving delivery robots an inch-perfect view of the world matters because it signals momentum in the broader AI ecosystem and may shift how teams prioritize models, tooling, or deployment choices.

- Primary signals: AI platforms and product execution.

- Source context: MIT Tech Review AI published or updated this item on 03/10/2026.

Method, limitations, and results.

Paper summaries, methodology notes, limitations, and deep-dive bullets for the research items selected into the digest.

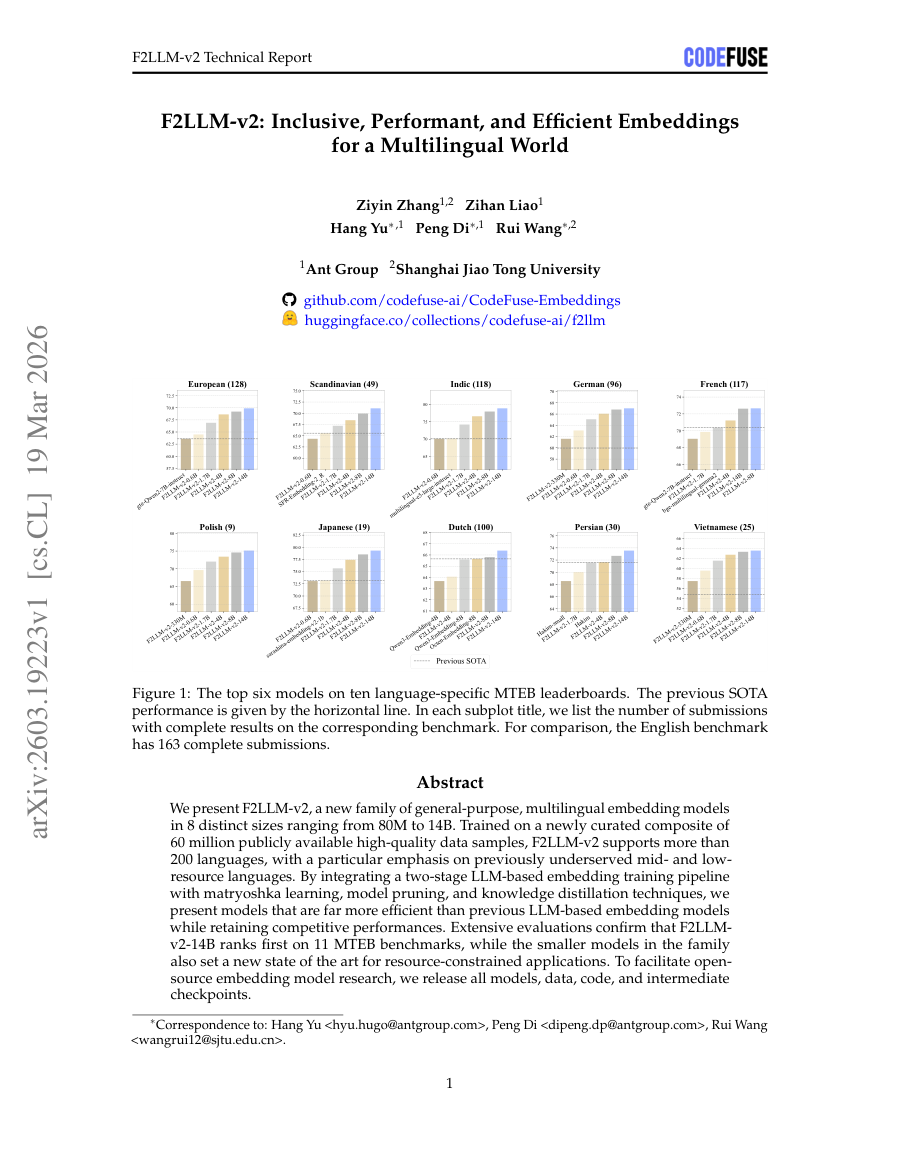

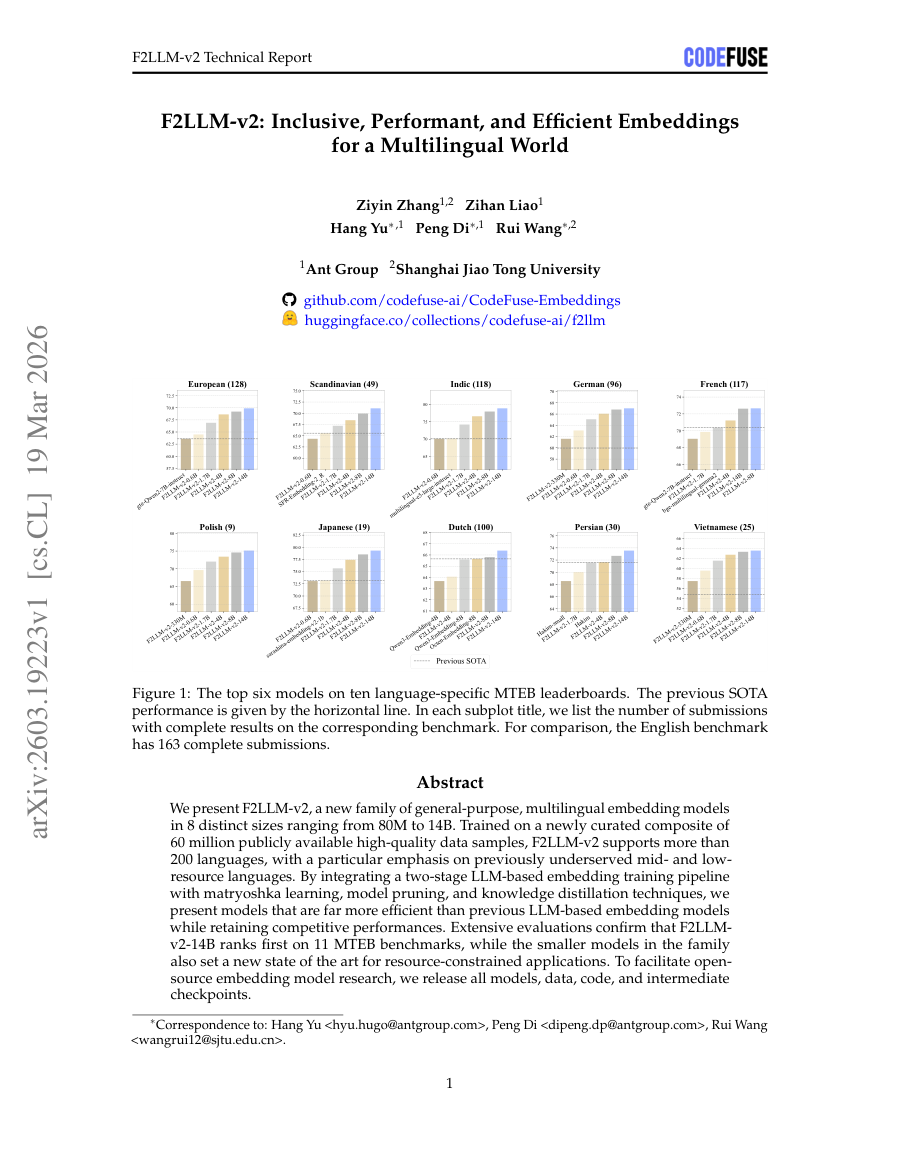

F2LLM-v2: Inclusive, Performant, and Efficient Embeddings for a Multilingual World

TL;DR: F2LLM-v2 is a multilingual embedding model family trained on 60 million samples across 200+ languages, achieving superior performance through LLM-based training, matryoshka learning, pruning, and distillation techniques.

F2LLM-v2 is a multilingual embedding model family trained on 60 million samples across 200+ languages, achieving superior performance through LLM-based training, matryoshka learning, pruning, and distillation techniques. We present F2LLM-v2, a new family of general-purpose,...

F2LLM-v2 is a multilingual embedding model family trained on 60 million samples across 200+ languages, achieving superior performance through LLM-based training, matryoshka learning, pruning, and distillation techniques.

We present F2LLM-v2, a new family of general-purpose, multilingual embedding models in 8 distinct sizes ranging from 80M to 14B.

F2LLM-v2 is a multilingual embedding model family trained on 60 million samples across 200+ languages, achieving superior performance through LLM-based training, matryoshka learning, pruning, and distillation techniques.

The reported improvement still needs a closer check on benchmark scope, ablations, and whether the method keeps working outside the authors' evaluation setup.

- Problem framing: F2LLM-v2 is a multilingual embedding model family trained on 60 million samples across 200+ languages, achieving superior performance through LLM-based training, matryoshka learning, pruning, and distillation techniques.

- Method signal: We present F2LLM-v2, a new family of general-purpose, multilingual embedding models in 8 distinct sizes ranging from 80M to 14B.

- Evidence to watch: F2LLM-v2 is a multilingual embedding model family trained on 60 million samples across 200+ languages, achieving superior performance through LLM-based training, matryoshka learning, pruning, and distillation techniques.

- Read-through priority: the PDF is available, so this is a good candidate for checking tables, ablations, and scaling tradeoffs beyond the abstract from Hugging Face Papers / arXiv.

- Problem: F2LLM-v2 is a multilingual embedding model family trained on 60 million samples across 200+ languages, achieving superior performance through LLM-based training, matryoshka learning, pruning, and...

- Approach: We present F2LLM-v2, a new family of general-purpose, multilingual embedding models in 8 distinct sizes ranging from 80M to 14B.

- Result signal: F2LLM-v2 is a multilingual embedding model family trained on 60 million samples across 200+ languages, achieving superior performance through LLM-based training, matryoshka learning, pruning, and...

- Community traction: Hugging Face Papers shows 20 votes for this paper.

- The reported improvement still needs a closer check on benchmark scope, ablations, and whether the method keeps working outside the authors' evaluation setup.

Generation Models Know Space: Unleashing Implicit 3D Priors for Scene Understanding

TL;DR: A video diffusion model is repurposed as a latent world simulator to enhance multimodal large language models with implicit 3D structural priors and physical laws through spatiotemporal feature extraction and...

A video diffusion model is repurposed as a latent world simulator to enhance multimodal large language models with implicit 3D structural priors and physical laws through spatiotemporal feature extraction and semantic integration. While Multimodal Large Language Models...

Existing solutions typically rely on explicit 3D modalities or complex geometric scaffolding, which are limited by data scarcity and generalization challenges.

In this work, we propose a paradigm shift by leveraging the implicit spatial prior within large-scale video generation models.

While Multimodal Large Language Models demonstrate impressive semantic capabilities, they often suffer from spatial blindness , struggling with fine-grained geometric reasoning and physical dynamics.

The summary does not include concrete numbers, so the practical size of the gain and the tradeoff against latency or data cost are still unclear.

- Problem framing: Existing solutions typically rely on explicit 3D modalities or complex geometric scaffolding, which are limited by data scarcity and generalization challenges.

- Method signal: In this work, we propose a paradigm shift by leveraging the implicit spatial prior within large-scale video generation models.

- Evidence to watch: While Multimodal Large Language Models demonstrate impressive semantic capabilities, they often suffer from spatial blindness , struggling with fine-grained geometric reasoning and physical dynamics.

- Read-through priority: the PDF is available, so this is a good candidate for checking tables, ablations, and scaling tradeoffs beyond the abstract from Hugging Face Papers / arXiv.

- Problem: Existing solutions typically rely on explicit 3D modalities or complex geometric scaffolding, which are limited by data scarcity and generalization challenges.

- Approach: In this work, we propose a paradigm shift by leveraging the implicit spatial prior within large-scale video generation models.

- Result signal: While Multimodal Large Language Models demonstrate impressive semantic capabilities, they often suffer from spatial blindness , struggling with fine-grained geometric reasoning and physical dynamics.

- Community traction: Hugging Face Papers shows 55 votes for this paper.

- The summary does not include concrete numbers, so the practical size of the gain and the tradeoff against latency or data cost are still unclear.

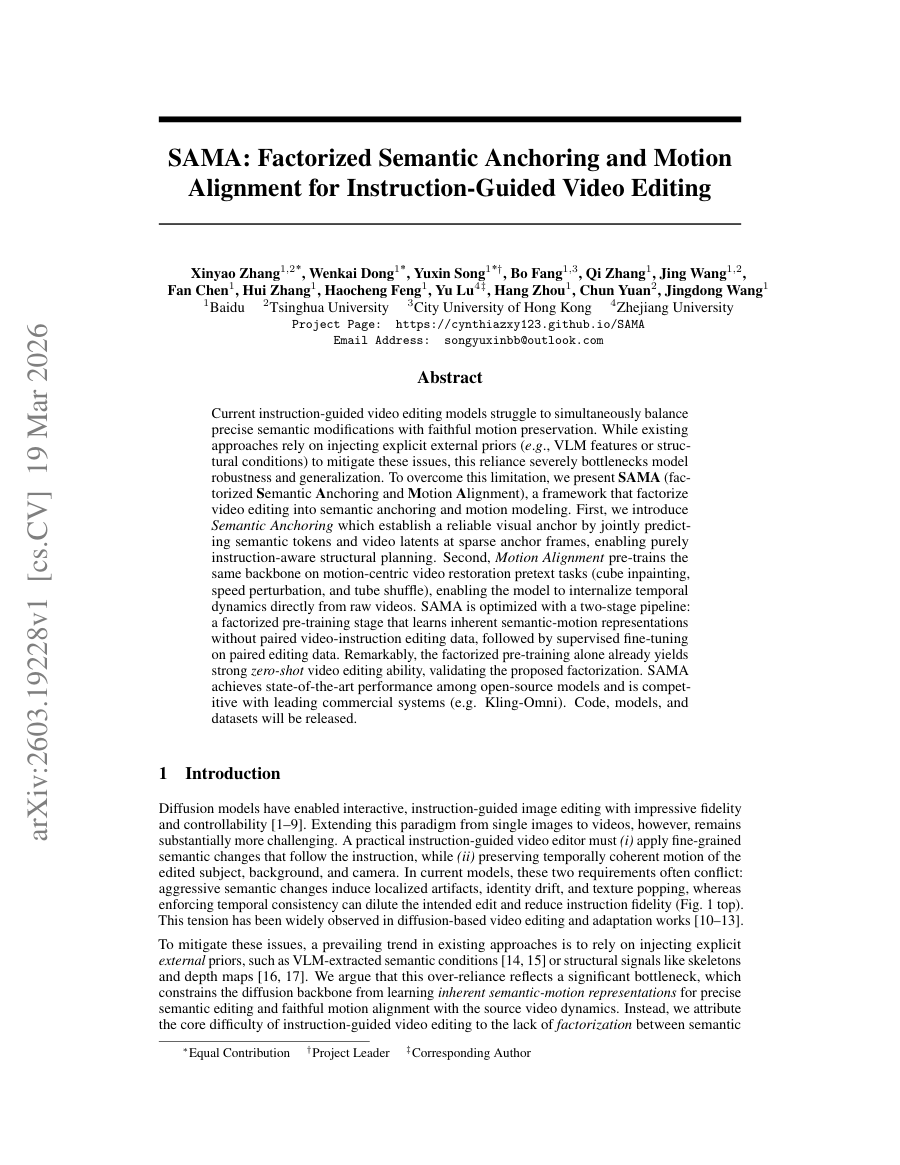

SAMA: Factorized Semantic Anchoring and Motion Alignment for Instruction-Guided Video Editing

TL;DR: SAMA presents a factorized approach to video editing that separates semantic anchoring from motion modeling, enabling instruction-guided edits with preserved motion through pre-trained motion restoration tasks and...

SAMA presents a factorized approach to video editing that separates semantic anchoring from motion modeling, enabling instruction-guided edits with preserved motion through pre-trained motion restoration tasks and sparse anchor frame prediction. Current instruction-guided...

SAMA presents a factorized approach to video editing that separates semantic anchoring from motion modeling, enabling instruction-guided edits with preserved motion through pre-trained motion restoration tasks and sparse anchor frame prediction.

To overcome this limitation, we present SAMA (factorized Semantic Anchoring and Motion Alignment ), a framework that factorizes video editing into semantic anchoring and motion modeling.

SAMA achieves state-of-the-art performance among open-source models and is competitive with leading commercial systems (e.g., Kling-Omni).

The summary does not include concrete numbers, so the practical size of the gain and the tradeoff against latency or data cost are still unclear.

- Problem framing: SAMA presents a factorized approach to video editing that separates semantic anchoring from motion modeling, enabling instruction-guided edits with preserved motion through pre-trained motion restoration tasks and sparse anchor frame...

- Method signal: To overcome this limitation, we present SAMA (factorized Semantic Anchoring and Motion Alignment ), a framework that factorizes video editing into semantic anchoring and motion modeling.

- Evidence to watch: SAMA achieves state-of-the-art performance among open-source models and is competitive with leading commercial systems (e.g., Kling-Omni).

- Read-through priority: the PDF is available, so this is a good candidate for checking tables, ablations, and scaling tradeoffs beyond the abstract from Hugging Face Papers / arXiv.

- Problem: SAMA presents a factorized approach to video editing that separates semantic anchoring from motion modeling, enabling instruction-guided edits with preserved motion through pre-trained motion restoration...

- Approach: To overcome this limitation, we present SAMA (factorized Semantic Anchoring and Motion Alignment ), a framework that factorizes video editing into semantic anchoring and motion modeling.

- Result signal: SAMA achieves state-of-the-art performance among open-source models and is competitive with leading commercial systems (e.g., Kling-Omni).

- Community traction: Hugging Face Papers shows 21 votes for this paper.

- The summary does not include concrete numbers, so the practical size of the gain and the tradeoff against latency or data cost are still unclear.

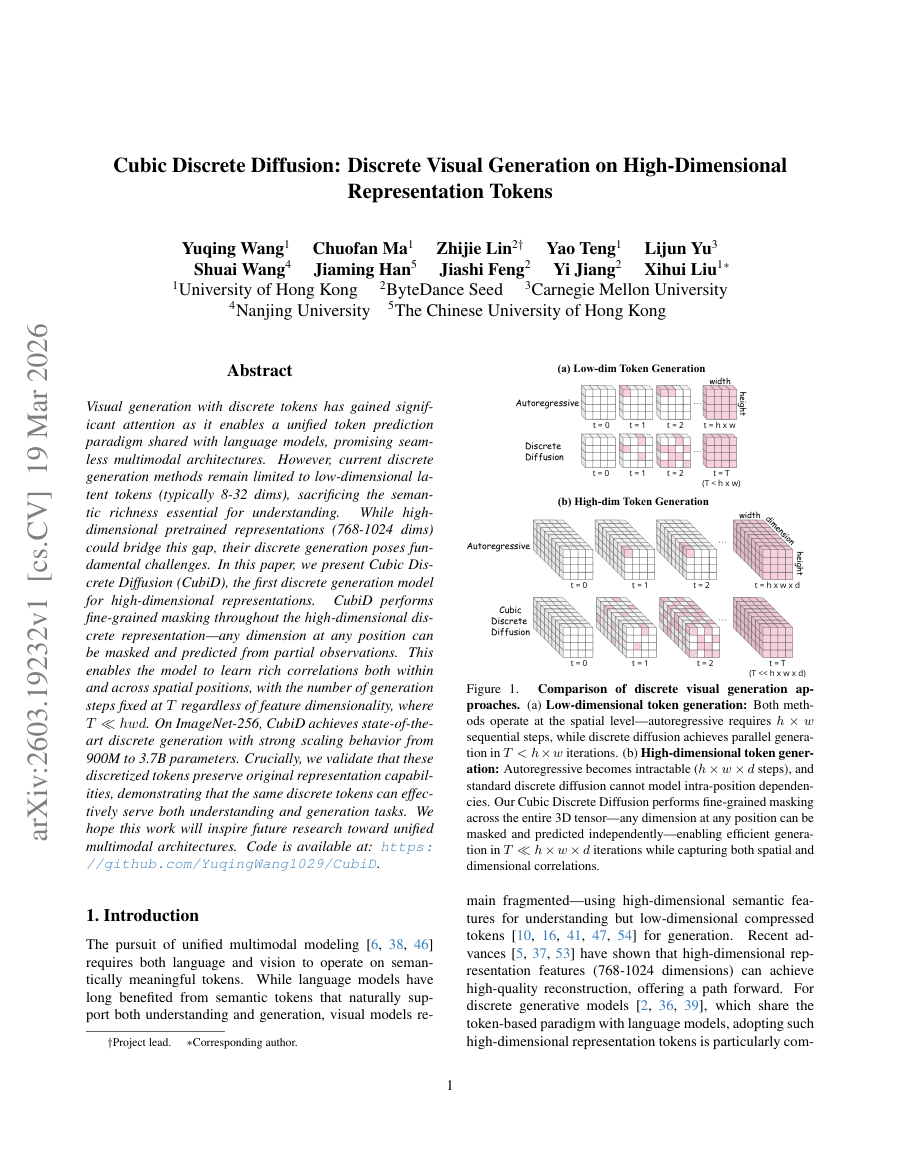

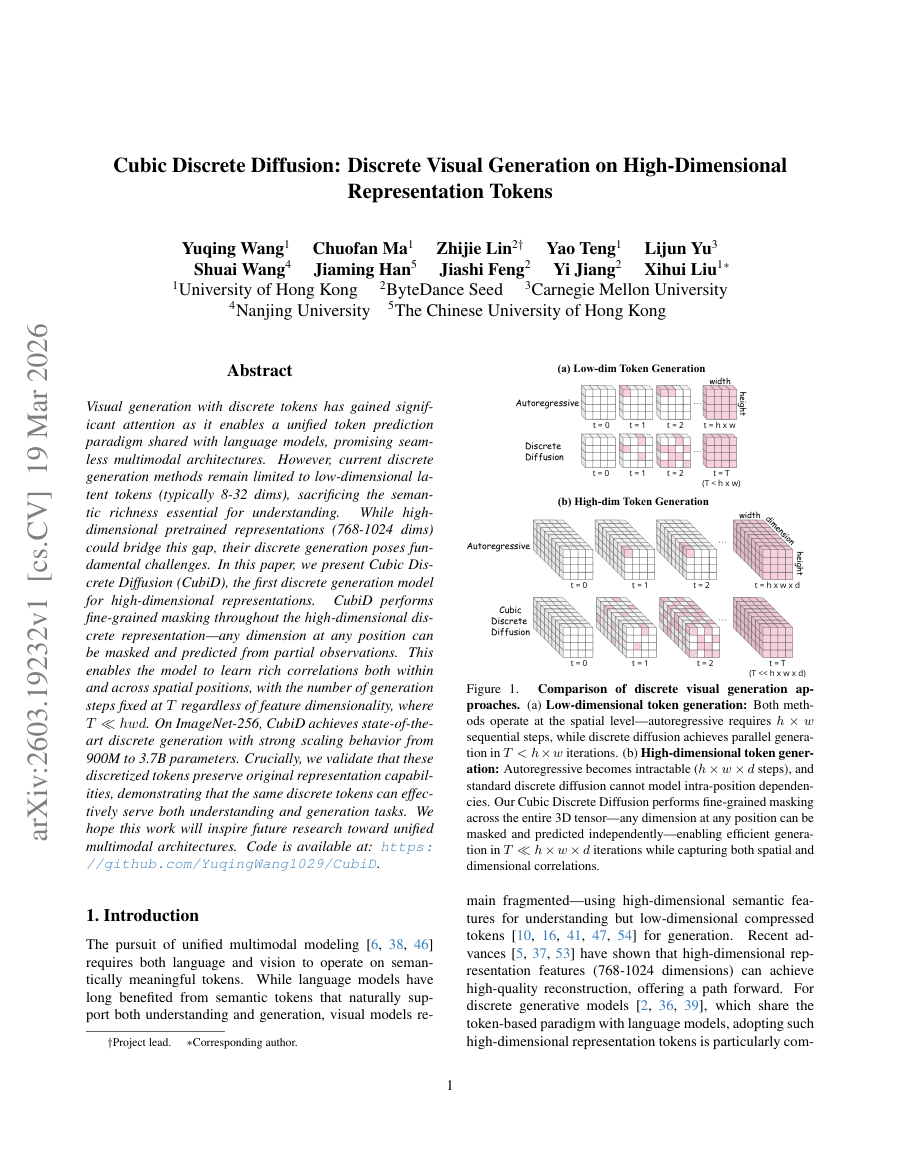

Cubic Discrete Diffusion: Discrete Visual Generation on High-Dimensional Representation Tokens

TL;DR: CubiD is a discrete generation model for high-dimensional representations that enables fine-grained masking and learns rich correlations across spatial positions while maintaining fixed generation steps regardless of...

CubiD is a discrete generation model for high-dimensional representations that enables fine-grained masking and learns rich correlations across spatial positions while maintaining fixed generation steps regardless of feature dimensionality. Visual generation with discrete...

While high-dimensional pretrained representations (768-1024 dims) could bridge this gap, their discrete generation poses fundamental challenges.

In this paper, we present Cubic Discrete Diffusion (CubiD), the first discrete generation model for high-dimensional representations .

On ImageNet-256, CubiD achieves state-of-the-art discrete generation with strong scaling behavior from 900M to 3.7B parameters.

The reported improvement still needs a closer check on benchmark scope, ablations, and whether the method keeps working outside the authors' evaluation setup.

- Problem framing: While high-dimensional pretrained representations (768-1024 dims) could bridge this gap, their discrete generation poses fundamental challenges.

- Method signal: In this paper, we present Cubic Discrete Diffusion (CubiD), the first discrete generation model for high-dimensional representations .

- Evidence to watch: On ImageNet-256, CubiD achieves state-of-the-art discrete generation with strong scaling behavior from 900M to 3.7B parameters.

- Read-through priority: the PDF is available, so this is a good candidate for checking tables, ablations, and scaling tradeoffs beyond the abstract from Hugging Face Papers / arXiv.

- Problem: While high-dimensional pretrained representations (768-1024 dims) could bridge this gap, their discrete generation poses fundamental challenges.

- Approach: In this paper, we present Cubic Discrete Diffusion (CubiD), the first discrete generation model for high-dimensional representations .

- Result signal: On ImageNet-256, CubiD achieves state-of-the-art discrete generation with strong scaling behavior from 900M to 3.7B parameters.

- Community traction: Hugging Face Papers shows 21 votes for this paper.

- The reported improvement still needs a closer check on benchmark scope, ablations, and whether the method keeps working outside the authors' evaluation setup.

FASTER: Rethinking Real-Time Flow VLAs

TL;DR: Fast Action Sampling for ImmediaTE Reaction (FASTER) reduces real-time reaction latency in Vision-Language-Action models by adapting sampling schedules to prioritize immediate actions while maintaining long-horizon...

Fast Action Sampling for ImmediaTE Reaction (FASTER) reduces real-time reaction latency in Vision-Language-Action models by adapting sampling schedules to prioritize immediate actions while maintaining long-horizon trajectory quality. Real-time execution is crucial for...

Moreover, we reveal that the standard practice of applying a constant schedule in flow-based VLA s can be inefficient and forces the system to complete all sampling steps before any movement can start, forming the bottleneck in reaction latency.

By rethinking the notion of reaction in action chunking policies , this paper presents a systematic analysis of the factors governing reaction time .

Moreover, we reveal that the standard practice of applying a constant schedule in flow-based VLA s can be inefficient and forces the system to complete all sampling steps before any movement can start, forming the bottleneck in reaction latency.

The summary does not include concrete numbers, so the practical size of the gain and the tradeoff against latency or data cost are still unclear.

- Problem framing: Moreover, we reveal that the standard practice of applying a constant schedule in flow-based VLA s can be inefficient and forces the system to complete all sampling steps before any movement can start, forming the bottleneck in reaction...

- Method signal: By rethinking the notion of reaction in action chunking policies , this paper presents a systematic analysis of the factors governing reaction time .

- Evidence to watch: Moreover, we reveal that the standard practice of applying a constant schedule in flow-based VLA s can be inefficient and forces the system to complete all sampling steps before any movement can start, forming the bottleneck in reaction...

- Read-through priority: the PDF is available, so this is a good candidate for checking tables, ablations, and scaling tradeoffs beyond the abstract from Hugging Face Papers / arXiv.

- Problem: Moreover, we reveal that the standard practice of applying a constant schedule in flow-based VLA s can be inefficient and forces the system to complete all sampling steps before any movement can start,...

- Approach: By rethinking the notion of reaction in action chunking policies , this paper presents a systematic analysis of the factors governing reaction time .

- Result signal: Moreover, we reveal that the standard practice of applying a constant schedule in flow-based VLA s can be inefficient and forces the system to complete all sampling steps before any movement can start,...

- Community traction: Hugging Face Papers shows 34 votes for this paper.

- The summary does not include concrete numbers, so the practical size of the gain and the tradeoff against latency or data cost are still unclear.

Everything selected into the run.

The complete analyzed stream for the issue, useful when you want to scan the entire run instead of only the curated front page.

Introducing GPT-5.4 mini and nano

OpenAI releases two compact variants of GPT‑5.4—mini and nano—targeting latency‑sensitive and resource‑constrained environments while retaining strong language capabilities.

Provides a tiered model lineup that enables deployment across cloud, edge, and device spectra, addressing the growing demand for efficient AI in real‑world applications.

- Mini variant (~1.3 B parameters) achieves ~90 % of GPT‑5.4 performance on MMLU with 4× lower latency.

- Nano variant (~200 M parameters) targets sub‑10 ms response times for chat and code‑completion use cases.

- Both models inherit the same tokenizer and alignment data, simplifying downstream integration.

Visa prepares payment systems for AI agent-initiated transactions

Visa is piloting systems that allow autonomous AI agents to initiate payment transactions, shifting the traditional human‑in‑the‑loop model toward agent‑driven commerce.

If successful, AI‑initiated payments could unlock new automation scenarios in B2B, subscription, and IoT commerce, while raising fresh considerations for fraud detection and regulatory compliance.

- Utilizes token‑based authentication and scoped API permissions to limit agent transaction capabilities.

- Integrates real‑time risk scoring models that evaluate agent behavior and contextual signals.

- Plans sandbox environments for agent‑to‑agent settlement testing before live rollout.

Multiply raises $9.5m for self-learning ads, reports 300%-500% pipeline increase for B2B companies

Multiply secures $9.5 M to expand its self‑learning advertisement platform, which leverages generative AI to optimize ad creatives and reports a 3‑5× increase in sales pipeline for B2B clients.

Demonstrates commercial traction of generative AI in marketing, highlighting revenue‑generating use cases that can drive further investment in foundation models.

- Uses fine‑tuned text‑to‑image models to generate brand‑safe ad variants at scale.

- Employs reinforcement learning to optimize click‑through and conversion signals in real time.

- Reports average 3.5× lift in qualified leads across pilot B2B campaigns.

NVIDIA wants enterprise AI agents safer to deploy

The NVIDIA Agent Toolkit is Jensen Huang’s answer to the question enterprises keep asking: how do we put AI agents to work without losing control of our data and our liability? Announced at GTC 2026 in San Jose on March 16, the NVIDIA Agent Toolkit is an open-source software...

NVIDIA wants enterprise AI agents safer to deploy matters because it signals momentum in agent, agents and may shift how teams prioritize models, tooling, or deployment choices.

- Primary signals: agent, agents.

- Source context: AI News published or updated this item on 03/19/2026.

**Introducing SPEED-Bench: A Unified and Diverse Benchmark for Speculative Decoding**

A Blog post by NVIDIA on Hugging Face

**Introducing SPEED-Bench: A Unified and Diverse Benchmark for Speculative Decoding** matters because it signals momentum in benchmark and may shift how teams prioritize models, tooling, or deployment choices.

- Primary signals: benchmark.

- Source context: Hugging Face Blog published or updated this item on 03/19/2026.

Trustpilot partners with AI companies as traditional search declines

Trustpilot is reported to be pursuing partnerships with large eCommerce companies as AI-driven shopping gains traction. In an interview with Bloomberg News [paywall], chief executive Adrian Blair said that AI agents acting on behalf of consumers require lots of information...

Trustpilot partners with AI companies as traditional search declines matters because it signals momentum in agent, agents and may shift how teams prioritize models, tooling, or deployment choices.

- Primary signals: agent, agents.

- Source context: AI News published or updated this item on 03/17/2026.

The ‘Bayesian’ Upgrade: Why Google AI’s New Teaching Method is the Key to LLM Reasoning

The ‘Bayesian’ Upgrade: Why Google AI’s New Teaching Method is the Key to LLM Reasoning MarkTechPost

The ‘Bayesian’ Upgrade: Why Google AI’s New Teaching Method is the Key to LLM Reasoning matters because it signals momentum in llm, reasoning and may shift how teams prioritize models, tooling, or deployment choices.

- Primary signals: llm, reasoning.

- Source context: MarkTechPost published or updated this item on 03/09/2026.

Identifying Interactions at Scale for LLMs

Understanding the behavior of complex machine learning systems, particularly Large Language Models (LLMs), is a critical challenge in modern artificial intelligence. Interpretability research aims to make the decision-making process more transparent to model builders and...

Identifying Interactions at Scale for LLMs matters because it signals momentum in llm, model and may shift how teams prioritize models, tooling, or deployment choices.

- Primary signals: llm, model.

- Source context: BAIR Blog published or updated this item on 03/13/2026.

7 Emerging Memory Architectures for AI Agents

7 Emerging Memory Architectures for AI Agents Turing Post

7 Emerging Memory Architectures for AI Agents matters because it signals momentum in agent, agents and may shift how teams prioritize models, tooling, or deployment choices.

- Primary signals: agent, agents.

- Source context: Turing Post published or updated this item on 03/15/2026.

NTT DATA and NVIDIA bring enterprise AI factories to production scale

NTT DATA has announced an initiative to deliver NVIDIA-powered platforms designed to give organisations a repeatable, production-ready model for scaling AI. The offering integrates NVIDIA’s GPU-accelerated computing and high-performance networking with NVIDIA AI Enterprise...

NTT DATA and NVIDIA bring enterprise AI factories to production scale matters because it signals momentum in agent, model and may shift how teams prioritize models, tooling, or deployment choices.

- Primary signals: agent, model.

- Source context: AI News published or updated this item on 03/16/2026.

OpenAI to acquire Astral

OpenAI to acquire Astral OpenAI

OpenAI to acquire Astral matters because it signals momentum in the broader AI ecosystem and may shift how teams prioritize models, tooling, or deployment choices.

- Primary signals: AI platforms and product execution.

- Source context: OpenAI Research published or updated this item on 03/19/2026.

FOD#144: New Scaling Law? What “Agentic Scaling" Is – Inside NVIDIA’s Biggest Idea at GTC 2026

FOD#144: New Scaling Law? What “Agentic Scaling" Is – Inside NVIDIA’s Biggest Idea at GTC 2026 Turing Post

FOD#144: New Scaling Law? What “Agentic Scaling" Is – Inside NVIDIA’s Biggest Idea at GTC 2026 matters because it signals momentum in agent and may shift how teams prioritize models, tooling, or deployment choices.

- Primary signals: agent.

- Source context: Turing Post published or updated this item on 03/17/2026.

Measuring AI agent autonomy in practice

Measuring AI agent autonomy in practice Anthropic

Measuring AI agent autonomy in practice matters because it signals momentum in agent and may shift how teams prioritize models, tooling, or deployment choices.

- Primary signals: agent.

- Source context: Anthropic Research published or updated this item on 02/18/2026.

An update on our model deprecation commitments for Claude Opus 3

An update on our model deprecation commitments for Claude Opus 3 Anthropic

An update on our model deprecation commitments for Claude Opus 3 matters because it signals momentum in model and may shift how teams prioritize models, tooling, or deployment choices.

- Primary signals: model.

- Source context: Anthropic Research published or updated this item on 02/25/2026.

Introducing GPT-5.4

Introducing GPT-5.4 OpenAI

Introducing GPT-5.4 matters because it signals momentum in gpt and may shift how teams prioritize models, tooling, or deployment choices.

- Primary signals: gpt.

- Source context: OpenAI Research published or updated this item on 03/05/2026.

Inside Reflection AI: The $20B Open-Model Startup That Has Yet to Ship

Inside Reflection AI: The $20B Open-Model Startup That Has Yet to Ship Turing Post

Inside Reflection AI: The $20B Open-Model Startup That Has Yet to Ship matters because it signals momentum in model and may shift how teams prioritize models, tooling, or deployment choices.

- Primary signals: model.

- Source context: Turing Post published or updated this item on 03/08/2026.

Ulysses Sequence Parallelism: Training with Million-Token Contexts

We’re on a journey to advance and democratize artificial intelligence through open source and open science.

Ulysses Sequence Parallelism: Training with Million-Token Contexts matters because it signals momentum in training and may shift how teams prioritize models, tooling, or deployment choices.

- Primary signals: training.

- Source context: Hugging Face Blog published or updated this item on 03/09/2026.

New ways to learn math and science in ChatGPT

New ways to learn math and science in ChatGPT OpenAI

New ways to learn math and science in ChatGPT matters because it signals momentum in gpt and may shift how teams prioritize models, tooling, or deployment choices.

- Primary signals: gpt.

- Source context: OpenAI Research published or updated this item on 03/10/2026.

QuantumBlack: A Global Force in Agentic AI Transformation

QuantumBlack: A Global Force in Agentic AI Transformation AI Magazine

QuantumBlack: A Global Force in Agentic AI Transformation matters because it signals momentum in agent and may shift how teams prioritize models, tooling, or deployment choices.

- Primary signals: agent.

- Source context: AI Magazine published or updated this item on 03/16/2026.

Google Labs turns Stitch into a full AI design platform that converts plain text into user interfaces

Google Labs turns Stitch into a full AI design platform that converts plain text into user interfaces the-decoder.com

Google Labs turns Stitch into a full AI design platform that converts plain text into user interfaces matters because it signals momentum in the broader AI ecosystem and may shift how teams prioritize models, tooling, or deployment choices.

- Primary signals: AI platforms and product execution.

- Source context: The Decoder published or updated this item on 03/18/2026.

Goldman Sachs sees AI investment shift to data centres

Artificial intelligence investment is entering a more selective phase as companies and investors look beyond early excitement and focus on the data centre infrastructure required to run AI systems. Recent analysis from Goldman Sachs suggests the market is moving toward what...

Goldman Sachs sees AI investment shift to data centres matters because it signals momentum in the broader AI ecosystem and may shift how teams prioritize models, tooling, or deployment choices.

- Primary signals: AI platforms and product execution.

- Source context: AI News published or updated this item on 03/17/2026.

AI 101: OpenClaw Explained + lightweight alternatives

AI 101: OpenClaw Explained + lightweight alternatives Turing Post

AI 101: OpenClaw Explained + lightweight alternatives matters because it signals momentum in the broader AI ecosystem and may shift how teams prioritize models, tooling, or deployment choices.

- Primary signals: AI platforms and product execution.

- Source context: Turing Post published or updated this item on 02/19/2026.

Anthropic Education Report: The AI Fluency Index

Anthropic Education Report: The AI Fluency Index Anthropic

Anthropic Education Report: The AI Fluency Index matters because it signals momentum in the broader AI ecosystem and may shift how teams prioritize models, tooling, or deployment choices.

- Primary signals: AI platforms and product execution.

- Source context: Anthropic Research published or updated this item on 02/23/2026.

Labor market impacts of AI: A new measure and early evidence

Labor market impacts of AI: A new measure and early evidence Anthropic

Labor market impacts of AI: A new measure and early evidence matters because it signals momentum in the broader AI ecosystem and may shift how teams prioritize models, tooling, or deployment choices.

- Primary signals: AI platforms and product execution.

- Source context: Anthropic Research published or updated this item on 03/05/2026.

Granite 4.0 1B Speech: Compact, Multilingual, and Built for the Edge

A Blog post by IBM Granite on Hugging Face

Granite 4.0 1B Speech: Compact, Multilingual, and Built for the Edge matters because it signals momentum in the broader AI ecosystem and may shift how teams prioritize models, tooling, or deployment choices.

- Primary signals: AI platforms and product execution.

- Source context: Hugging Face Blog published or updated this item on 03/09/2026.

LeRobot v0.5.0: Scaling Every Dimension

We’re on a journey to advance and democratize artificial intelligence through open source and open science.

LeRobot v0.5.0: Scaling Every Dimension matters because it signals momentum in the broader AI ecosystem and may shift how teams prioritize models, tooling, or deployment choices.

- Primary signals: AI platforms and product execution.

- Source context: Hugging Face Blog published or updated this item on 03/09/2026.

How Pokémon Go is giving delivery robots an inch-perfect view of the world

How Pokémon Go is giving delivery robots an inch-perfect view of the world MIT Technology Review

How Pokémon Go is giving delivery robots an inch-perfect view of the world matters because it signals momentum in the broader AI ecosystem and may shift how teams prioritize models, tooling, or deployment choices.

- Primary signals: AI platforms and product execution.

- Source context: MIT Tech Review AI published or updated this item on 03/10/2026.

Introducing Storage Buckets on the Hugging Face Hub

We’re on a journey to advance and democratize artificial intelligence through open source and open science.

Introducing Storage Buckets on the Hugging Face Hub matters because it signals momentum in the broader AI ecosystem and may shift how teams prioritize models, tooling, or deployment choices.

- Primary signals: AI platforms and product execution.

- Source context: Hugging Face Blog published or updated this item on 03/10/2026.

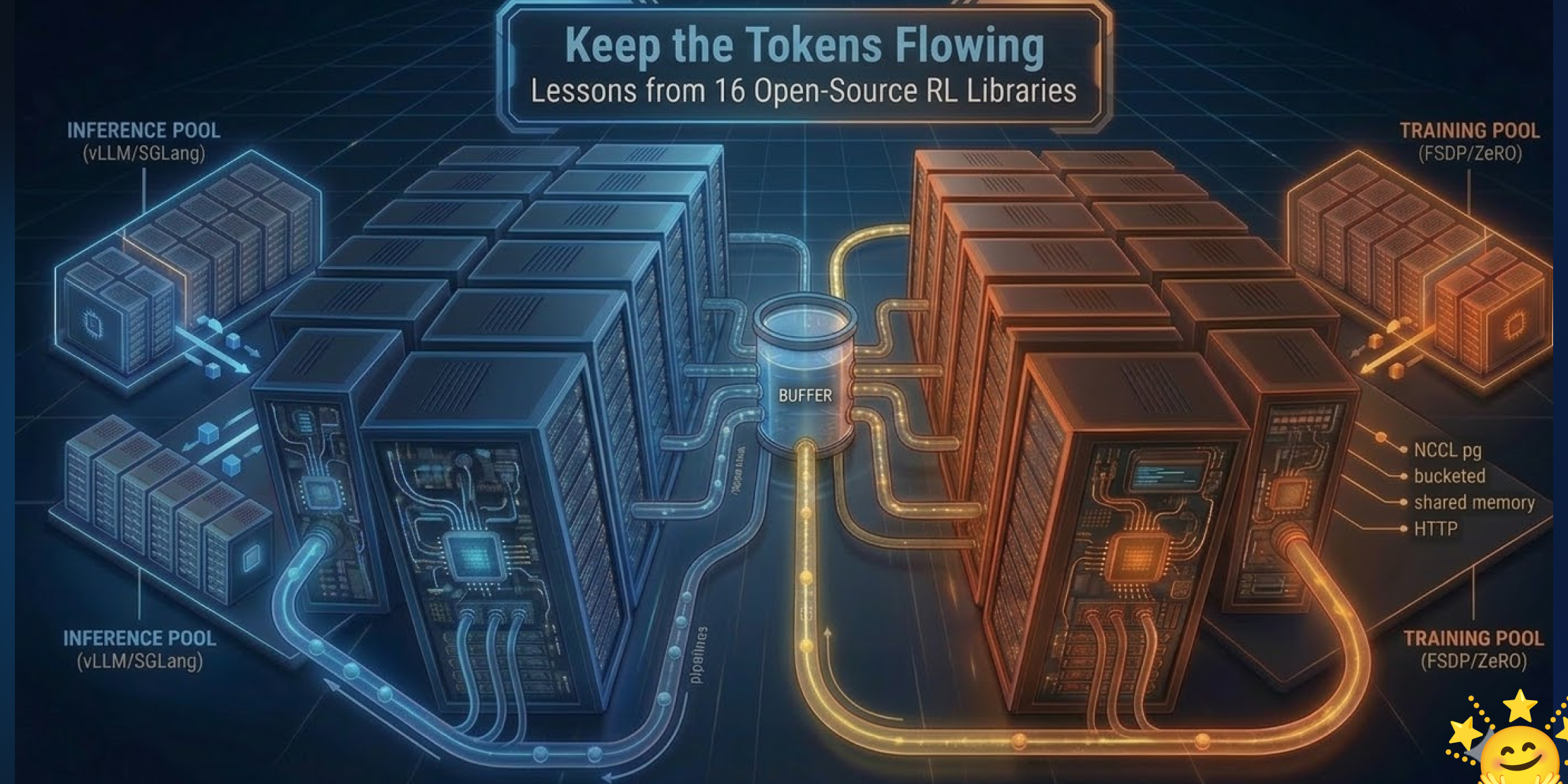

Keep the Tokens Flowing: Lessons from 16 Open-Source RL Libraries

We’re on a journey to advance and democratize artificial intelligence through open source and open science.

Keep the Tokens Flowing: Lessons from 16 Open-Source RL Libraries matters because it signals momentum in the broader AI ecosystem and may shift how teams prioritize models, tooling, or deployment choices.

- Primary signals: AI platforms and product execution.

- Source context: Hugging Face Blog published or updated this item on 03/10/2026.

Why physical AI is becoming manufacturing’s next advantage

Why physical AI is becoming manufacturing’s next advantage MIT Technology Review

Why physical AI is becoming manufacturing’s next advantage matters because it signals momentum in the broader AI ecosystem and may shift how teams prioritize models, tooling, or deployment choices.

- Primary signals: AI platforms and product execution.

- Source context: MIT Tech Review AI published or updated this item on 03/13/2026.

Garry Tan Releases gstack: An Open-Source Claude Code System for Planning, Code Review, QA, and Shipping

Garry Tan Releases gstack: An Open-Source Claude Code System for Planning, Code Review, QA, and Shipping MarkTechPost

Garry Tan Releases gstack: An Open-Source Claude Code System for Planning, Code Review, QA, and Shipping matters because it signals momentum in the broader AI ecosystem and may shift how teams prioritize models, tooling, or deployment choices.

- Primary signals: AI platforms and product execution.

- Source context: MarkTechPost published or updated this item on 03/14/2026.

Deloitte: Why Business Agility is Central to AI Adoption

Deloitte: Why Business Agility is Central to AI Adoption AI Magazine

Deloitte: Why Business Agility is Central to AI Adoption matters because it signals momentum in the broader AI ecosystem and may shift how teams prioritize models, tooling, or deployment choices.

- Primary signals: AI platforms and product execution.

- Source context: AI Magazine published or updated this item on 03/16/2026.

Where OpenAI’s technology could show up in Iran

Analysts speculate on potential channels through which OpenAI’s models or derived technologies could reach Iranian entities despite existing sanctions, highlighting the challenges of enforcing AI export controls.

The diffusion of advanced generative AI could accelerate disinformation, cyber‑offensive capabilities, or indigenous AI development in sanctioned states, complicating non

- Examines possible routes via third‑party cloud providers, open‑weight model releases, and academic collaborations.

- Highlights the difficulty of monitoring model fine‑tuning and derivative work across jurisdictional boundaries.

- Suggests tightening of compute‑export licensing and heightened scrutiny of API usage patterns as mitigation.

Holotron-12B - High Throughput Computer Use Agent

A Blog post by H company on Hugging Face

Holotron-12B - High Throughput Computer Use Agent matters because it affects the policy, supply-chain, or security constraints around AI development, especially across compute, agent.

- Primary signals: compute, agent.

- Source context: Hugging Face Blog published or updated this item on 03/17/2026.

A defense official reveals how AI chatbots could be used for targeting decisions

A defense official reveals how AI chatbots could be used for targeting decisions MIT Technology Review

A defense official reveals how AI chatbots could be used for targeting decisions matters because it affects the policy, supply-chain, or security constraints around AI development, especially across defense, chatbot.

- Primary signals: defense, chatbot.

- Source context: MIT Tech Review AI published or updated this item on 03/12/2026.

State of Open Source on Hugging Face: Spring 2026

A Blog post by Hugging Face on Hugging Face

State of Open Source on Hugging Face: Spring 2026 matters because it affects the policy, supply-chain, or security constraints around AI development, especially across state.

- Primary signals: state.

- Source context: Hugging Face Blog published or updated this item on 03/17/2026.

Hua Hong becomes the second Chinese chipmaker to crack 7nm manufacturing as Beijing pushes for AI independence

Hua Hong becomes the second Chinese chipmaker to crack 7nm manufacturing as Beijing pushes for AI independence the-decoder.com

Hua Hong becomes the second Chinese chipmaker to crack 7nm manufacturing as Beijing pushes for AI independence matters because it affects the policy, supply-chain, or security constraints around AI development, especially across chip.

- Primary signals: chip.

- Source context: The Decoder published or updated this item on 03/16/2026.

US Treasury publishes AI risk Guidebook for financial institutions

The U.S. Treasury releases a guidebook outlining a structured approach for banks and fintech firms to manage AI‑related operational, compliance, and systemic risks.

As AI

- Primary signals: financial regulation, risk management, policy.

- Source context: AI News published or updated this item on 03/16/2026.

F2LLM-v2: Inclusive, Performant, and Efficient Embeddings for a Multilingual World

TL;DR: F2LLM-v2 is a multilingual embedding model family trained on 60 million samples across 200+ languages, achieving superior performance through LLM-based training, matryoshka learning, pruning, and distillation techniques.

F2LLM-v2 is a multilingual embedding model family trained on 60 million samples across 200+ languages, achieving superior performance through LLM-based training, matryoshka learning, pruning, and distillation techniques. We present F2LLM-v2, a new family of general-purpose,...

F2LLM-v2 is a multilingual embedding model family trained on 60 million samples across 200+ languages, achieving superior performance through LLM-based training, matryoshka learning, pruning, and distillation techniques.

We present F2LLM-v2, a new family of general-purpose, multilingual embedding models in 8 distinct sizes ranging from 80M to 14B.

F2LLM-v2 is a multilingual embedding model family trained on 60 million samples across 200+ languages, achieving superior performance through LLM-based training, matryoshka learning, pruning, and distillation techniques.

The reported improvement still needs a closer check on benchmark scope, ablations, and whether the method keeps working outside the authors' evaluation setup.

- Problem framing: F2LLM-v2 is a multilingual embedding model family trained on 60 million samples across 200+ languages, achieving superior performance through LLM-based training, matryoshka learning, pruning, and distillation techniques.

- Method signal: We present F2LLM-v2, a new family of general-purpose, multilingual embedding models in 8 distinct sizes ranging from 80M to 14B.

- Evidence to watch: F2LLM-v2 is a multilingual embedding model family trained on 60 million samples across 200+ languages, achieving superior performance through LLM-based training, matryoshka learning, pruning, and distillation techniques.

- Read-through priority: the PDF is available, so this is a good candidate for checking tables, ablations, and scaling tradeoffs beyond the abstract from Hugging Face Papers / arXiv.

- Problem: F2LLM-v2 is a multilingual embedding model family trained on 60 million samples across 200+ languages, achieving superior performance through LLM-based training, matryoshka learning, pruning, and...

- Approach: We present F2LLM-v2, a new family of general-purpose, multilingual embedding models in 8 distinct sizes ranging from 80M to 14B.

- Result signal: F2LLM-v2 is a multilingual embedding model family trained on 60 million samples across 200+ languages, achieving superior performance through LLM-based training, matryoshka learning, pruning, and...

- Community traction: Hugging Face Papers shows 20 votes for this paper.

- The reported improvement still needs a closer check on benchmark scope, ablations, and whether the method keeps working outside the authors' evaluation setup.

Generation Models Know Space: Unleashing Implicit 3D Priors for Scene Understanding

TL;DR: A video diffusion model is repurposed as a latent world simulator to enhance multimodal large language models with implicit 3D structural priors and physical laws through spatiotemporal feature extraction and...

A video diffusion model is repurposed as a latent world simulator to enhance multimodal large language models with implicit 3D structural priors and physical laws through spatiotemporal feature extraction and semantic integration. While Multimodal Large Language Models...

Existing solutions typically rely on explicit 3D modalities or complex geometric scaffolding, which are limited by data scarcity and generalization challenges.

In this work, we propose a paradigm shift by leveraging the implicit spatial prior within large-scale video generation models.

While Multimodal Large Language Models demonstrate impressive semantic capabilities, they often suffer from spatial blindness , struggling with fine-grained geometric reasoning and physical dynamics.

The summary does not include concrete numbers, so the practical size of the gain and the tradeoff against latency or data cost are still unclear.

- Problem framing: Existing solutions typically rely on explicit 3D modalities or complex geometric scaffolding, which are limited by data scarcity and generalization challenges.

- Method signal: In this work, we propose a paradigm shift by leveraging the implicit spatial prior within large-scale video generation models.

- Evidence to watch: While Multimodal Large Language Models demonstrate impressive semantic capabilities, they often suffer from spatial blindness , struggling with fine-grained geometric reasoning and physical dynamics.

- Read-through priority: the PDF is available, so this is a good candidate for checking tables, ablations, and scaling tradeoffs beyond the abstract from Hugging Face Papers / arXiv.

- Problem: Existing solutions typically rely on explicit 3D modalities or complex geometric scaffolding, which are limited by data scarcity and generalization challenges.

- Approach: In this work, we propose a paradigm shift by leveraging the implicit spatial prior within large-scale video generation models.

- Result signal: While Multimodal Large Language Models demonstrate impressive semantic capabilities, they often suffer from spatial blindness , struggling with fine-grained geometric reasoning and physical dynamics.

- Community traction: Hugging Face Papers shows 55 votes for this paper.

- The summary does not include concrete numbers, so the practical size of the gain and the tradeoff against latency or data cost are still unclear.

SAMA: Factorized Semantic Anchoring and Motion Alignment for Instruction-Guided Video Editing

TL;DR: SAMA presents a factorized approach to video editing that separates semantic anchoring from motion modeling, enabling instruction-guided edits with preserved motion through pre-trained motion restoration tasks and...

SAMA presents a factorized approach to video editing that separates semantic anchoring from motion modeling, enabling instruction-guided edits with preserved motion through pre-trained motion restoration tasks and sparse anchor frame prediction. Current instruction-guided...

SAMA presents a factorized approach to video editing that separates semantic anchoring from motion modeling, enabling instruction-guided edits with preserved motion through pre-trained motion restoration tasks and sparse anchor frame prediction.

To overcome this limitation, we present SAMA (factorized Semantic Anchoring and Motion Alignment ), a framework that factorizes video editing into semantic anchoring and motion modeling.

SAMA achieves state-of-the-art performance among open-source models and is competitive with leading commercial systems (e.g., Kling-Omni).

The summary does not include concrete numbers, so the practical size of the gain and the tradeoff against latency or data cost are still unclear.

- Problem framing: SAMA presents a factorized approach to video editing that separates semantic anchoring from motion modeling, enabling instruction-guided edits with preserved motion through pre-trained motion restoration tasks and sparse anchor frame...

- Method signal: To overcome this limitation, we present SAMA (factorized Semantic Anchoring and Motion Alignment ), a framework that factorizes video editing into semantic anchoring and motion modeling.

- Evidence to watch: SAMA achieves state-of-the-art performance among open-source models and is competitive with leading commercial systems (e.g., Kling-Omni).

- Read-through priority: the PDF is available, so this is a good candidate for checking tables, ablations, and scaling tradeoffs beyond the abstract from Hugging Face Papers / arXiv.

- Problem: SAMA presents a factorized approach to video editing that separates semantic anchoring from motion modeling, enabling instruction-guided edits with preserved motion through pre-trained motion restoration...

- Approach: To overcome this limitation, we present SAMA (factorized Semantic Anchoring and Motion Alignment ), a framework that factorizes video editing into semantic anchoring and motion modeling.

- Result signal: SAMA achieves state-of-the-art performance among open-source models and is competitive with leading commercial systems (e.g., Kling-Omni).

- Community traction: Hugging Face Papers shows 21 votes for this paper.

- The summary does not include concrete numbers, so the practical size of the gain and the tradeoff against latency or data cost are still unclear.

Cubic Discrete Diffusion: Discrete Visual Generation on High-Dimensional Representation Tokens

TL;DR: CubiD is a discrete generation model for high-dimensional representations that enables fine-grained masking and learns rich correlations across spatial positions while maintaining fixed generation steps regardless of...

CubiD is a discrete generation model for high-dimensional representations that enables fine-grained masking and learns rich correlations across spatial positions while maintaining fixed generation steps regardless of feature dimensionality. Visual generation with discrete...

While high-dimensional pretrained representations (768-1024 dims) could bridge this gap, their discrete generation poses fundamental challenges.

In this paper, we present Cubic Discrete Diffusion (CubiD), the first discrete generation model for high-dimensional representations .

On ImageNet-256, CubiD achieves state-of-the-art discrete generation with strong scaling behavior from 900M to 3.7B parameters.

The reported improvement still needs a closer check on benchmark scope, ablations, and whether the method keeps working outside the authors' evaluation setup.

- Problem framing: While high-dimensional pretrained representations (768-1024 dims) could bridge this gap, their discrete generation poses fundamental challenges.

- Method signal: In this paper, we present Cubic Discrete Diffusion (CubiD), the first discrete generation model for high-dimensional representations .

- Evidence to watch: On ImageNet-256, CubiD achieves state-of-the-art discrete generation with strong scaling behavior from 900M to 3.7B parameters.

- Read-through priority: the PDF is available, so this is a good candidate for checking tables, ablations, and scaling tradeoffs beyond the abstract from Hugging Face Papers / arXiv.

- Problem: While high-dimensional pretrained representations (768-1024 dims) could bridge this gap, their discrete generation poses fundamental challenges.

- Approach: In this paper, we present Cubic Discrete Diffusion (CubiD), the first discrete generation model for high-dimensional representations .

- Result signal: On ImageNet-256, CubiD achieves state-of-the-art discrete generation with strong scaling behavior from 900M to 3.7B parameters.

- Community traction: Hugging Face Papers shows 21 votes for this paper.

- The reported improvement still needs a closer check on benchmark scope, ablations, and whether the method keeps working outside the authors' evaluation setup.

FASTER: Rethinking Real-Time Flow VLAs

TL;DR: Fast Action Sampling for ImmediaTE Reaction (FASTER) reduces real-time reaction latency in Vision-Language-Action models by adapting sampling schedules to prioritize immediate actions while maintaining long-horizon...

Fast Action Sampling for ImmediaTE Reaction (FASTER) reduces real-time reaction latency in Vision-Language-Action models by adapting sampling schedules to prioritize immediate actions while maintaining long-horizon trajectory quality. Real-time execution is crucial for...

Moreover, we reveal that the standard practice of applying a constant schedule in flow-based VLA s can be inefficient and forces the system to complete all sampling steps before any movement can start, forming the bottleneck in reaction latency.

By rethinking the notion of reaction in action chunking policies , this paper presents a systematic analysis of the factors governing reaction time .

Moreover, we reveal that the standard practice of applying a constant schedule in flow-based VLA s can be inefficient and forces the system to complete all sampling steps before any movement can start, forming the bottleneck in reaction latency.

The summary does not include concrete numbers, so the practical size of the gain and the tradeoff against latency or data cost are still unclear.

- Problem framing: Moreover, we reveal that the standard practice of applying a constant schedule in flow-based VLA s can be inefficient and forces the system to complete all sampling steps before any movement can start, forming the bottleneck in reaction...

- Method signal: By rethinking the notion of reaction in action chunking policies , this paper presents a systematic analysis of the factors governing reaction time .

- Evidence to watch: Moreover, we reveal that the standard practice of applying a constant schedule in flow-based VLA s can be inefficient and forces the system to complete all sampling steps before any movement can start, forming the bottleneck in reaction...

- Read-through priority: the PDF is available, so this is a good candidate for checking tables, ablations, and scaling tradeoffs beyond the abstract from Hugging Face Papers / arXiv.

- Problem: Moreover, we reveal that the standard practice of applying a constant schedule in flow-based VLA s can be inefficient and forces the system to complete all sampling steps before any movement can start,...

- Approach: By rethinking the notion of reaction in action chunking policies , this paper presents a systematic analysis of the factors governing reaction time .

- Result signal: Moreover, we reveal that the standard practice of applying a constant schedule in flow-based VLA s can be inefficient and forces the system to complete all sampling steps before any movement can start,...

- Community traction: Hugging Face Papers shows 34 votes for this paper.

- The summary does not include concrete numbers, so the practical size of the gain and the tradeoff against latency or data cost are still unclear.

Issue routing and exits.

The daily edition stays aligned with the rest of the site while keeping the full issue readable end to end.

Navigation

Public desks

Issue

- 03/19/2026

- 43 total analyzed

- Readable issue route