Daily Edition

The expanded edition keeps the full analyst notes, paper breakdowns, geopolitical framing, and the complete feed selected into this run.

Topic of the day.

A dedicated daily topic chosen from the strongest signals in the run, with TL;DR, why-now framing, and a fuller analyst read.

HopChain: Multi-Hop Data Synthesis for Generalizable Vision-Language Reasoning

TL;DR: HopChain generates multi-hop vision-language reasoning data to improve VLM long-chain reasoning, boosting performance across 20/24 benchmarks.

Why now: Recent VLMs show strong multimodal abilities but struggle with fine-grained reasoning; HopChain addresses the lack of complex reasoning chains in existing RLVR data.

The framework creates logically dependent chains of instance-grounded hops, ensuring each step builds on the previous, with final answer as a verifiable number. Experiments show adding HopChain data to RLVR improves generalization without targeting specific benchmarks, indicating broad gains. Ablation studies confirm full chains are critical, as removing them drops accuracy significantly.

- Scalable synthesis of multi-hop VL reasoning data

- Improves 20 out of 24 benchmarks on Qwen3.5 models

- Full chained queries essential; half/single variants reduce accuracy by 5.3–7.0 points

- Data is instance-grounded and reward‑verifiable

- HopChain: Multi-Hop Data Synthesis for Generalizable Vision-Language Reasoning (Hugging Face Papers / arXiv | 03/17/2026)

Policy, chips, capital, and power.

Industrial strategy, compute supply, export controls, and big-company positioning shaping the AI balance of power.

Mastercard keeps tabs on fraud with new foundation model

Mastercard has developed a large tabular model (an LTM as opposed to an LLM) that’s trained on transaction data rather than text or images to help it address security and authenticity issues in digital payments. The company has trained a foundation model on billions of card...

Mastercard keeps tabs on fraud with new foundation model matters because it affects the policy, supply-chain, or security constraints around AI development, especially across security, foundation, llm.

- Primary signals: security, foundation, llm.

- Source context: AI News published or updated this item on 03/18/2026.

A defense official reveals how AI chatbots could be used for targeting decisions

A defense official reveals how AI chatbots could be used for targeting decisions MIT Technology Review

A defense official reveals how AI chatbots could be used for targeting decisions matters because it affects the policy, supply-chain, or security constraints around AI development, especially across defense, chatbot.

- Primary signals: defense, chatbot.

- Source context: MIT Tech Review AI published or updated this item on 03/12/2026.

Holotron-12B - High Throughput Computer Use Agent

A Blog post by H company on Hugging Face

Holotron-12B - High Throughput Computer Use Agent matters because it affects the policy, supply-chain, or security constraints around AI development, especially across compute, agent.

- Primary signals: compute, agent.

- Source context: Hugging Face Blog published or updated this item on 03/17/2026.

Europe's AI paradox is record adoption that funds foreign ecosystems instead of building its own

Europe's AI paradox is record adoption that funds foreign ecosystems instead of building its own the-decoder.com

Europe's AI paradox is record adoption that funds foreign ecosystems instead of building its own matters because it affects the policy, supply-chain, or security constraints around AI development, especially across europe.

- Primary signals: europe.

- Source context: The Decoder published or updated this item on 03/21/2026.

The Pentagon is planning for AI companies to train on classified data, defense official says

The Pentagon is planning for AI companies to train on classified data, defense official says is one of the notable items tracked in today's digest.

The Pentagon is planning for AI companies to train on classified data, defense official says matters because it affects the policy, supply-chain, or security constraints around AI development, especially across defense.

- Primary signals: defense.

- Source context: Unknown source published or updated this item on 03/23/2026.

Product, model, and platform movement.

Software, model, deployment, and competitive stories with the strongest operator and market signal in this edition.

How we monitor internal coding agents for misalignment

OpenAI shares its approach to monitoring internal coding agents for misalignment.

Provides transparency on AI safety practices that can inform industry standards.

- Uses automated checks and human oversight to detect misaligned behavior

- Focuses on early detection during training

Introducing GPT-5.4 mini and nano

OpenAI releases smaller GPT-5.4 variants for broader deployment.

Smaller models enable edge deployment and lower latency applications.

- Mini and nano versions retain strong reasoning while reducing compute

- Targeted at developers needing lightweight LLMs

OpenAI to acquire Astral

OpenAI plans to acquire Astral to expand its AI capabilities.

Signals OpenAI's continued expansion via acquisitions to strengthen its product ecosystem.

- Acquisition may integrate Astral's technology into OpenAI's platform

- Potential to enhance model safety or tooling

13 Modern Reinforcement Learning Approaches for LLM Post-Training

13 Modern Reinforcement Learning Approaches for LLM Post-Training Turing Post

13 Modern Reinforcement Learning Approaches for LLM Post-Training matters because it signals momentum in llm, training and may shift how teams prioritize models, tooling, or deployment choices.

- Primary signals: llm, training.

- Source context: Turing Post published or updated this item on 03/22/2026.

Meet GitAgent: The Docker for AI Agents that is Finally Solving the Fragmentation between LangChain, AutoGen, and Claude Code

Meet GitAgent: The Docker for AI Agents that is Finally Solving the Fragmentation between LangChain, AutoGen, and Claude Code MarkTechPost

Meet GitAgent: The Docker for AI Agents that is Finally Solving the Fragmentation between LangChain, AutoGen, and Claude Code matters because it signals momentum in agent, agents and may shift how teams prioritize models, tooling, or deployment choices.

- Primary signals: agent, agents.

- Source context: MarkTechPost published or updated this item on 03/22/2026.

Differentiated source coverage.

Stories drawn from research blogs, first-party lab posts, practitioner newsletters, and selected technical outlets so the edition does not mirror the same headline across every source.

Identifying Interactions at Scale for LLMs

Understanding the behavior of complex machine learning systems, particularly Large Language Models (LLMs), is a critical challenge in modern artificial intelligence. Interpretability research aims to make the decision-making process more transparent to model builders and...

Identifying Interactions at Scale for LLMs matters because it signals momentum in llm, model and may shift how teams prioritize models, tooling, or deployment choices.

- Primary signals: llm, model.

- Source context: BAIR Blog published or updated this item on 03/13/2026.

State of Open Source on Hugging Face: Spring 2026

A Blog post by Hugging Face on Hugging Face

State of Open Source on Hugging Face: Spring 2026 matters because it affects the policy, supply-chain, or security constraints around AI development, especially across state.

- Primary signals: state.

- Source context: Hugging Face Blog published or updated this item on 03/17/2026.

OpenAI Model Craft: Parameter Golf

OpenAI Model Craft: Parameter Golf OpenAI

OpenAI Model Craft: Parameter Golf matters because it signals momentum in model and may shift how teams prioritize models, tooling, or deployment choices.

- Primary signals: model.

- Source context: OpenAI Research published or updated this item on 03/18/2026.

The persona selection model

The persona selection model Anthropic

The persona selection model matters because it signals momentum in model and may shift how teams prioritize models, tooling, or deployment choices.

- Primary signals: model.

- Source context: Anthropic Research published or updated this item on 02/23/2026.

Google AI Releases a CLI Tool (gws) for Workspace APIs: Providing a Unified Interface for Humans and AI Agents

Google AI Releases a CLI Tool (gws) for Workspace APIs: Providing a Unified Interface for Humans and AI Agents MarkTechPost

Google AI Releases a CLI Tool (gws) for Workspace APIs: Providing a Unified Interface for Humans and AI Agents matters because it signals momentum in agent, agents and may shift how teams prioritize models, tooling, or deployment choices.

- Primary signals: agent, agents.

- Source context: MarkTechPost published or updated this item on 03/05/2026.

Visa prepares payment systems for AI agent-initiated transactions

Payments rely on a simple model: a person decides to buy something, and a bank or card network processes the transaction. That model is starting to change as Visa tests how AI agents can initiate payments. New work in the banking sector suggests that, in some cases, software...

Visa prepares payment systems for AI agent-initiated transactions matters because it signals momentum in agent, agents, model and may shift how teams prioritize models, tooling, or deployment choices.

- Primary signals: agent, agents, model.

- Source context: AI News published or updated this item on 03/19/2026.

QuantumBlack: A Global Force in Agentic AI Transformation

QuantumBlack: A Global Force in Agentic AI Transformation AI Magazine

QuantumBlack: A Global Force in Agentic AI Transformation matters because it signals momentum in agent and may shift how teams prioritize models, tooling, or deployment choices.

- Primary signals: agent.

- Source context: AI Magazine published or updated this item on 03/16/2026.

The Pentagon is planning for AI companies to train on classified data, defense official says

The Pentagon is planning for AI companies to train on classified data, defense official says MIT Technology Review

The Pentagon is planning for AI companies to train on classified data, defense official says matters because it affects the policy, supply-chain, or security constraints around AI development, especially across defense.

- Primary signals: defense.

- Source context: MIT Tech Review AI published or updated this item on 03/17/2026.

Method, limitations, and results.

Paper summaries, methodology notes, limitations, and deep-dive bullets for the research items selected into the digest.

LumosX: Relate Any Identities with Their Attributes for Personalized Video Generation

TL;DR: LumosX framework enhances text-to-video generation through relational attention mechanisms and structured data pipelines for improved face-attribute alignment and subject consistency.

LumosX framework enhances text-to-video generation through relational attention mechanisms and structured data pipelines for improved face-attribute alignment and subject consistency. Recent advances in diffusion models have significantly improved text-to-video generation ,...

LumosX framework enhances text-to-video generation through relational attention mechanisms and structured data pipelines for improved face-attribute alignment and subject consistency.

On the modeling side, Relational Self-Attention and Relational Cross-Attention intertwine position-aware embeddings with refined attention dynamics to inscribe explicit subject-attribute dependencies , enforcing disciplined intra-group cohesion and amplifying the separation between distinct subject clusters.

LumosX framework enhances text-to-video generation through relational attention mechanisms and structured data pipelines for improved face-attribute alignment and subject consistency.

The summary does not include concrete numbers, so the practical size of the gain and the tradeoff against latency or data cost are still unclear.

- Problem framing: LumosX framework enhances text-to-video generation through relational attention mechanisms and structured data pipelines for improved face-attribute alignment and subject consistency.

- Method signal: On the modeling side, Relational Self-Attention and Relational Cross-Attention intertwine position-aware embeddings with refined attention dynamics to inscribe explicit subject-attribute dependencies , enforcing disciplined intra-group...

- Evidence to watch: LumosX framework enhances text-to-video generation through relational attention mechanisms and structured data pipelines for improved face-attribute alignment and subject consistency.

- Read-through priority: the PDF is available, so this is a good candidate for checking tables, ablations, and scaling tradeoffs beyond the abstract from Hugging Face Papers / arXiv.

- Problem: LumosX framework enhances text-to-video generation through relational attention mechanisms and structured data pipelines for improved face-attribute alignment and subject consistency.

- Approach: On the modeling side, Relational Self-Attention and Relational Cross-Attention intertwine position-aware embeddings with refined attention dynamics to inscribe explicit subject-attribute dependencies ,...

- Result signal: LumosX framework enhances text-to-video generation through relational attention mechanisms and structured data pipelines for improved face-attribute alignment and subject consistency.

- Community traction: Hugging Face Papers shows 14 votes for this paper.

- The summary does not include concrete numbers, so the practical size of the gain and the tradeoff against latency or data cost are still unclear.

ControlMLLM: Training-Free Visual Prompt Learning for Multimodal Large Language Models

TL;DR: In this work, we propose a training-free method to inject visual prompts into Multimodal Large Language Models (MLLMs) through learnable latent variable optimization.

In this work, we propose a training-free method to inject visual prompts into Multimodal Large Language Models (MLLMs) through learnable latent variable optimization. We observe that attention, as the core module of MLLMs, connects text prompt tokens and visual tokens,...

In this work, we propose a training-free method to inject visual prompts into Multimodal Large Language Models (MLLMs) through learnable latent variable optimization.

In this work, we propose a training-free method to inject visual prompts into Multimodal Large Language Models (MLLMs) through learnable latent variable optimization.

The results demonstrate that our method exhibits out-of-domain generalization and interpretability.

The abstract is promising, but we still need to inspect the full paper for compute cost, implementation complexity, and how broadly the gains transfer beyond the reported benchmarks.

- Problem framing: In this work, we propose a training-free method to inject visual prompts into Multimodal Large Language Models (MLLMs) through learnable latent variable optimization.

- Method signal: In this work, we propose a training-free method to inject visual prompts into Multimodal Large Language Models (MLLMs) through learnable latent variable optimization.

- Evidence to watch: The results demonstrate that our method exhibits out-of-domain generalization and interpretability.

- Read-through priority: the PDF is available, so this is a good candidate for checking tables, ablations, and scaling tradeoffs beyond the abstract from NeurIPS 2024.

- Problem: In this work, we propose a training-free method to inject visual prompts into Multimodal Large Language Models (MLLMs) through learnable latent variable optimization.

- Approach: In this work, we propose a training-free method to inject visual prompts into Multimodal Large Language Models (MLLMs) through learnable latent variable optimization.

- Result signal: The results demonstrate that our method exhibits out-of-domain generalization and interpretability.

- Conference context: NeurIPS 2024 Main Conference Track

- The abstract is promising, but we still need to inspect the full paper for compute cost, implementation complexity, and how broadly the gains transfer beyond the reported benchmarks.

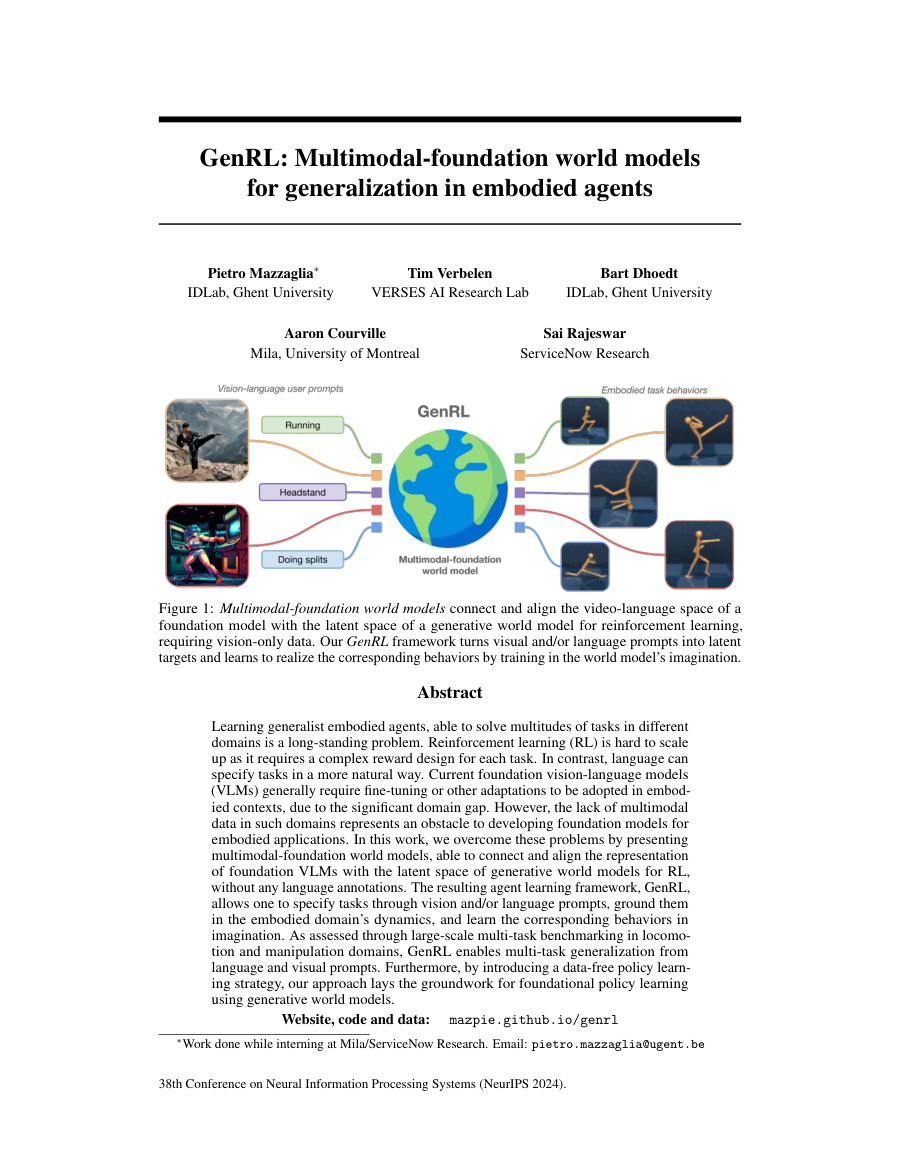

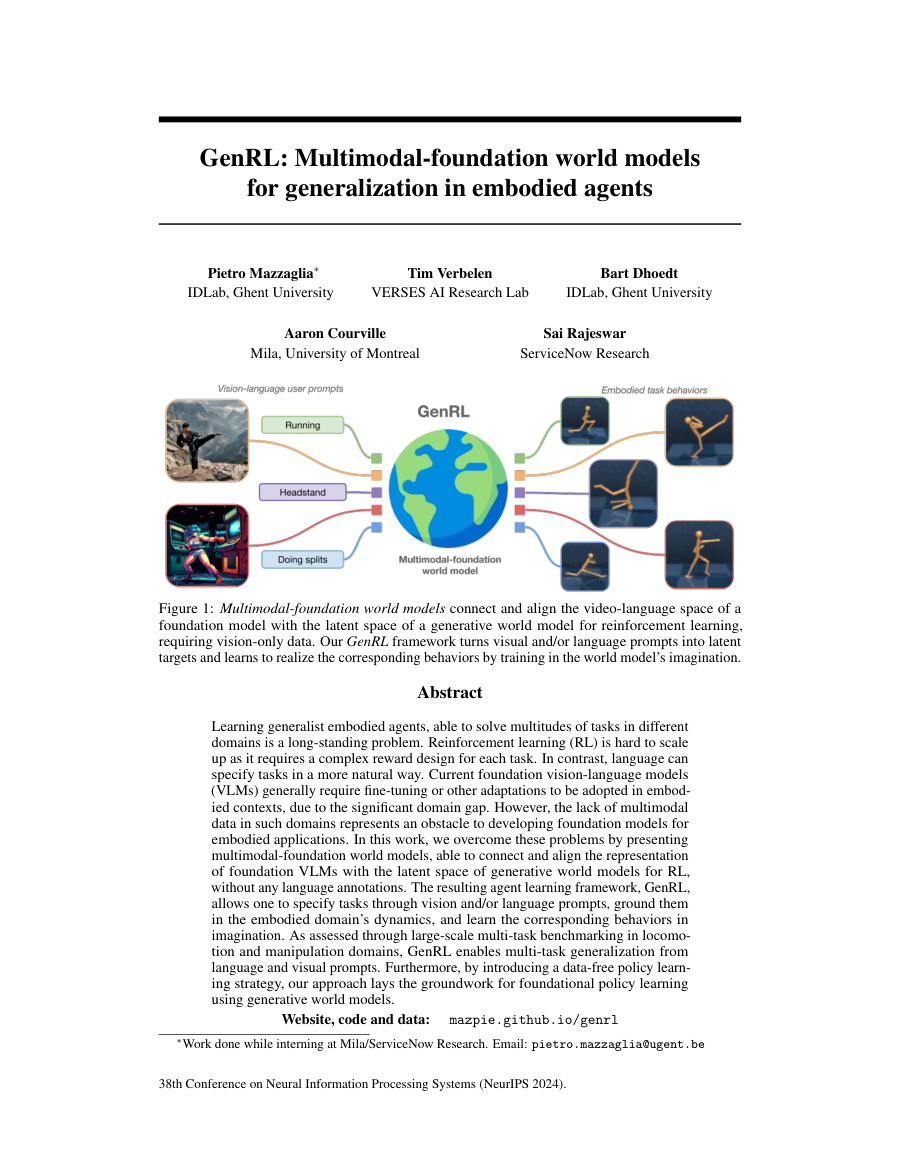

GenRL: Multimodal-foundation world models for generalization in embodied agents

TL;DR: Learning generalist embodied agents, able to solve multitudes of tasks in different domains is a long-standing problem.

Learning generalist embodied agents, able to solve multitudes of tasks in different domains is a long-standing problem. Reinforcement learning (RL) is hard to scale up as it requires a complex reward design for each task. In contrast, language can specify tasks in a more...

Learning generalist embodied agents, able to solve multitudes of tasks in different domains is a long-standing problem.

Furthermore, by introducing a data-free policy learning strategy, our approach lays the groundwork for foundational policy learning using generative world models.

Website, code and data: https://mazpie.github.io/genrl/

The abstract is promising, but we still need to inspect the full paper for compute cost, implementation complexity, and how broadly the gains transfer beyond the reported benchmarks.

- Problem framing: Learning generalist embodied agents, able to solve multitudes of tasks in different domains is a long-standing problem.

- Method signal: Furthermore, by introducing a data-free policy learning strategy, our approach lays the groundwork for foundational policy learning using generative world models.

- Evidence to watch: Website, code and data: https://mazpie.github.io/genrl/

- Read-through priority: the PDF is available, so this is a good candidate for checking tables, ablations, and scaling tradeoffs beyond the abstract from NeurIPS 2024.

- Problem: Learning generalist embodied agents, able to solve multitudes of tasks in different domains is a long-standing problem.

- Approach: Furthermore, by introducing a data-free policy learning strategy, our approach lays the groundwork for foundational policy learning using generative world models.

- Result signal: Website, code and data: https://mazpie.github.io/genrl/

- Conference context: NeurIPS 2024 Main Conference Track

- The abstract is promising, but we still need to inspect the full paper for compute cost, implementation complexity, and how broadly the gains transfer beyond the reported benchmarks.

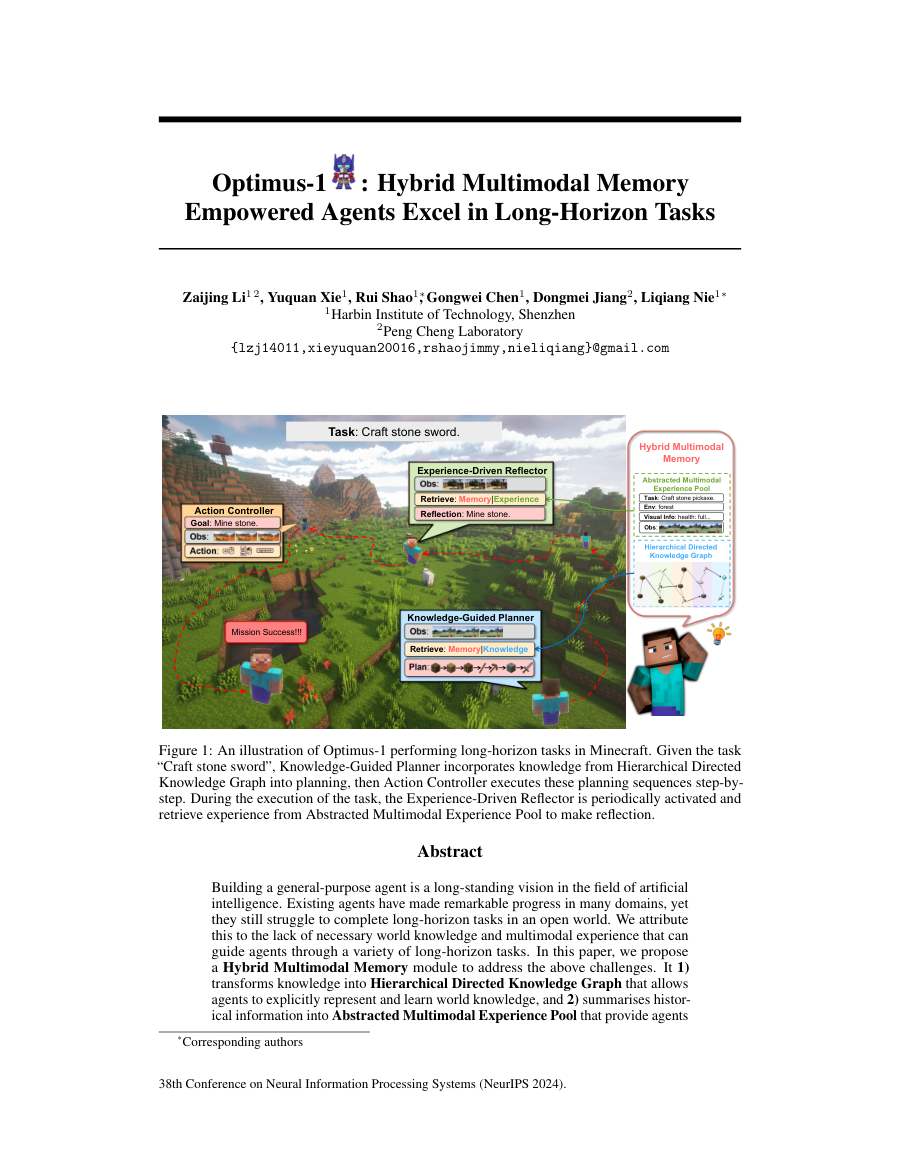

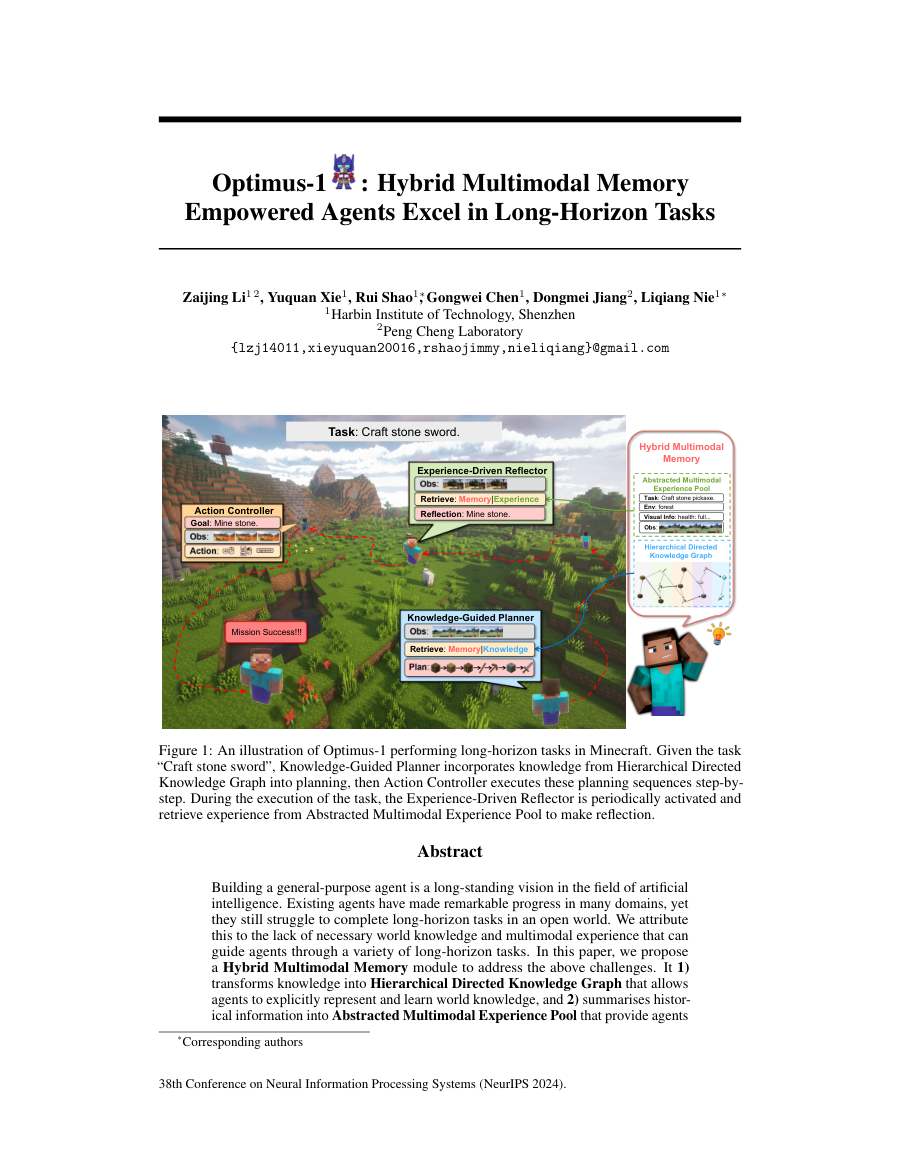

Optimus-1: Hybrid Multimodal Memory Empowered Agents Excel in Long-Horizon Tasks

TL;DR: Building a general-purpose agent is a long-standing vision in the field of artificial intelligence.

Building a general-purpose agent is a long-standing vision in the field of artificial intelligence. Existing agents have made remarkable progress in many domains, yet they still struggle to complete long-horizon tasks in an open world. We attribute this to the lack of...

Existing agents have made remarkable progress in many domains, yet they still struggle to complete long-horizon tasks in an open world.

In this paper, we propose a Hybrid Multimodal Memory module to address the above challenges.

Extensive experimental results show that Optimus-1 significantly outperforms all existing agents on challenging long-horizon task benchmarks, and exhibits near human-level performance on many tasks.

The abstract is promising, but we still need to inspect the full paper for compute cost, implementation complexity, and how broadly the gains transfer beyond the reported benchmarks.

- Problem framing: Existing agents have made remarkable progress in many domains, yet they still struggle to complete long-horizon tasks in an open world.

- Method signal: In this paper, we propose a Hybrid Multimodal Memory module to address the above challenges.

- Evidence to watch: Extensive experimental results show that Optimus-1 significantly outperforms all existing agents on challenging long-horizon task benchmarks, and exhibits near human-level performance on many tasks.

- Read-through priority: the PDF is available, so this is a good candidate for checking tables, ablations, and scaling tradeoffs beyond the abstract from NeurIPS 2024.

- Problem: Existing agents have made remarkable progress in many domains, yet they still struggle to complete long-horizon tasks in an open world.

- Approach: In this paper, we propose a Hybrid Multimodal Memory module to address the above challenges.

- Result signal: Extensive experimental results show that Optimus-1 significantly outperforms all existing agents on challenging long-horizon task benchmarks, and exhibits near human-level performance on many tasks.

- Conference context: NeurIPS 2024 Main Conference Track

- The abstract is promising, but we still need to inspect the full paper for compute cost, implementation complexity, and how broadly the gains transfer beyond the reported benchmarks.

Astrolabe: Steering Forward-Process Reinforcement Learning for Distilled Autoregressive Video Models

TL;DR: Astrolabe is an efficient online reinforcement learning framework for distilled autoregressive video models that improves generation quality through forward-process RL formulation and streaming training with...

Astrolabe is an efficient online reinforcement learning framework for distilled autoregressive video models that improves generation quality through forward-process RL formulation and streaming training with multi-reward objectives. Distilled autoregressive (AR) video models...

To overcome existing bottlenecks, we introduce a forward-process RL formulation based on negative-aware fine-tuning .

We present Astrolabe, an efficient online RL framework tailored for distilled AR models.

Astrolabe is an efficient online reinforcement learning framework for distilled autoregressive video models that improves generation quality through forward-process RL formulation and streaming training with multi-reward objectives.

The summary does not include concrete numbers, so the practical size of the gain and the tradeoff against latency or data cost are still unclear.

- Problem framing: To overcome existing bottlenecks, we introduce a forward-process RL formulation based on negative-aware fine-tuning .

- Method signal: We present Astrolabe, an efficient online RL framework tailored for distilled AR models.

- Evidence to watch: Astrolabe is an efficient online reinforcement learning framework for distilled autoregressive video models that improves generation quality through forward-process RL formulation and streaming training with multi-reward objectives.

- Read-through priority: the PDF is available, so this is a good candidate for checking tables, ablations, and scaling tradeoffs beyond the abstract from Hugging Face Papers / arXiv.

- Problem: To overcome existing bottlenecks, we introduce a forward-process RL formulation based on negative-aware fine-tuning .

- Approach: We present Astrolabe, an efficient online RL framework tailored for distilled AR models.

- Result signal: Astrolabe is an efficient online reinforcement learning framework for distilled autoregressive video models that improves generation quality through forward-process RL formulation and streaming...

- Community traction: Hugging Face Papers shows 21 votes for this paper.

- The summary does not include concrete numbers, so the practical size of the gain and the tradeoff against latency or data cost are still unclear.

Everything selected into the run.

The complete analyzed stream for the issue, useful when you want to scan the entire run instead of only the curated front page.

How we monitor internal coding agents for misalignment

OpenAI shares its approach to monitoring internal coding agents for misalignment.

Provides transparency on AI safety practices that can inform industry standards.

- Uses automated checks and human oversight to detect misaligned behavior

- Focuses on early detection during training

Introducing GPT-5.4 mini and nano

OpenAI releases smaller GPT-5.4 variants for broader deployment.

Smaller models enable edge deployment and lower latency applications.

- Mini and nano versions retain strong reasoning while reducing compute

- Targeted at developers needing lightweight LLMs

OpenAI to acquire Astral

OpenAI plans to acquire Astral to expand its AI capabilities.

Signals OpenAI's continued expansion via acquisitions to strengthen its product ecosystem.

- Acquisition may integrate Astral's technology into OpenAI's platform

- Potential to enhance model safety or tooling

13 Modern Reinforcement Learning Approaches for LLM Post-Training

13 Modern Reinforcement Learning Approaches for LLM Post-Training Turing Post

13 Modern Reinforcement Learning Approaches for LLM Post-Training matters because it signals momentum in llm, training and may shift how teams prioritize models, tooling, or deployment choices.

- Primary signals: llm, training.

- Source context: Turing Post published or updated this item on 03/22/2026.

Meet GitAgent: The Docker for AI Agents that is Finally Solving the Fragmentation between LangChain, AutoGen, and Claude Code

Meet GitAgent: The Docker for AI Agents that is Finally Solving the Fragmentation between LangChain, AutoGen, and Claude Code MarkTechPost

Meet GitAgent: The Docker for AI Agents that is Finally Solving the Fragmentation between LangChain, AutoGen, and Claude Code matters because it signals momentum in agent, agents and may shift how teams prioritize models, tooling, or deployment choices.

- Primary signals: agent, agents.

- Source context: MarkTechPost published or updated this item on 03/22/2026.

Visa prepares payment systems for AI agent-initiated transactions

Payments rely on a simple model: a person decides to buy something, and a bank or card network processes the transaction. That model is starting to change as Visa tests how AI agents can initiate payments. New work in the banking sector suggests that, in some cases, software...

Visa prepares payment systems for AI agent-initiated transactions matters because it signals momentum in agent, agents, model and may shift how teams prioritize models, tooling, or deployment choices.

- Primary signals: agent, agents, model.

- Source context: AI News published or updated this item on 03/19/2026.

Google AI Releases a CLI Tool (gws) for Workspace APIs: Providing a Unified Interface for Humans and AI Agents

Google AI Releases a CLI Tool (gws) for Workspace APIs: Providing a Unified Interface for Humans and AI Agents MarkTechPost

Google AI Releases a CLI Tool (gws) for Workspace APIs: Providing a Unified Interface for Humans and AI Agents matters because it signals momentum in agent, agents and may shift how teams prioritize models, tooling, or deployment choices.

- Primary signals: agent, agents.

- Source context: MarkTechPost published or updated this item on 03/05/2026.

The ‘Bayesian’ Upgrade: Why Google AI’s New Teaching Method is the Key to LLM Reasoning

The ‘Bayesian’ Upgrade: Why Google AI’s New Teaching Method is the Key to LLM Reasoning MarkTechPost

The ‘Bayesian’ Upgrade: Why Google AI’s New Teaching Method is the Key to LLM Reasoning matters because it signals momentum in llm, reasoning and may shift how teams prioritize models, tooling, or deployment choices.

- Primary signals: llm, reasoning.

- Source context: MarkTechPost published or updated this item on 03/09/2026.

Identifying Interactions at Scale for LLMs

Understanding the behavior of complex machine learning systems, particularly Large Language Models (LLMs), is a critical challenge in modern artificial intelligence. Interpretability research aims to make the decision-making process more transparent to model builders and...

Identifying Interactions at Scale for LLMs matters because it signals momentum in llm, model and may shift how teams prioritize models, tooling, or deployment choices.

- Primary signals: llm, model.

- Source context: BAIR Blog published or updated this item on 03/13/2026.

7 Emerging Memory Architectures for AI Agents

7 Emerging Memory Architectures for AI Agents Turing Post

7 Emerging Memory Architectures for AI Agents matters because it signals momentum in agent, agents and may shift how teams prioritize models, tooling, or deployment choices.

- Primary signals: agent, agents.

- Source context: Turing Post published or updated this item on 03/15/2026.

NTT DATA and NVIDIA bring enterprise AI factories to production scale

NTT DATA has announced an initiative to deliver NVIDIA-powered platforms designed to give organisations a repeatable, production-ready model for scaling AI. The offering integrates NVIDIA’s GPU-accelerated computing and high-performance networking with NVIDIA AI Enterprise...

NTT DATA and NVIDIA bring enterprise AI factories to production scale matters because it signals momentum in agent, model and may shift how teams prioritize models, tooling, or deployment choices.

- Primary signals: agent, model.

- Source context: AI News published or updated this item on 03/16/2026.

Trustpilot partners with AI companies as traditional search declines

Trustpilot is reported to be pursuing partnerships with large eCommerce companies as AI-driven shopping gains traction. In an interview with Bloomberg News [paywall], chief executive Adrian Blair said that AI agents acting on behalf of consumers require lots of information...

Trustpilot partners with AI companies as traditional search declines matters because it signals momentum in agent, agents and may shift how teams prioritize models, tooling, or deployment choices.

- Primary signals: agent, agents.

- Source context: AI News published or updated this item on 03/17/2026.

NVIDIA wants enterprise AI agents safer to deploy

The NVIDIA Agent Toolkit is Jensen Huang’s answer to the question enterprises keep asking: how do we put AI agents to work without losing control of our data and our liability? Announced at GTC 2026 in San Jose on March 16, the NVIDIA Agent Toolkit is an open-source software...

NVIDIA wants enterprise AI agents safer to deploy matters because it signals momentum in agent, agents and may shift how teams prioritize models, tooling, or deployment choices.

- Primary signals: agent, agents.

- Source context: AI News published or updated this item on 03/19/2026.

Safely Deploying ML Models to Production: Four Controlled Strategies (A/B, Canary, Interleaved, Shadow Testing)

Safely Deploying ML Models to Production: Four Controlled Strategies (A/B, Canary, Interleaved, Shadow Testing) MarkTechPost

Safely Deploying ML Models to Production: Four Controlled Strategies (A/B, Canary, Interleaved, Shadow Testing) matters because it signals momentum in model and may shift how teams prioritize models, tooling, or deployment choices.

- Primary signals: model.

- Source context: MarkTechPost published or updated this item on 03/21/2026.

Build a Domain-Specific Embedding Model in Under a Day

A Blog post by NVIDIA on Hugging Face

Build a Domain-Specific Embedding Model in Under a Day matters because it signals momentum in model and may shift how teams prioritize models, tooling, or deployment choices.

- Primary signals: model.

- Source context: Hugging Face Blog published or updated this item on 03/20/2026.

The persona selection model

The persona selection model Anthropic

The persona selection model matters because it signals momentum in model and may shift how teams prioritize models, tooling, or deployment choices.

- Primary signals: model.

- Source context: Anthropic Research published or updated this item on 02/23/2026.

An update on our model deprecation commitments for Claude Opus 3

An update on our model deprecation commitments for Claude Opus 3 Anthropic

An update on our model deprecation commitments for Claude Opus 3 matters because it signals momentum in model and may shift how teams prioritize models, tooling, or deployment choices.

- Primary signals: model.

- Source context: Anthropic Research published or updated this item on 02/25/2026.

Inside Reflection AI: The $20B Open-Model Startup That Has Yet to Ship

Inside Reflection AI: The $20B Open-Model Startup That Has Yet to Ship Turing Post

Inside Reflection AI: The $20B Open-Model Startup That Has Yet to Ship matters because it signals momentum in model and may shift how teams prioritize models, tooling, or deployment choices.

- Primary signals: model.

- Source context: Turing Post published or updated this item on 03/08/2026.

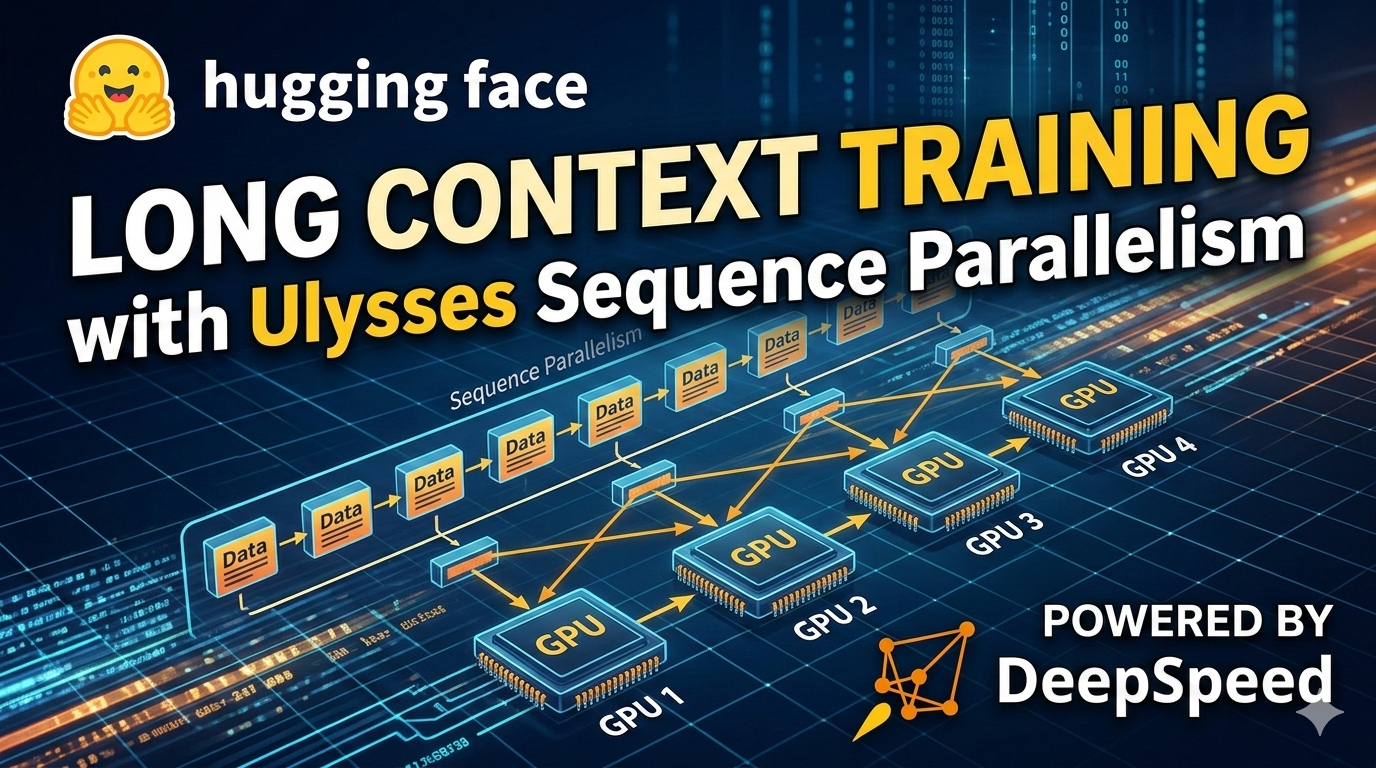

Ulysses Sequence Parallelism: Training with Million-Token Contexts

We’re on a journey to advance and democratize artificial intelligence through open source and open science.

Ulysses Sequence Parallelism: Training with Million-Token Contexts matters because it signals momentum in training and may shift how teams prioritize models, tooling, or deployment choices.

- Primary signals: training.

- Source context: Hugging Face Blog published or updated this item on 03/09/2026.

QuantumBlack: A Global Force in Agentic AI Transformation

QuantumBlack: A Global Force in Agentic AI Transformation AI Magazine

QuantumBlack: A Global Force in Agentic AI Transformation matters because it signals momentum in agent and may shift how teams prioritize models, tooling, or deployment choices.

- Primary signals: agent.

- Source context: AI Magazine published or updated this item on 03/16/2026.

FOD#144: New Scaling Law? What “Agentic Scaling" Is – Inside NVIDIA’s Biggest Idea at GTC 2026

FOD#144: New Scaling Law? What “Agentic Scaling" Is – Inside NVIDIA’s Biggest Idea at GTC 2026 Turing Post

FOD#144: New Scaling Law? What “Agentic Scaling" Is – Inside NVIDIA’s Biggest Idea at GTC 2026 matters because it signals momentum in agent and may shift how teams prioritize models, tooling, or deployment choices.

- Primary signals: agent.

- Source context: Turing Post published or updated this item on 03/17/2026.

OpenAI Model Craft: Parameter Golf

OpenAI Model Craft: Parameter Golf OpenAI

OpenAI Model Craft: Parameter Golf matters because it signals momentum in model and may shift how teams prioritize models, tooling, or deployment choices.

- Primary signals: model.

- Source context: OpenAI Research published or updated this item on 03/18/2026.

Nvidia CEO Jensen Huang says he'd be "deeply alarmed" if a $500K developer spent less than $250K on AI tokens

Nvidia CEO Jensen Huang says he'd be "deeply alarmed" if a $500K developer spent less than $250K on AI tokens the-decoder.com

Nvidia CEO Jensen Huang says he'd be "deeply alarmed" if a $500K developer spent less than $250K on AI tokens matters because it signals momentum in the broader AI ecosystem and may shift how teams prioritize models, tooling, or deployment choices.

- Primary signals: AI platforms and product execution.

- Source context: The Decoder published or updated this item on 03/21/2026.

This Week's Top Five Stories in AI

This Week's Top Five Stories in AI AI Magazine

This Week's Top Five Stories in AI matters because it signals momentum in the broader AI ecosystem and may shift how teams prioritize models, tooling, or deployment choices.

- Primary signals: AI platforms and product execution.

- Source context: AI Magazine published or updated this item on 03/21/2026.

OpenAI is throwing everything into building a fully automated researcher

OpenAI is throwing everything into building a fully automated researcher MIT Technology Review

OpenAI is throwing everything into building a fully automated researcher matters because it signals momentum in the broader AI ecosystem and may shift how teams prioritize models, tooling, or deployment choices.

- Primary signals: AI platforms and product execution.

- Source context: MIT Tech Review AI published or updated this item on 03/20/2026.

What's New in Mellea 0.4.0 + Granite Libraries Release

A Blog post by IBM Granite on Hugging Face

What's New in Mellea 0.4.0 + Granite Libraries Release matters because it signals momentum in the broader AI ecosystem and may shift how teams prioritize models, tooling, or deployment choices.

- Primary signals: AI platforms and product execution.

- Source context: Hugging Face Blog published or updated this item on 03/20/2026.

Anthropic Education Report: The AI Fluency Index

Anthropic Education Report: The AI Fluency Index Anthropic

Anthropic Education Report: The AI Fluency Index matters because it signals momentum in the broader AI ecosystem and may shift how teams prioritize models, tooling, or deployment choices.

- Primary signals: AI platforms and product execution.

- Source context: Anthropic Research published or updated this item on 02/23/2026.

Labor market impacts of AI: A new measure and early evidence

Labor market impacts of AI: A new measure and early evidence Anthropic

Labor market impacts of AI: A new measure and early evidence matters because it signals momentum in the broader AI ecosystem and may shift how teams prioritize models, tooling, or deployment choices.

- Primary signals: AI platforms and product execution.

- Source context: Anthropic Research published or updated this item on 03/05/2026.

LeRobot v0.5.0: Scaling Every Dimension

We’re on a journey to advance and democratize artificial intelligence through open source and open science.

LeRobot v0.5.0: Scaling Every Dimension matters because it signals momentum in the broader AI ecosystem and may shift how teams prioritize models, tooling, or deployment choices.

- Primary signals: AI platforms and product execution.

- Source context: Hugging Face Blog published or updated this item on 03/09/2026.

OpenAI to acquire Promptfoo

OpenAI to acquire Promptfoo OpenAI

OpenAI to acquire Promptfoo matters because it signals momentum in the broader AI ecosystem and may shift how teams prioritize models, tooling, or deployment choices.

- Primary signals: AI platforms and product execution.

- Source context: OpenAI Research published or updated this item on 03/09/2026.

How Pokémon Go is giving delivery robots an inch-perfect view of the world

How Pokémon Go is giving delivery robots an inch-perfect view of the world MIT Technology Review

How Pokémon Go is giving delivery robots an inch-perfect view of the world matters because it signals momentum in the broader AI ecosystem and may shift how teams prioritize models, tooling, or deployment choices.

- Primary signals: AI platforms and product execution.

- Source context: MIT Tech Review AI published or updated this item on 03/10/2026.

Introducing Storage Buckets on the Hugging Face Hub

We’re on a journey to advance and democratize artificial intelligence through open source and open science.

Introducing Storage Buckets on the Hugging Face Hub matters because it signals momentum in the broader AI ecosystem and may shift how teams prioritize models, tooling, or deployment choices.

- Primary signals: AI platforms and product execution.

- Source context: Hugging Face Blog published or updated this item on 03/10/2026.

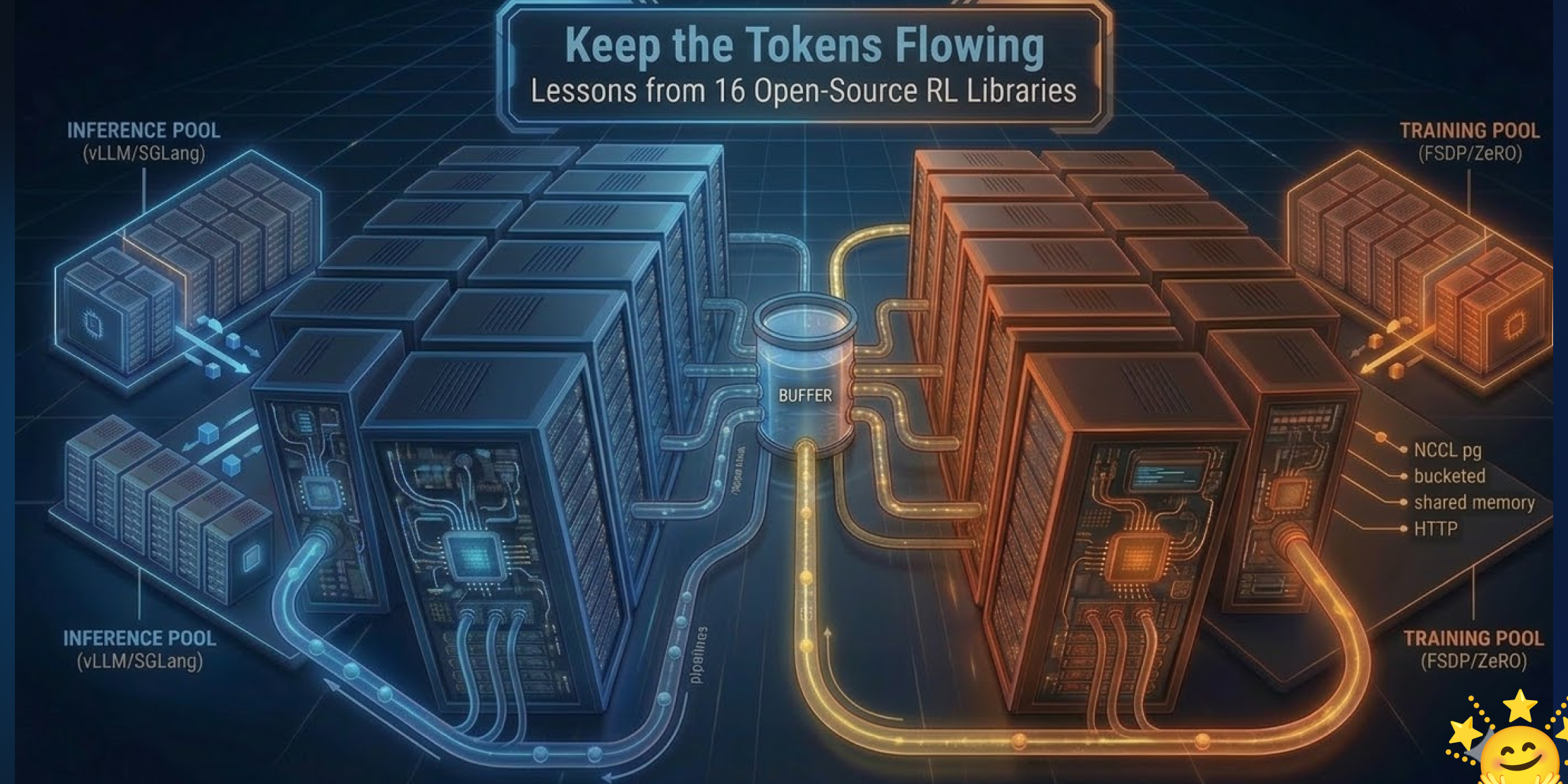

Keep the Tokens Flowing: Lessons from 16 Open-Source RL Libraries

We’re on a journey to advance and democratize artificial intelligence through open source and open science.

Keep the Tokens Flowing: Lessons from 16 Open-Source RL Libraries matters because it signals momentum in the broader AI ecosystem and may shift how teams prioritize models, tooling, or deployment choices.

- Primary signals: AI platforms and product execution.

- Source context: Hugging Face Blog published or updated this item on 03/10/2026.

Meta signs $27 billion cloud deal with Nebius in one of the largest AI infrastructure bets yet

Meta signs $27 billion cloud deal with Nebius in one of the largest AI infrastructure bets yet the-decoder.com

Meta signs $27 billion cloud deal with Nebius in one of the largest AI infrastructure bets yet matters because it signals momentum in the broader AI ecosystem and may shift how teams prioritize models, tooling, or deployment choices.

- Primary signals: AI platforms and product execution.

- Source context: The Decoder published or updated this item on 03/16/2026.

Where OpenAI’s technology could show up in Iran

Where OpenAI’s technology could show up in Iran MIT Technology Review

Where OpenAI’s technology could show up in Iran matters because it signals momentum in the broader AI ecosystem and may shift how teams prioritize models, tooling, or deployment choices.

- Primary signals: AI platforms and product execution.

- Source context: MIT Tech Review AI published or updated this item on 03/16/2026.

Goldman Sachs sees AI investment shift to data centres

Artificial intelligence investment is entering a more selective phase as companies and investors look beyond early excitement and focus on the data centre infrastructure required to run AI systems. Recent analysis from Goldman Sachs suggests the market is moving toward what...

Goldman Sachs sees AI investment shift to data centres matters because it signals momentum in the broader AI ecosystem and may shift how teams prioritize models, tooling, or deployment choices.

- Primary signals: AI platforms and product execution.

- Source context: AI News published or updated this item on 03/17/2026.

For effective AI, insurance needs to get its data house in order

A report from Autorek, a provider of AI solutions to the insurance industry has produced a report that describes operational drag in companies’ internal processes that not only affect overall efficiency but cause an impediment to the effective implementation of AI in...

For effective AI, insurance needs to get its data house in order matters because it signals momentum in the broader AI ecosystem and may shift how teams prioritize models, tooling, or deployment choices.

- Primary signals: AI platforms and product execution.

- Source context: AI News published or updated this item on 03/18/2026.

Google Labs turns Stitch into a full AI design platform that converts plain text into user interfaces

Google Labs turns Stitch into a full AI design platform that converts plain text into user interfaces the-decoder.com

Google Labs turns Stitch into a full AI design platform that converts plain text into user interfaces matters because it signals momentum in the broader AI ecosystem and may shift how teams prioritize models, tooling, or deployment choices.

- Primary signals: AI platforms and product execution.

- Source context: The Decoder published or updated this item on 03/18/2026.

How Apple's US$600bn US Investment Helps AI Infrastructure

How Apple's US$600bn US Investment Helps AI Infrastructure AI Magazine

How Apple's US$600bn US Investment Helps AI Infrastructure matters because it signals momentum in the broader AI ecosystem and may shift how teams prioritize models, tooling, or deployment choices.

- Primary signals: AI platforms and product execution.

- Source context: AI Magazine published or updated this item on 03/18/2026.

Top 10: AI Platforms for Retail

Top 10: AI Platforms for Retail AI Magazine

Top 10: AI Platforms for Retail matters because it signals momentum in the broader AI ecosystem and may shift how teams prioritize models, tooling, or deployment choices.

- Primary signals: AI platforms and product execution.

- Source context: AI Magazine published or updated this item on 03/18/2026.

Multiply raises $9.5m for self-learning ads, reports 300%-500% pipeline increase for B2B companies

Multiply raises $9.5m for self-learning ads, reports 300%-500% pipeline increase for B2B companies AI Magazine

Multiply raises $9.5m for self-learning ads, reports 300%-500% pipeline increase for B2B companies matters because it signals momentum in the broader AI ecosystem and may shift how teams prioritize models, tooling, or deployment choices.

- Primary signals: AI platforms and product execution.

- Source context: AI Magazine published or updated this item on 03/19/2026.

Mastercard keeps tabs on fraud with new foundation model

Mastercard has developed a large tabular model (an LTM as opposed to an LLM) that’s trained on transaction data rather than text or images to help it address security and authenticity issues in digital payments. The company has trained a foundation model on billions of card...

Mastercard keeps tabs on fraud with new foundation model matters because it affects the policy, supply-chain, or security constraints around AI development, especially across security, foundation, llm.

- Primary signals: security, foundation, llm.

- Source context: AI News published or updated this item on 03/18/2026.

A defense official reveals how AI chatbots could be used for targeting decisions

A defense official reveals how AI chatbots could be used for targeting decisions MIT Technology Review

A defense official reveals how AI chatbots could be used for targeting decisions matters because it affects the policy, supply-chain, or security constraints around AI development, especially across defense, chatbot.

- Primary signals: defense, chatbot.

- Source context: MIT Tech Review AI published or updated this item on 03/12/2026.

Holotron-12B - High Throughput Computer Use Agent

A Blog post by H company on Hugging Face

Holotron-12B - High Throughput Computer Use Agent matters because it affects the policy, supply-chain, or security constraints around AI development, especially across compute, agent.

- Primary signals: compute, agent.

- Source context: Hugging Face Blog published or updated this item on 03/17/2026.

Europe's AI paradox is record adoption that funds foreign ecosystems instead of building its own

Europe's AI paradox is record adoption that funds foreign ecosystems instead of building its own the-decoder.com

Europe's AI paradox is record adoption that funds foreign ecosystems instead of building its own matters because it affects the policy, supply-chain, or security constraints around AI development, especially across europe.

- Primary signals: europe.

- Source context: The Decoder published or updated this item on 03/21/2026.

The Pentagon is planning for AI companies to train on classified data, defense official says

The Pentagon is planning for AI companies to train on classified data, defense official says is one of the notable items tracked in today's digest.

The Pentagon is planning for AI companies to train on classified data, defense official says matters because it affects the policy, supply-chain, or security constraints around AI development, especially across defense.

- Primary signals: defense.

- Source context: Unknown source published or updated this item on 03/23/2026.

US Treasury publishes AI risk Guidebook for financial institutions

The US Treasury has published several documents designed for the US financial services sector that suggest a structured approach to managing AI risks in operations and policy (see subheading ‘Resources and Downloads’ towards the bottom of the link). The CRI Financial Services...

US Treasury publishes AI risk Guidebook for financial institutions matters because it affects the policy, supply-chain, or security constraints around AI development, especially across policy.

- Primary signals: policy.

- Source context: AI News published or updated this item on 03/16/2026.

State of Open Source on Hugging Face: Spring 2026

A Blog post by Hugging Face on Hugging Face

State of Open Source on Hugging Face: Spring 2026 matters because it affects the policy, supply-chain, or security constraints around AI development, especially across state.

- Primary signals: state.

- Source context: Hugging Face Blog published or updated this item on 03/17/2026.

The Pentagon is planning for AI companies to train on classified data, defense official says

The Pentagon is planning for AI companies to train on classified data, defense official says MIT Technology Review

The Pentagon is planning for AI companies to train on classified data, defense official says matters because it affects the policy, supply-chain, or security constraints around AI development, especially across defense.

- Primary signals: defense.

- Source context: MIT Tech Review AI published or updated this item on 03/17/2026.

ControlMLLM: Training-Free Visual Prompt Learning for Multimodal Large Language Models

TL;DR: In this work, we propose a training-free method to inject visual prompts into Multimodal Large Language Models (MLLMs) through learnable latent variable optimization.

In this work, we propose a training-free method to inject visual prompts into Multimodal Large Language Models (MLLMs) through learnable latent variable optimization. We observe that attention, as the core module of MLLMs, connects text prompt tokens and visual tokens,...

In this work, we propose a training-free method to inject visual prompts into Multimodal Large Language Models (MLLMs) through learnable latent variable optimization.

In this work, we propose a training-free method to inject visual prompts into Multimodal Large Language Models (MLLMs) through learnable latent variable optimization.

The results demonstrate that our method exhibits out-of-domain generalization and interpretability.

The abstract is promising, but we still need to inspect the full paper for compute cost, implementation complexity, and how broadly the gains transfer beyond the reported benchmarks.

- Problem framing: In this work, we propose a training-free method to inject visual prompts into Multimodal Large Language Models (MLLMs) through learnable latent variable optimization.

- Method signal: In this work, we propose a training-free method to inject visual prompts into Multimodal Large Language Models (MLLMs) through learnable latent variable optimization.

- Evidence to watch: The results demonstrate that our method exhibits out-of-domain generalization and interpretability.

- Read-through priority: the PDF is available, so this is a good candidate for checking tables, ablations, and scaling tradeoffs beyond the abstract from NeurIPS 2024.

- Problem: In this work, we propose a training-free method to inject visual prompts into Multimodal Large Language Models (MLLMs) through learnable latent variable optimization.

- Approach: In this work, we propose a training-free method to inject visual prompts into Multimodal Large Language Models (MLLMs) through learnable latent variable optimization.

- Result signal: The results demonstrate that our method exhibits out-of-domain generalization and interpretability.

- Conference context: NeurIPS 2024 Main Conference Track

- The abstract is promising, but we still need to inspect the full paper for compute cost, implementation complexity, and how broadly the gains transfer beyond the reported benchmarks.

GenRL: Multimodal-foundation world models for generalization in embodied agents

TL;DR: Learning generalist embodied agents, able to solve multitudes of tasks in different domains is a long-standing problem.

Learning generalist embodied agents, able to solve multitudes of tasks in different domains is a long-standing problem. Reinforcement learning (RL) is hard to scale up as it requires a complex reward design for each task. In contrast, language can specify tasks in a more...

Learning generalist embodied agents, able to solve multitudes of tasks in different domains is a long-standing problem.

Furthermore, by introducing a data-free policy learning strategy, our approach lays the groundwork for foundational policy learning using generative world models.

Website, code and data: https://mazpie.github.io/genrl/

The abstract is promising, but we still need to inspect the full paper for compute cost, implementation complexity, and how broadly the gains transfer beyond the reported benchmarks.

- Problem framing: Learning generalist embodied agents, able to solve multitudes of tasks in different domains is a long-standing problem.

- Method signal: Furthermore, by introducing a data-free policy learning strategy, our approach lays the groundwork for foundational policy learning using generative world models.

- Evidence to watch: Website, code and data: https://mazpie.github.io/genrl/

- Read-through priority: the PDF is available, so this is a good candidate for checking tables, ablations, and scaling tradeoffs beyond the abstract from NeurIPS 2024.

- Problem: Learning generalist embodied agents, able to solve multitudes of tasks in different domains is a long-standing problem.

- Approach: Furthermore, by introducing a data-free policy learning strategy, our approach lays the groundwork for foundational policy learning using generative world models.

- Result signal: Website, code and data: https://mazpie.github.io/genrl/

- Conference context: NeurIPS 2024 Main Conference Track

- The abstract is promising, but we still need to inspect the full paper for compute cost, implementation complexity, and how broadly the gains transfer beyond the reported benchmarks.

Optimus-1: Hybrid Multimodal Memory Empowered Agents Excel in Long-Horizon Tasks

TL;DR: Building a general-purpose agent is a long-standing vision in the field of artificial intelligence.

Building a general-purpose agent is a long-standing vision in the field of artificial intelligence. Existing agents have made remarkable progress in many domains, yet they still struggle to complete long-horizon tasks in an open world. We attribute this to the lack of...

Existing agents have made remarkable progress in many domains, yet they still struggle to complete long-horizon tasks in an open world.

In this paper, we propose a Hybrid Multimodal Memory module to address the above challenges.

Extensive experimental results show that Optimus-1 significantly outperforms all existing agents on challenging long-horizon task benchmarks, and exhibits near human-level performance on many tasks.

The abstract is promising, but we still need to inspect the full paper for compute cost, implementation complexity, and how broadly the gains transfer beyond the reported benchmarks.

- Problem framing: Existing agents have made remarkable progress in many domains, yet they still struggle to complete long-horizon tasks in an open world.

- Method signal: In this paper, we propose a Hybrid Multimodal Memory module to address the above challenges.

- Evidence to watch: Extensive experimental results show that Optimus-1 significantly outperforms all existing agents on challenging long-horizon task benchmarks, and exhibits near human-level performance on many tasks.

- Read-through priority: the PDF is available, so this is a good candidate for checking tables, ablations, and scaling tradeoffs beyond the abstract from NeurIPS 2024.

- Problem: Existing agents have made remarkable progress in many domains, yet they still struggle to complete long-horizon tasks in an open world.

- Approach: In this paper, we propose a Hybrid Multimodal Memory module to address the above challenges.

- Result signal: Extensive experimental results show that Optimus-1 significantly outperforms all existing agents on challenging long-horizon task benchmarks, and exhibits near human-level performance on many tasks.

- Conference context: NeurIPS 2024 Main Conference Track

- The abstract is promising, but we still need to inspect the full paper for compute cost, implementation complexity, and how broadly the gains transfer beyond the reported benchmarks.

LumosX: Relate Any Identities with Their Attributes for Personalized Video Generation

TL;DR: LumosX framework enhances text-to-video generation through relational attention mechanisms and structured data pipelines for improved face-attribute alignment and subject consistency.

LumosX framework enhances text-to-video generation through relational attention mechanisms and structured data pipelines for improved face-attribute alignment and subject consistency. Recent advances in diffusion models have significantly improved text-to-video generation ,...

LumosX framework enhances text-to-video generation through relational attention mechanisms and structured data pipelines for improved face-attribute alignment and subject consistency.

On the modeling side, Relational Self-Attention and Relational Cross-Attention intertwine position-aware embeddings with refined attention dynamics to inscribe explicit subject-attribute dependencies , enforcing disciplined intra-group cohesion and amplifying the separation between distinct subject clusters.

LumosX framework enhances text-to-video generation through relational attention mechanisms and structured data pipelines for improved face-attribute alignment and subject consistency.

The summary does not include concrete numbers, so the practical size of the gain and the tradeoff against latency or data cost are still unclear.

- Problem framing: LumosX framework enhances text-to-video generation through relational attention mechanisms and structured data pipelines for improved face-attribute alignment and subject consistency.

- Method signal: On the modeling side, Relational Self-Attention and Relational Cross-Attention intertwine position-aware embeddings with refined attention dynamics to inscribe explicit subject-attribute dependencies , enforcing disciplined intra-group...

- Evidence to watch: LumosX framework enhances text-to-video generation through relational attention mechanisms and structured data pipelines for improved face-attribute alignment and subject consistency.

- Read-through priority: the PDF is available, so this is a good candidate for checking tables, ablations, and scaling tradeoffs beyond the abstract from Hugging Face Papers / arXiv.

- Problem: LumosX framework enhances text-to-video generation through relational attention mechanisms and structured data pipelines for improved face-attribute alignment and subject consistency.

- Approach: On the modeling side, Relational Self-Attention and Relational Cross-Attention intertwine position-aware embeddings with refined attention dynamics to inscribe explicit subject-attribute dependencies ,...

- Result signal: LumosX framework enhances text-to-video generation through relational attention mechanisms and structured data pipelines for improved face-attribute alignment and subject consistency.

- Community traction: Hugging Face Papers shows 14 votes for this paper.

- The summary does not include concrete numbers, so the practical size of the gain and the tradeoff against latency or data cost are still unclear.

Astrolabe: Steering Forward-Process Reinforcement Learning for Distilled Autoregressive Video Models

TL;DR: Astrolabe is an efficient online reinforcement learning framework for distilled autoregressive video models that improves generation quality through forward-process RL formulation and streaming training with...

Astrolabe is an efficient online reinforcement learning framework for distilled autoregressive video models that improves generation quality through forward-process RL formulation and streaming training with multi-reward objectives. Distilled autoregressive (AR) video models...

To overcome existing bottlenecks, we introduce a forward-process RL formulation based on negative-aware fine-tuning .

We present Astrolabe, an efficient online RL framework tailored for distilled AR models.

Astrolabe is an efficient online reinforcement learning framework for distilled autoregressive video models that improves generation quality through forward-process RL formulation and streaming training with multi-reward objectives.

The summary does not include concrete numbers, so the practical size of the gain and the tradeoff against latency or data cost are still unclear.

- Problem framing: To overcome existing bottlenecks, we introduce a forward-process RL formulation based on negative-aware fine-tuning .

- Method signal: We present Astrolabe, an efficient online RL framework tailored for distilled AR models.

- Evidence to watch: Astrolabe is an efficient online reinforcement learning framework for distilled autoregressive video models that improves generation quality through forward-process RL formulation and streaming training with multi-reward objectives.

- Read-through priority: the PDF is available, so this is a good candidate for checking tables, ablations, and scaling tradeoffs beyond the abstract from Hugging Face Papers / arXiv.

- Problem: To overcome existing bottlenecks, we introduce a forward-process RL formulation based on negative-aware fine-tuning .

- Approach: We present Astrolabe, an efficient online RL framework tailored for distilled AR models.

- Result signal: Astrolabe is an efficient online reinforcement learning framework for distilled autoregressive video models that improves generation quality through forward-process RL formulation and streaming...

- Community traction: Hugging Face Papers shows 21 votes for this paper.

- The summary does not include concrete numbers, so the practical size of the gain and the tradeoff against latency or data cost are still unclear.

HopChain: Multi-Hop Data Synthesis for Generalizable Vision-Language Reasoning

TL;DR: HopChain is a scalable framework that generates multi-hop vision-language reasoning data to enhance VLMs' long-chain reasoning capabilities across diverse benchmarks.

HopChain is a scalable framework that generates multi-hop vision-language reasoning data to enhance VLMs' long-chain reasoning capabilities across diverse benchmarks. VLMs show strong multimodal capabilities , but they still struggle with fine-grained vision-language...

HopChain is a scalable framework that generates multi-hop vision-language reasoning data to enhance VLMs' long-chain reasoning capabilities across diverse benchmarks.

VLMs show strong multimodal capabilities , but they still struggle with fine-grained vision-language reasoning.

HopChain is a scalable framework that generates multi-hop vision-language reasoning data to enhance VLMs' long-chain reasoning capabilities across diverse benchmarks.

The summary does not include concrete numbers, so the practical size of the gain and the tradeoff against latency or data cost are still unclear.

- Problem framing: HopChain is a scalable framework that generates multi-hop vision-language reasoning data to enhance VLMs' long-chain reasoning capabilities across diverse benchmarks.

- Method signal: VLMs show strong multimodal capabilities , but they still struggle with fine-grained vision-language reasoning.

- Evidence to watch: HopChain is a scalable framework that generates multi-hop vision-language reasoning data to enhance VLMs' long-chain reasoning capabilities across diverse benchmarks.

- Read-through priority: the PDF is available, so this is a good candidate for checking tables, ablations, and scaling tradeoffs beyond the abstract from Hugging Face Papers / arXiv.

- Problem: HopChain is a scalable framework that generates multi-hop vision-language reasoning data to enhance VLMs' long-chain reasoning capabilities across diverse benchmarks.

- Approach: VLMs show strong multimodal capabilities , but they still struggle with fine-grained vision-language reasoning.

- Result signal: HopChain is a scalable framework that generates multi-hop vision-language reasoning data to enhance VLMs' long-chain reasoning capabilities across diverse benchmarks.

- Community traction: Hugging Face Papers shows 60 votes for this paper.

- The summary does not include concrete numbers, so the practical size of the gain and the tradeoff against latency or data cost are still unclear.

A Subgoal-driven Framework for Improving Long-Horizon LLM Agents

TL;DR: LLM-based agents for web navigation face challenges in long-horizon planning and reinforcement learning fine-tuning, which are addressed through subgoal decomposition and milestone-based reward signals, significantly...

LLM-based agents for web navigation face challenges in long-horizon planning and reinforcement learning fine-tuning, which are addressed through subgoal decomposition and milestone-based reward signals, significantly improving success rates over existing proprietary and open...

LLM-based agents for web navigation face challenges in long-horizon planning and reinforcement learning fine-tuning, which are addressed through subgoal decomposition and milestone-based reward signals, significantly improving success rates over existing...

LLM-based agents for web navigation face challenges in long-horizon planning and reinforcement learning fine-tuning, which are addressed through subgoal decomposition and milestone-based reward signals, significantly improving success rates over existing proprietary and open models.

LLM-based agents for web navigation face challenges in long-horizon planning and reinforcement learning fine-tuning, which are addressed through subgoal decomposition and milestone-based reward signals, significantly improving success rates over existing proprietary and open models.

The summary does not include concrete numbers, so the practical size of the gain and the tradeoff against latency or data cost are still unclear.

- Problem framing: LLM-based agents for web navigation face challenges in long-horizon planning and reinforcement learning fine-tuning, which are addressed through subgoal decomposition and milestone-based reward signals, significantly improving success...

- Method signal: LLM-based agents for web navigation face challenges in long-horizon planning and reinforcement learning fine-tuning, which are addressed through subgoal decomposition and milestone-based reward signals, significantly improving success rates...

- Evidence to watch: LLM-based agents for web navigation face challenges in long-horizon planning and reinforcement learning fine-tuning, which are addressed through subgoal decomposition and milestone-based reward signals, significantly improving success...

- Read-through priority: the PDF is available, so this is a good candidate for checking tables, ablations, and scaling tradeoffs beyond the abstract from Hugging Face Papers / arXiv.

- Problem: LLM-based agents for web navigation face challenges in long-horizon planning and reinforcement learning fine-tuning, which are addressed through subgoal decomposition and milestone-based reward signals,...

- Approach: LLM-based agents for web navigation face challenges in long-horizon planning and reinforcement learning fine-tuning, which are addressed through subgoal decomposition and milestone-based reward signals,...

- Result signal: LLM-based agents for web navigation face challenges in long-horizon planning and reinforcement learning fine-tuning, which are addressed through subgoal decomposition and milestone-based reward...

- Community traction: Hugging Face Papers shows 8 votes for this paper.

- The summary does not include concrete numbers, so the practical size of the gain and the tradeoff against latency or data cost are still unclear.

How Well Does Generative Recommendation Generalize?

TL;DR: Generative recommendation models excel at generalization tasks while item ID-based models perform better at memorization, with a complementary approach showing improved recommendation performance through adaptive...

Generative recommendation models excel at generalization tasks while item ID-based models perform better at memorization, with a complementary approach showing improved recommendation performance through adaptive combination. A widely held hypothesis for why generative...

Generative recommendation models excel at generalization tasks while item ID-based models perform better at memorization, with a complementary approach showing improved recommendation performance through adaptive combination.

We propose a simple memorization -aware indicator that adaptively combines them on a per-instance basis, leading to improved overall recommendation performance .

Generative recommendation models excel at generalization tasks while item ID-based models perform better at memorization, with a complementary approach showing improved recommendation performance through adaptive combination.

The summary does not include concrete numbers, so the practical size of the gain and the tradeoff against latency or data cost are still unclear.

- Problem framing: Generative recommendation models excel at generalization tasks while item ID-based models perform better at memorization, with a complementary approach showing improved recommendation performance through adaptive combination.

- Method signal: We propose a simple memorization -aware indicator that adaptively combines them on a per-instance basis, leading to improved overall recommendation performance .

- Evidence to watch: Generative recommendation models excel at generalization tasks while item ID-based models perform better at memorization, with a complementary approach showing improved recommendation performance through adaptive combination.

- Read-through priority: the PDF is available, so this is a good candidate for checking tables, ablations, and scaling tradeoffs beyond the abstract from Hugging Face Papers / arXiv.

- Problem: Generative recommendation models excel at generalization tasks while item ID-based models perform better at memorization, with a complementary approach showing improved recommendation performance through...

- Approach: We propose a simple memorization -aware indicator that adaptively combines them on a per-instance basis, leading to improved overall recommendation performance .

- Result signal: Generative recommendation models excel at generalization tasks while item ID-based models perform better at memorization, with a complementary approach showing improved recommendation performance...

- Community traction: Hugging Face Papers shows 8 votes for this paper.

- The summary does not include concrete numbers, so the practical size of the gain and the tradeoff against latency or data cost are still unclear.

Issue routing and exits.

The daily edition stays aligned with the rest of the site while keeping the full issue readable end to end.

Navigation

Public desks

Issue

- 03/22/2026

- 57 total analyzed

- Readable issue route