Daily Edition

The expanded edition keeps the full analyst notes, paper breakdowns, geopolitical framing, and the complete feed selected into this run.

Topic of the day.

A dedicated daily topic chosen from the strongest signals in the run, with TL;DR, why-now framing, and a fuller analyst read.

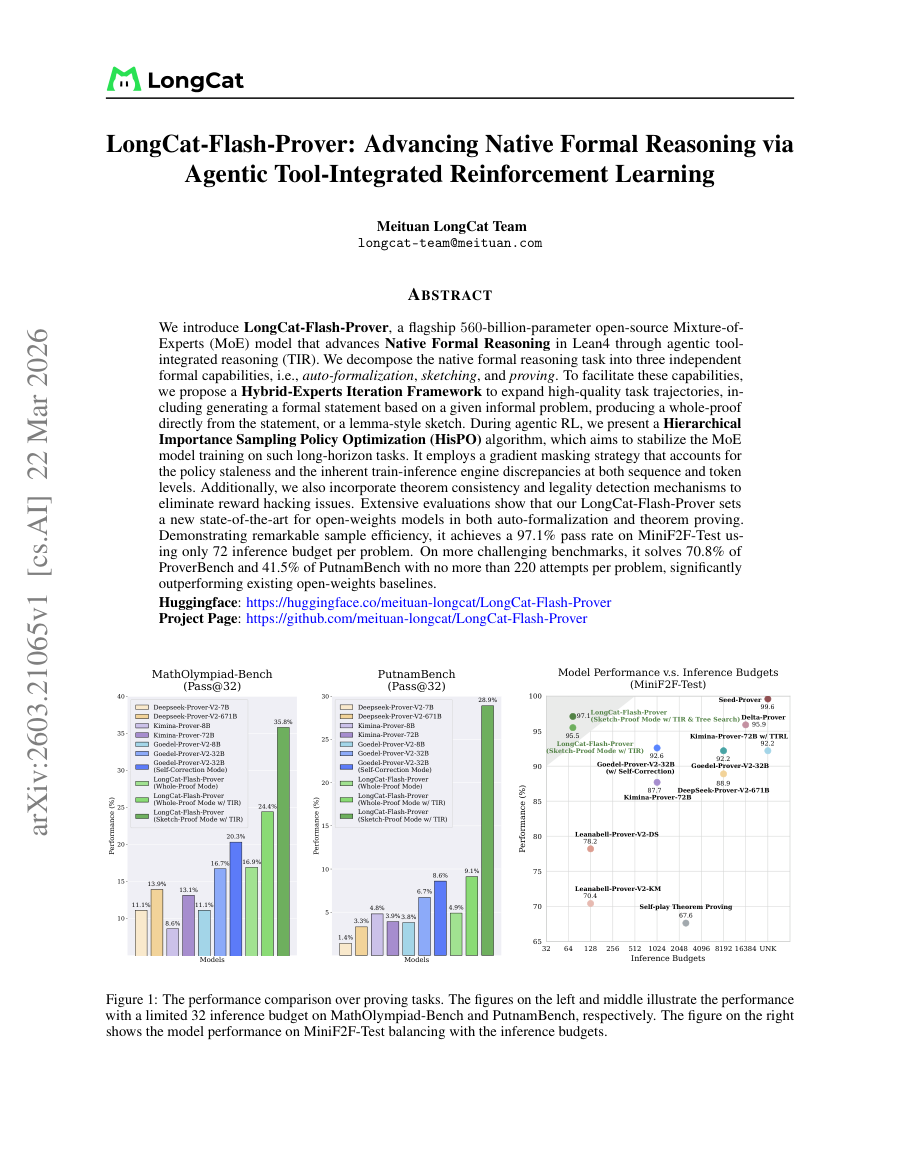

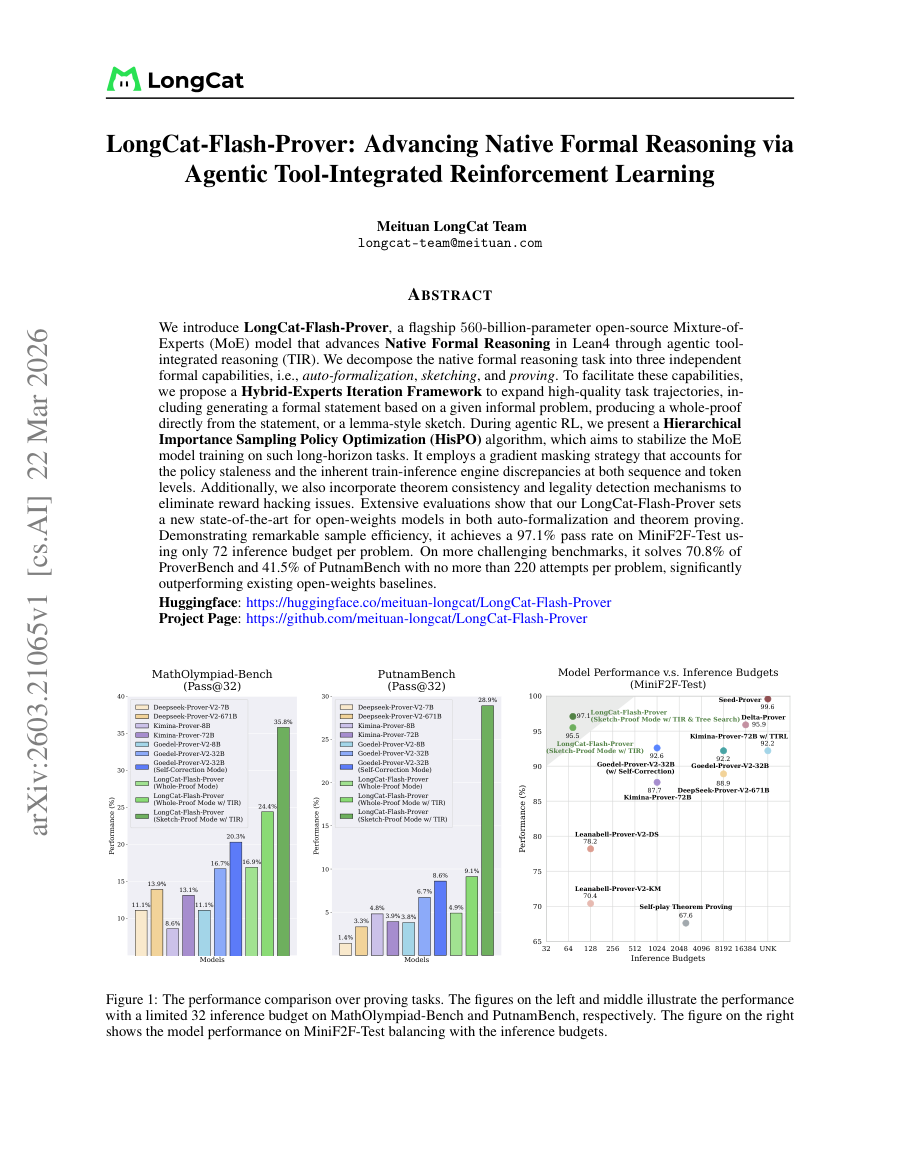

LongCat-Flash-Prover: Advancing Native Formal Reasoning via Agentic Tool-Integrated Reinforcement Learning

TL;DR: A 560B-parameter MoE model achieves new SOTA in Lean4 formal reasoning via tool-integrated reasoning and hierarchical policy optimization.

Why now: Formal verification is gaining traction for AI safety and software reliability, driving demand for stronger automated theorem provers.

LongCat-Flash-Prover demonstrates that scaling Mixture-of-Experts models with agentic tool integration can substantially improve theorem proving performance while maintaining sample efficiency. Its hierarchical policy optimization addresses training instability common in long-horizon RL tasks, and the hybrid iteration framework enriches training data via auto-formalization, sketching, and proving pathways.

- 560B-parameter MoE model with tool-integrated reasoning (TIR)

- Hybrid-Experts Iteration Framework expands

- A New Framework for Evaluating Voice Agents (EVA) (Hugging Face Blog | 03/24/2026)

- Xiaomi launches three MiMo AI models to power agents, robots, and voice (The Decoder | 03/22/2026)

- Holotron-12B - High Throughput Computer Use Agent (Hugging Face Blog | 03/17/2026)

Policy, chips, capital, and power.

Industrial strategy, compute supply, export controls, and big-company positioning shaping the AI balance of power.

Mastercard keeps tabs on fraud with new foundation model

Mastercard has developed a large tabular model (an LTM as opposed to an LLM) that’s trained on transaction data rather than text or images to help it address security and authenticity issues in digital payments. The company has trained a foundation model on billions of card...

Mastercard keeps tabs on fraud with new foundation model matters because it affects the policy, supply-chain, or security constraints around AI development, especially across security, foundation, llm.

- Primary signals: security, foundation, llm.

- Source context: AI News published or updated this item on 03/18/2026.

Holotron-12B - High Throughput Computer Use Agent

A Blog post by H company on Hugging Face

Holotron-12B - High Throughput Computer Use Agent matters because it affects the policy, supply-chain, or security constraints around AI development, especially across compute, agent.

- Primary signals: compute, agent.

- Source context: Hugging Face Blog published or updated this item on 03/17/2026.

State of Open Source on Hugging Face: Spring 2026

A Blog post by Hugging Face on Hugging Face

State of Open Source on Hugging Face: Spring 2026 matters because it affects the policy, supply-chain, or security constraints around AI development, especially across state.

- Primary signals: state.

- Source context: Hugging Face Blog published or updated this item on 03/17/2026.

Product, model, and platform movement.

Software, model, deployment, and competitive stories with the strongest operator and market signal in this edition.

A New Framework for Evaluating Voice Agents (EVA)

A Blog post by ServiceNow-AI on Hugging Face

A New Framework for Evaluating Voice Agents (EVA) matters because it signals momentum in agent, agents and may shift how teams prioritize models, tooling, or deployment choices.

- Primary signals: agent, agents.

- Source context: Hugging Face Blog published or updated this item on 03/24/2026.

Xiaomi launches three MiMo AI models to power agents, robots, and voice

Xiaomi launches three MiMo AI models to power agents, robots, and voice the-decoder.com

Xiaomi launches three MiMo AI models to power agents, robots, and voice matters because it signals momentum in agent, agents, model and may shift how teams prioritize models, tooling, or deployment choices.

- Primary signals: agent, agents, model.

- Source context: The Decoder published or updated this item on 03/22/2026.

Introducing our Science Blog

Introducing our Science Blog Anthropic

Introducing our Science Blog matters because it signals momentum in the broader AI ecosystem and may shift how teams prioritize models, tooling, or deployment choices.

- Primary signals: AI platforms and product execution.

- Source context: Anthropic Research published or updated this item on 03/24/2026.

Vibe physics: The AI grad student

Vibe physics: The AI grad student Anthropic

Vibe physics: The AI grad student matters because it signals momentum in the broader AI ecosystem and may shift how teams prioritize models, tooling, or deployment choices.

- Primary signals: AI platforms and product execution.

- Source context: Anthropic Research published or updated this item on 03/24/2026.

2025 Coding Agent Benchmark: Real-World Test of 15 AI Developer Tools

2025 Coding Agent Benchmark: Real-World Test of 15 AI Developer Tools Turing Post

2025 Coding Agent Benchmark: Real-World Test of 15 AI Developer Tools matters because it signals momentum in agent, benchmark and may shift how teams prioritize models, tooling, or deployment choices.

- Primary signals: agent, benchmark.

- Source context: Turing Post published or updated this item on 02/27/2026.

Differentiated source coverage.

Stories drawn from research blogs, first-party lab posts, practitioner newsletters, and selected technical outlets so the edition does not mirror the same headline across every source.

A New Framework for Evaluating Voice Agents (EVA)

A Blog post by ServiceNow-AI on Hugging Face

A New Framework for Evaluating Voice Agents (EVA) matters because it signals momentum in agent, agents and may shift how teams prioritize models, tooling, or deployment choices.

- Primary signals: agent, agents.

- Source context: Hugging Face Blog published or updated this item on 03/24/2026.

Creating with Sora safely

Creating with Sora safely OpenAI

Creating with Sora safely matters because it signals momentum in the broader AI ecosystem and may shift how teams prioritize models, tooling, or deployment choices.

- Primary signals: AI platforms and product execution.

- Source context: OpenAI Research published or updated this item on 03/23/2026.

Long-running Claude for scientific computing

Long-running Claude for scientific computing Anthropic

Long-running Claude for scientific computing matters because it signals momentum in the broader AI ecosystem and may shift how teams prioritize models, tooling, or deployment choices.

- Primary signals: AI platforms and product execution.

- Source context: Anthropic Research published or updated this item on 03/23/2026.

How BM25 and RAG Retrieve Information Differently?

How BM25 and RAG Retrieve Information Differently? MarkTechPost

How BM25 and RAG Retrieve Information Differently? matters because it signals momentum in the broader AI ecosystem and may shift how teams prioritize models, tooling, or deployment choices.

- Primary signals: AI platforms and product execution.

- Source context: MarkTechPost published or updated this item on 03/23/2026.

Palantir AI to support UK finance operations

UK authorities believe improving efficiency across national finance operations requires applying AI platforms from vendors like Palantir. The country’s financial regulator, the FCA, has initiated a project leveraging AI to identify illicit activities. The FCA is currently...

Palantir AI to support UK finance operations matters because it signals momentum in the broader AI ecosystem and may shift how teams prioritize models, tooling, or deployment choices.

- Primary signals: AI platforms and product execution.

- Source context: AI News published or updated this item on 03/23/2026.

Could Bumble’s Bee AI End 'Swiping Fatigue' on Dating Apps?

Could Bumble’s Bee AI End 'Swiping Fatigue' on Dating Apps? AI Magazine

Could Bumble’s Bee AI End 'Swiping Fatigue' on Dating Apps? matters because it signals momentum in the broader AI ecosystem and may shift how teams prioritize models, tooling, or deployment choices.

- Primary signals: AI platforms and product execution.

- Source context: AI Magazine published or updated this item on 03/17/2026.

The Bay Area’s animal welfare movement wants to recruit AI

The Bay Area’s animal welfare movement wants to recruit AI MIT Technology Review

The Bay Area’s animal welfare movement wants to recruit AI matters because it signals momentum in the broader AI ecosystem and may shift how teams prioritize models, tooling, or deployment choices.

- Primary signals: AI platforms and product execution.

- Source context: MIT Tech Review AI published or updated this item on 03/23/2026.

The Org Age of AI

The Org Age of AI Turing Post

The Org Age of AI matters because it signals momentum in the broader AI ecosystem and may shift how teams prioritize models, tooling, or deployment choices.

- Primary signals: AI platforms and product execution.

- Source context: Turing Post published or updated this item on 03/22/2026.

Method, limitations, and results.

Paper summaries, methodology notes, limitations, and deep-dive bullets for the research items selected into the digest.

LongCat-Flash-Prover: Advancing Native Formal Reasoning via Agentic Tool-Integrated Reinforcement Learning

TL;DR: A 560-billion-parameter Mixture-of-Experts model advances formal reasoning in Lean4 through tool-integrated reasoning with a hybrid framework and hierarchical policy optimization for stable training on long-horizon...

A 560-billion-parameter Mixture-of-Experts model advances formal reasoning in Lean4 through tool-integrated reasoning with a hybrid framework and hierarchical policy optimization for stable training on long-horizon tasks. We introduce LongCat-Flash-Prover, a flagship...

A 560-billion-parameter Mixture-of-Experts model advances formal reasoning in Lean4 through tool-integrated reasoning with a hybrid framework and hierarchical policy optimization for stable training on long-horizon tasks.

We introduce LongCat-Flash-Prover, a flagship 560-billion-parameter open-source Mixture-of- Experts (MoE) model that advances Native Formal Reasoning in Lean4 through agentic tool-integrated reasoning (TIR).

Extensive evaluations show that our LongCat-Flash-Prover sets a new state-of-the-art for open-weights models in both auto-formalization and theorem proving .

The summary does not include concrete numbers, so the practical size of the gain and the tradeoff against latency or data cost are still unclear.

- Problem framing: A 560-billion-parameter Mixture-of-Experts model advances formal reasoning in Lean4 through tool-integrated reasoning with a hybrid framework and hierarchical policy optimization for stable training on long-horizon tasks.

- Method signal: We introduce LongCat-Flash-Prover, a flagship 560-billion-parameter open-source Mixture-of- Experts (MoE) model that advances Native Formal Reasoning in Lean4 through agentic tool-integrated reasoning (TIR).

- Evidence to watch: Extensive evaluations show that our LongCat-Flash-Prover sets a new state-of-the-art for open-weights models in both auto-formalization and theorem proving .

- Read-through priority: the PDF is available, so this is a good candidate for checking tables, ablations, and scaling tradeoffs beyond the abstract from Hugging Face Papers / arXiv.

- Problem: A 560-billion-parameter Mixture-of-Experts model advances formal reasoning in Lean4 through tool-integrated reasoning with a hybrid framework and hierarchical policy optimization for stable training on...

- Approach: We introduce LongCat-Flash-Prover, a flagship 560-billion-parameter open-source Mixture-of- Experts (MoE) model that advances Native Formal Reasoning in Lean4 through agentic tool-integrated reasoning (TIR).

- Result signal: Extensive evaluations show that our LongCat-Flash-Prover sets a new state-of-the-art for open-weights models in both auto-formalization and theorem proving .

- Community traction: Hugging Face Papers shows 50 votes for this paper.

- The summary does not include concrete numbers, so the practical size of the gain and the tradeoff against latency or data cost are still unclear.

Group3D: MLLM-Driven Semantic Grouping for Open-Vocabulary 3D Object Detection

TL;DR: Group3D is a multi-view open-vocabulary 3D detection framework that integrates semantic constraints into instance construction through semantic compatibility groups, improving accuracy in pose-known and pose-free...

Group3D is a multi-view open-vocabulary 3D detection framework that integrates semantic constraints into instance construction through semantic compatibility groups, improving accuracy in pose-known and pose-free settings. Open-vocabulary 3D object detection aims to localize...

Group3D is a multi-view open-vocabulary 3D detection framework that integrates semantic constraints into instance construction through semantic compatibility groups, improving accuracy in pose-known and pose-free settings.

We propose Group3D, a multi-view open-vocabulary 3D detection framework that integrates semantic constraints directly into the instance construction process.

Group3D is a multi-view open-vocabulary 3D detection framework that integrates semantic constraints into instance construction through semantic compatibility groups, improving accuracy in pose-known and pose-free settings.

The reported improvement still needs a closer check on benchmark scope, ablations, and whether the method keeps working outside the authors' evaluation setup.

- Problem framing: Group3D is a multi-view open-vocabulary 3D detection framework that integrates semantic constraints into instance construction through semantic compatibility groups, improving accuracy in pose-known and pose-free settings.

- Method signal: We propose Group3D, a multi-view open-vocabulary 3D detection framework that integrates semantic constraints directly into the instance construction process.

- Evidence to watch: Group3D is a multi-view open-vocabulary 3D detection framework that integrates semantic constraints into instance construction through semantic compatibility groups, improving accuracy in pose-known and pose-free settings.

- Read-through priority: the PDF is available, so this is a good candidate for checking tables, ablations, and scaling tradeoffs beyond the abstract from Hugging Face Papers / arXiv.

- Problem: Group3D is a multi-view open-vocabulary 3D detection framework that integrates semantic constraints into instance construction through semantic compatibility groups, improving accuracy in pose-known and...

- Approach: We propose Group3D, a multi-view open-vocabulary 3D detection framework that integrates semantic constraints directly into the instance construction process.

- Result signal: Group3D is a multi-view open-vocabulary 3D detection framework that integrates semantic constraints into instance construction through semantic compatibility groups, improving accuracy in pose-known...

- Community traction: Hugging Face Papers shows 15 votes for this paper.

- The reported improvement still needs a closer check on benchmark scope, ablations, and whether the method keeps working outside the authors' evaluation setup.

Speed by Simplicity: A Single-Stream Architecture for Fast Audio-Video Generative Foundation Model

TL;DR: daVinci-MagiHuman is an open-source audio-video generative model that synchronizes text, video, and audio through a single-stream Transformer architecture, achieving high-quality human-centric content generation with...

daVinci-MagiHuman is an open-source audio-video generative model that synchronizes text, video, and audio through a single-stream Transformer architecture, achieving high-quality human-centric content generation with efficient inference capabilities. We present...

daVinci-MagiHuman is an open-source audio-video generative model that synchronizes text, video, and audio through a single-stream Transformer architecture, achieving high-quality human-centric content generation with efficient inference capabilities.

We present daVinci-MagiHuman, an open-source audio-video generative foundation model for human-centric generation. daVinci-MagiHuman jointly generates synchronized video and audio using a single-stream Transformer that processes text, video, and audio within a unified token sequence via self-attention only.

The model is particularly strong in human-centric scenarios, producing expressive facial performance, natural speech-expression coordination, realistic body motion, and precise audio-video synchronization.

The summary does not include concrete numbers, so the practical size of the gain and the tradeoff against latency or data cost are still unclear.

- Problem framing: daVinci-MagiHuman is an open-source audio-video generative model that synchronizes text, video, and audio through a single-stream Transformer architecture, achieving high-quality human-centric content generation with efficient inference...

- Method signal: We present daVinci-MagiHuman, an open-source audio-video generative foundation model for human-centric generation. daVinci-MagiHuman jointly generates synchronized video and audio using a single-stream Transformer that processes text, video,...

- Evidence to watch: The model is particularly strong in human-centric scenarios, producing expressive facial performance, natural speech-expression coordination, realistic body motion, and precise audio-video synchronization.

- Read-through priority: the PDF is available, so this is a good candidate for checking tables, ablations, and scaling tradeoffs beyond the abstract from Hugging Face Papers / arXiv.

- Problem: daVinci-MagiHuman is an open-source audio-video generative model that synchronizes text, video, and audio through a single-stream Transformer architecture, achieving high-quality human-centric content...

- Approach: We present daVinci-MagiHuman, an open-source audio-video generative foundation model for human-centric generation. daVinci-MagiHuman jointly generates synchronized video and audio using a single-stream...

- Result signal: The model is particularly strong in human-centric scenarios, producing expressive facial performance, natural speech-expression coordination, realistic body motion, and precise audio-video synchronization.

- Community traction: Hugging Face Papers shows 28 votes for this paper.

- The summary does not include concrete numbers, so the practical size of the gain and the tradeoff against latency or data cost are still unclear.

VideoDetective: Clue Hunting via both Extrinsic Query and Intrinsic Relevance for Long Video Understanding

TL;DR: VideoDetective framework improves long video understanding by integrating query-to-segment relevance and inter-segment affinity through visual-temporal graphs and hypothesis verification loops.

VideoDetective framework improves long video understanding by integrating query-to-segment relevance and inter-segment affinity through visual-temporal graphs and hypothesis verification loops. Long video understanding remains challenging for multimodal large language models...

VideoDetective framework improves long video understanding by integrating query-to-segment relevance and inter-segment affinity through visual-temporal graphs and hypothesis verification loops.

To address this, we propose VideoDetective, a framework that integrates query-to-segment relevance and inter-segment affinity for effective clue hunting in long- video question answering .

VideoDetective framework improves long video understanding by integrating query-to-segment relevance and inter-segment affinity through visual-temporal graphs and hypothesis verification loops.

The summary does not include concrete numbers, so the practical size of the gain and the tradeoff against latency or data cost are still unclear.

- Problem framing: VideoDetective framework improves long video understanding by integrating query-to-segment relevance and inter-segment affinity through visual-temporal graphs and hypothesis verification loops.

- Method signal: To address this, we propose VideoDetective, a framework that integrates query-to-segment relevance and inter-segment affinity for effective clue hunting in long- video question answering .

- Evidence to watch: VideoDetective framework improves long video understanding by integrating query-to-segment relevance and inter-segment affinity through visual-temporal graphs and hypothesis verification loops.

- Read-through priority: the PDF is available, so this is a good candidate for checking tables, ablations, and scaling tradeoffs beyond the abstract from Hugging Face Papers / arXiv.

- Problem: VideoDetective framework improves long video understanding by integrating query-to-segment relevance and inter-segment affinity through visual-temporal graphs and hypothesis verification loops.

- Approach: To address this, we propose VideoDetective, a framework that integrates query-to-segment relevance and inter-segment affinity for effective clue hunting in long- video question answering .

- Result signal: VideoDetective framework improves long video understanding by integrating query-to-segment relevance and inter-segment affinity through visual-temporal graphs and hypothesis verification loops.

- Community traction: Hugging Face Papers shows 33 votes for this paper.

- The summary does not include concrete numbers, so the practical size of the gain and the tradeoff against latency or data cost are still unclear.

mSFT: Addressing Dataset Mixtures Overfiting Heterogeneously in Multi-task SFT

TL;DR: Multi-task supervised fine-tuning with heterogeneous learning dynamics benefits from an iterative overfitting-aware search algorithm that improves performance across diverse datasets and compute budgets.

Multi-task supervised fine-tuning with heterogeneous learning dynamics benefits from an iterative overfitting-aware search algorithm that improves performance across diverse datasets and compute budgets. Current language model training commonly applies multi-task Supervised...

Multi-task supervised fine-tuning with heterogeneous learning dynamics benefits from an iterative overfitting-aware search algorithm that improves performance across diverse datasets and compute budgets.

To address this, we introduce m SFT , an iterative, overfitting -aware search algorithm for multi-task data mixtures . m SFT trains the model on an active mixture, identifies and excludes the earliest overfitting sub-dataset, and reverts to that specific optimal checkpoint before continuing.

Multi-task supervised fine-tuning with heterogeneous learning dynamics benefits from an iterative overfitting-aware search algorithm that improves performance across diverse datasets and compute budgets.

The summary does not include concrete numbers, so the practical size of the gain and the tradeoff against latency or data cost are still unclear.

- Problem framing: Multi-task supervised fine-tuning with heterogeneous learning dynamics benefits from an iterative overfitting-aware search algorithm that improves performance across diverse datasets and compute budgets.

- Method signal: To address this, we introduce m SFT , an iterative, overfitting -aware search algorithm for multi-task data mixtures . m SFT trains the model on an active mixture, identifies and excludes the earliest overfitting sub-dataset, and reverts to...

- Evidence to watch: Multi-task supervised fine-tuning with heterogeneous learning dynamics benefits from an iterative overfitting-aware search algorithm that improves performance across diverse datasets and compute budgets.

- Read-through priority: the PDF is available, so this is a good candidate for checking tables, ablations, and scaling tradeoffs beyond the abstract from Hugging Face Papers / arXiv.

- Problem: Multi-task supervised fine-tuning with heterogeneous learning dynamics benefits from an iterative overfitting-aware search algorithm that improves performance across diverse datasets and compute budgets.

- Approach: To address this, we introduce m SFT , an iterative, overfitting -aware search algorithm for multi-task data mixtures . m SFT trains the model on an active mixture, identifies and excludes the earliest...

- Result signal: Multi-task supervised fine-tuning with heterogeneous learning dynamics benefits from an iterative overfitting-aware search algorithm that improves performance across diverse datasets and compute budgets.

- Community traction: Hugging Face Papers shows 19 votes for this paper.

- The summary does not include concrete numbers, so the practical size of the gain and the tradeoff against latency or data cost are still unclear.

Everything selected into the run.

The complete analyzed stream for the issue, useful when you want to scan the entire run instead of only the curated front page.

A New Framework for Evaluating Voice Agents (EVA)

A Blog post by ServiceNow-AI on Hugging Face

A New Framework for Evaluating Voice Agents (EVA) matters because it signals momentum in agent, agents and may shift how teams prioritize models, tooling, or deployment choices.

- Primary signals: agent, agents.

- Source context: Hugging Face Blog published or updated this item on 03/24/2026.

Xiaomi launches three MiMo AI models to power agents, robots, and voice

Xiaomi launches three MiMo AI models to power agents, robots, and voice the-decoder.com

Xiaomi launches three MiMo AI models to power agents, robots, and voice matters because it signals momentum in agent, agents, model and may shift how teams prioritize models, tooling, or deployment choices.

- Primary signals: agent, agents, model.

- Source context: The Decoder published or updated this item on 03/22/2026.

Introducing our Science Blog

Introducing our Science Blog Anthropic

Introducing our Science Blog matters because it signals momentum in the broader AI ecosystem and may shift how teams prioritize models, tooling, or deployment choices.

- Primary signals: AI platforms and product execution.

- Source context: Anthropic Research published or updated this item on 03/24/2026.

Vibe physics: The AI grad student

Vibe physics: The AI grad student Anthropic

Vibe physics: The AI grad student matters because it signals momentum in the broader AI ecosystem and may shift how teams prioritize models, tooling, or deployment choices.

- Primary signals: AI platforms and product execution.

- Source context: Anthropic Research published or updated this item on 03/24/2026.

2025 Coding Agent Benchmark: Real-World Test of 15 AI Developer Tools

2025 Coding Agent Benchmark: Real-World Test of 15 AI Developer Tools Turing Post

2025 Coding Agent Benchmark: Real-World Test of 15 AI Developer Tools matters because it signals momentum in agent, benchmark and may shift how teams prioritize models, tooling, or deployment choices.

- Primary signals: agent, benchmark.

- Source context: Turing Post published or updated this item on 02/27/2026.

OpenAI publishes a prompting playbook that helps designers get better frontend results from GPT-5.4

OpenAI publishes a prompting playbook that helps designers get better frontend results from GPT-5.4 the-decoder.com

OpenAI publishes a prompting playbook that helps designers get better frontend results from GPT-5.4 matters because it signals momentum in gpt and may shift how teams prioritize models, tooling, or deployment choices.

- Primary signals: gpt.

- Source context: The Decoder published or updated this item on 03/22/2026.

Creating with Sora safely

Creating with Sora safely OpenAI

Creating with Sora safely matters because it signals momentum in the broader AI ecosystem and may shift how teams prioritize models, tooling, or deployment choices.

- Primary signals: AI platforms and product execution.

- Source context: OpenAI Research published or updated this item on 03/23/2026.

How BM25 and RAG Retrieve Information Differently?

How BM25 and RAG Retrieve Information Differently? MarkTechPost

How BM25 and RAG Retrieve Information Differently? matters because it signals momentum in the broader AI ecosystem and may shift how teams prioritize models, tooling, or deployment choices.

- Primary signals: AI platforms and product execution.

- Source context: MarkTechPost published or updated this item on 03/23/2026.

Long-running Claude for scientific computing

Long-running Claude for scientific computing Anthropic

Long-running Claude for scientific computing matters because it signals momentum in the broader AI ecosystem and may shift how teams prioritize models, tooling, or deployment choices.

- Primary signals: AI platforms and product execution.

- Source context: Anthropic Research published or updated this item on 03/23/2026.

Palantir AI to support UK finance operations

UK authorities believe improving efficiency across national finance operations requires applying AI platforms from vendors like Palantir. The country’s financial regulator, the FCA, has initiated a project leveraging AI to identify illicit activities. The FCA is currently...

Palantir AI to support UK finance operations matters because it signals momentum in the broader AI ecosystem and may shift how teams prioritize models, tooling, or deployment choices.

- Primary signals: AI platforms and product execution.

- Source context: AI News published or updated this item on 03/23/2026.

The Bay Area’s animal welfare movement wants to recruit AI

The Bay Area’s animal welfare movement wants to recruit AI MIT Technology Review

The Bay Area’s animal welfare movement wants to recruit AI matters because it signals momentum in the broader AI ecosystem and may shift how teams prioritize models, tooling, or deployment choices.

- Primary signals: AI platforms and product execution.

- Source context: MIT Tech Review AI published or updated this item on 03/23/2026.

The hardest question to answer about AI-fueled delusions

The hardest question to answer about AI-fueled delusions MIT Technology Review

The hardest question to answer about AI-fueled delusions matters because it signals momentum in the broader AI ecosystem and may shift how teams prioritize models, tooling, or deployment choices.

- Primary signals: AI platforms and product execution.

- Source context: MIT Tech Review AI published or updated this item on 03/23/2026.

A Coding Implementation to Build an Uncertainty-Aware LLM System with Confidence Estimation, Self-Evaluation, and Automatic Web Research

A Coding Implementation to Build an Uncertainty-Aware LLM System with Confidence Estimation, Self-Evaluation, and Automatic Web Research MarkTechPost

A Coding Implementation to Build an Uncertainty-Aware LLM System with Confidence Estimation, Self-Evaluation, and Automatic Web Research matters because it signals momentum in llm and may shift how teams prioritize models, tooling, or deployment choices.

- Primary signals: llm.

- Source context: MarkTechPost published or updated this item on 03/21/2026.

Cursor quietly built its new coding model on top of Chinese open-source Kimi K2.5

Cursor quietly built its new coding model on top of Chinese open-source Kimi K2.5 the-decoder.com

Cursor quietly built its new coding model on top of Chinese open-source Kimi K2.5 matters because it signals momentum in model and may shift how teams prioritize models, tooling, or deployment choices.

- Primary signals: model.

- Source context: The Decoder published or updated this item on 03/21/2026.

The Org Age of AI

The Org Age of AI Turing Post

The Org Age of AI matters because it signals momentum in the broader AI ecosystem and may shift how teams prioritize models, tooling, or deployment choices.

- Primary signals: AI platforms and product execution.

- Source context: Turing Post published or updated this item on 03/22/2026.

Could Bumble’s Bee AI End 'Swiping Fatigue' on Dating Apps?

Could Bumble’s Bee AI End 'Swiping Fatigue' on Dating Apps? AI Magazine

Could Bumble’s Bee AI End 'Swiping Fatigue' on Dating Apps? matters because it signals momentum in the broader AI ecosystem and may shift how teams prioritize models, tooling, or deployment choices.

- Primary signals: AI platforms and product execution.

- Source context: AI Magazine published or updated this item on 03/17/2026.

Mastercard keeps tabs on fraud with new foundation model

Mastercard has developed a large tabular model (an LTM as opposed to an LLM) that’s trained on transaction data rather than text or images to help it address security and authenticity issues in digital payments. The company has trained a foundation model on billions of card...

Mastercard keeps tabs on fraud with new foundation model matters because it affects the policy, supply-chain, or security constraints around AI development, especially across security, foundation, llm.

- Primary signals: security, foundation, llm.

- Source context: AI News published or updated this item on 03/18/2026.

Holotron-12B - High Throughput Computer Use Agent

A Blog post by H company on Hugging Face

Holotron-12B - High Throughput Computer Use Agent matters because it affects the policy, supply-chain, or security constraints around AI development, especially across compute, agent.

- Primary signals: compute, agent.

- Source context: Hugging Face Blog published or updated this item on 03/17/2026.

State of Open Source on Hugging Face: Spring 2026

A Blog post by Hugging Face on Hugging Face

State of Open Source on Hugging Face: Spring 2026 matters because it affects the policy, supply-chain, or security constraints around AI development, especially across state.

- Primary signals: state.

- Source context: Hugging Face Blog published or updated this item on 03/17/2026.

LongCat-Flash-Prover: Advancing Native Formal Reasoning via Agentic Tool-Integrated Reinforcement Learning

TL;DR: A 560-billion-parameter Mixture-of-Experts model advances formal reasoning in Lean4 through tool-integrated reasoning with a hybrid framework and hierarchical policy optimization for stable training on long-horizon...

A 560-billion-parameter Mixture-of-Experts model advances formal reasoning in Lean4 through tool-integrated reasoning with a hybrid framework and hierarchical policy optimization for stable training on long-horizon tasks. We introduce LongCat-Flash-Prover, a flagship...

A 560-billion-parameter Mixture-of-Experts model advances formal reasoning in Lean4 through tool-integrated reasoning with a hybrid framework and hierarchical policy optimization for stable training on long-horizon tasks.

We introduce LongCat-Flash-Prover, a flagship 560-billion-parameter open-source Mixture-of- Experts (MoE) model that advances Native Formal Reasoning in Lean4 through agentic tool-integrated reasoning (TIR).

Extensive evaluations show that our LongCat-Flash-Prover sets a new state-of-the-art for open-weights models in both auto-formalization and theorem proving .

The summary does not include concrete numbers, so the practical size of the gain and the tradeoff against latency or data cost are still unclear.

- Problem framing: A 560-billion-parameter Mixture-of-Experts model advances formal reasoning in Lean4 through tool-integrated reasoning with a hybrid framework and hierarchical policy optimization for stable training on long-horizon tasks.

- Method signal: We introduce LongCat-Flash-Prover, a flagship 560-billion-parameter open-source Mixture-of- Experts (MoE) model that advances Native Formal Reasoning in Lean4 through agentic tool-integrated reasoning (TIR).

- Evidence to watch: Extensive evaluations show that our LongCat-Flash-Prover sets a new state-of-the-art for open-weights models in both auto-formalization and theorem proving .

- Read-through priority: the PDF is available, so this is a good candidate for checking tables, ablations, and scaling tradeoffs beyond the abstract from Hugging Face Papers / arXiv.

- Problem: A 560-billion-parameter Mixture-of-Experts model advances formal reasoning in Lean4 through tool-integrated reasoning with a hybrid framework and hierarchical policy optimization for stable training on...

- Approach: We introduce LongCat-Flash-Prover, a flagship 560-billion-parameter open-source Mixture-of- Experts (MoE) model that advances Native Formal Reasoning in Lean4 through agentic tool-integrated reasoning (TIR).

- Result signal: Extensive evaluations show that our LongCat-Flash-Prover sets a new state-of-the-art for open-weights models in both auto-formalization and theorem proving .

- Community traction: Hugging Face Papers shows 50 votes for this paper.

- The summary does not include concrete numbers, so the practical size of the gain and the tradeoff against latency or data cost are still unclear.

Group3D: MLLM-Driven Semantic Grouping for Open-Vocabulary 3D Object Detection

TL;DR: Group3D is a multi-view open-vocabulary 3D detection framework that integrates semantic constraints into instance construction through semantic compatibility groups, improving accuracy in pose-known and pose-free...

Group3D is a multi-view open-vocabulary 3D detection framework that integrates semantic constraints into instance construction through semantic compatibility groups, improving accuracy in pose-known and pose-free settings. Open-vocabulary 3D object detection aims to localize...

Group3D is a multi-view open-vocabulary 3D detection framework that integrates semantic constraints into instance construction through semantic compatibility groups, improving accuracy in pose-known and pose-free settings.

We propose Group3D, a multi-view open-vocabulary 3D detection framework that integrates semantic constraints directly into the instance construction process.

Group3D is a multi-view open-vocabulary 3D detection framework that integrates semantic constraints into instance construction through semantic compatibility groups, improving accuracy in pose-known and pose-free settings.

The reported improvement still needs a closer check on benchmark scope, ablations, and whether the method keeps working outside the authors' evaluation setup.

- Problem framing: Group3D is a multi-view open-vocabulary 3D detection framework that integrates semantic constraints into instance construction through semantic compatibility groups, improving accuracy in pose-known and pose-free settings.

- Method signal: We propose Group3D, a multi-view open-vocabulary 3D detection framework that integrates semantic constraints directly into the instance construction process.

- Evidence to watch: Group3D is a multi-view open-vocabulary 3D detection framework that integrates semantic constraints into instance construction through semantic compatibility groups, improving accuracy in pose-known and pose-free settings.

- Read-through priority: the PDF is available, so this is a good candidate for checking tables, ablations, and scaling tradeoffs beyond the abstract from Hugging Face Papers / arXiv.

- Problem: Group3D is a multi-view open-vocabulary 3D detection framework that integrates semantic constraints into instance construction through semantic compatibility groups, improving accuracy in pose-known and...

- Approach: We propose Group3D, a multi-view open-vocabulary 3D detection framework that integrates semantic constraints directly into the instance construction process.

- Result signal: Group3D is a multi-view open-vocabulary 3D detection framework that integrates semantic constraints into instance construction through semantic compatibility groups, improving accuracy in pose-known...

- Community traction: Hugging Face Papers shows 15 votes for this paper.

- The reported improvement still needs a closer check on benchmark scope, ablations, and whether the method keeps working outside the authors' evaluation setup.

Speed by Simplicity: A Single-Stream Architecture for Fast Audio-Video Generative Foundation Model

TL;DR: daVinci-MagiHuman is an open-source audio-video generative model that synchronizes text, video, and audio through a single-stream Transformer architecture, achieving high-quality human-centric content generation with...

daVinci-MagiHuman is an open-source audio-video generative model that synchronizes text, video, and audio through a single-stream Transformer architecture, achieving high-quality human-centric content generation with efficient inference capabilities. We present...

daVinci-MagiHuman is an open-source audio-video generative model that synchronizes text, video, and audio through a single-stream Transformer architecture, achieving high-quality human-centric content generation with efficient inference capabilities.

We present daVinci-MagiHuman, an open-source audio-video generative foundation model for human-centric generation. daVinci-MagiHuman jointly generates synchronized video and audio using a single-stream Transformer that processes text, video, and audio within a unified token sequence via self-attention only.

The model is particularly strong in human-centric scenarios, producing expressive facial performance, natural speech-expression coordination, realistic body motion, and precise audio-video synchronization.

The summary does not include concrete numbers, so the practical size of the gain and the tradeoff against latency or data cost are still unclear.

- Problem framing: daVinci-MagiHuman is an open-source audio-video generative model that synchronizes text, video, and audio through a single-stream Transformer architecture, achieving high-quality human-centric content generation with efficient inference...

- Method signal: We present daVinci-MagiHuman, an open-source audio-video generative foundation model for human-centric generation. daVinci-MagiHuman jointly generates synchronized video and audio using a single-stream Transformer that processes text, video,...

- Evidence to watch: The model is particularly strong in human-centric scenarios, producing expressive facial performance, natural speech-expression coordination, realistic body motion, and precise audio-video synchronization.

- Read-through priority: the PDF is available, so this is a good candidate for checking tables, ablations, and scaling tradeoffs beyond the abstract from Hugging Face Papers / arXiv.

- Problem: daVinci-MagiHuman is an open-source audio-video generative model that synchronizes text, video, and audio through a single-stream Transformer architecture, achieving high-quality human-centric content...

- Approach: We present daVinci-MagiHuman, an open-source audio-video generative foundation model for human-centric generation. daVinci-MagiHuman jointly generates synchronized video and audio using a single-stream...

- Result signal: The model is particularly strong in human-centric scenarios, producing expressive facial performance, natural speech-expression coordination, realistic body motion, and precise audio-video synchronization.

- Community traction: Hugging Face Papers shows 28 votes for this paper.

- The summary does not include concrete numbers, so the practical size of the gain and the tradeoff against latency or data cost are still unclear.

VideoDetective: Clue Hunting via both Extrinsic Query and Intrinsic Relevance for Long Video Understanding

TL;DR: VideoDetective framework improves long video understanding by integrating query-to-segment relevance and inter-segment affinity through visual-temporal graphs and hypothesis verification loops.

VideoDetective framework improves long video understanding by integrating query-to-segment relevance and inter-segment affinity through visual-temporal graphs and hypothesis verification loops. Long video understanding remains challenging for multimodal large language models...

VideoDetective framework improves long video understanding by integrating query-to-segment relevance and inter-segment affinity through visual-temporal graphs and hypothesis verification loops.

To address this, we propose VideoDetective, a framework that integrates query-to-segment relevance and inter-segment affinity for effective clue hunting in long- video question answering .

VideoDetective framework improves long video understanding by integrating query-to-segment relevance and inter-segment affinity through visual-temporal graphs and hypothesis verification loops.

The summary does not include concrete numbers, so the practical size of the gain and the tradeoff against latency or data cost are still unclear.

- Problem framing: VideoDetective framework improves long video understanding by integrating query-to-segment relevance and inter-segment affinity through visual-temporal graphs and hypothesis verification loops.

- Method signal: To address this, we propose VideoDetective, a framework that integrates query-to-segment relevance and inter-segment affinity for effective clue hunting in long- video question answering .

- Evidence to watch: VideoDetective framework improves long video understanding by integrating query-to-segment relevance and inter-segment affinity through visual-temporal graphs and hypothesis verification loops.

- Read-through priority: the PDF is available, so this is a good candidate for checking tables, ablations, and scaling tradeoffs beyond the abstract from Hugging Face Papers / arXiv.

- Problem: VideoDetective framework improves long video understanding by integrating query-to-segment relevance and inter-segment affinity through visual-temporal graphs and hypothesis verification loops.

- Approach: To address this, we propose VideoDetective, a framework that integrates query-to-segment relevance and inter-segment affinity for effective clue hunting in long- video question answering .

- Result signal: VideoDetective framework improves long video understanding by integrating query-to-segment relevance and inter-segment affinity through visual-temporal graphs and hypothesis verification loops.

- Community traction: Hugging Face Papers shows 33 votes for this paper.

- The summary does not include concrete numbers, so the practical size of the gain and the tradeoff against latency or data cost are still unclear.

mSFT: Addressing Dataset Mixtures Overfiting Heterogeneously in Multi-task SFT

TL;DR: Multi-task supervised fine-tuning with heterogeneous learning dynamics benefits from an iterative overfitting-aware search algorithm that improves performance across diverse datasets and compute budgets.

Multi-task supervised fine-tuning with heterogeneous learning dynamics benefits from an iterative overfitting-aware search algorithm that improves performance across diverse datasets and compute budgets. Current language model training commonly applies multi-task Supervised...

Multi-task supervised fine-tuning with heterogeneous learning dynamics benefits from an iterative overfitting-aware search algorithm that improves performance across diverse datasets and compute budgets.

To address this, we introduce m SFT , an iterative, overfitting -aware search algorithm for multi-task data mixtures . m SFT trains the model on an active mixture, identifies and excludes the earliest overfitting sub-dataset, and reverts to that specific optimal checkpoint before continuing.

Multi-task supervised fine-tuning with heterogeneous learning dynamics benefits from an iterative overfitting-aware search algorithm that improves performance across diverse datasets and compute budgets.

The summary does not include concrete numbers, so the practical size of the gain and the tradeoff against latency or data cost are still unclear.

- Problem framing: Multi-task supervised fine-tuning with heterogeneous learning dynamics benefits from an iterative overfitting-aware search algorithm that improves performance across diverse datasets and compute budgets.

- Method signal: To address this, we introduce m SFT , an iterative, overfitting -aware search algorithm for multi-task data mixtures . m SFT trains the model on an active mixture, identifies and excludes the earliest overfitting sub-dataset, and reverts to...

- Evidence to watch: Multi-task supervised fine-tuning with heterogeneous learning dynamics benefits from an iterative overfitting-aware search algorithm that improves performance across diverse datasets and compute budgets.

- Read-through priority: the PDF is available, so this is a good candidate for checking tables, ablations, and scaling tradeoffs beyond the abstract from Hugging Face Papers / arXiv.

- Problem: Multi-task supervised fine-tuning with heterogeneous learning dynamics benefits from an iterative overfitting-aware search algorithm that improves performance across diverse datasets and compute budgets.

- Approach: To address this, we introduce m SFT , an iterative, overfitting -aware search algorithm for multi-task data mixtures . m SFT trains the model on an active mixture, identifies and excludes the earliest...

- Result signal: Multi-task supervised fine-tuning with heterogeneous learning dynamics benefits from an iterative overfitting-aware search algorithm that improves performance across diverse datasets and compute budgets.

- Community traction: Hugging Face Papers shows 19 votes for this paper.

- The summary does not include concrete numbers, so the practical size of the gain and the tradeoff against latency or data cost are still unclear.

Issue routing and exits.

The daily edition stays aligned with the rest of the site while keeping the full issue readable end to end.

Navigation

Public desks

Issue

- 03/24/2026

- 24 total analyzed

- Readable issue route